Vermont’s first neighborhood-scale geothermal project is expected to break ground this summer as part of an affordable housing development, providing what developers hope is a blueprint for cost-effective, all-electric new construction in the Green Mountain State and beyond.

“We are decarbonizing and providing the natural energy of the earth to heat and cool our buildings,” said Amy Demetrowitz, chief operating officer of Champlain Housing Trust, one of the nonprofit developers behind the project. “The model is as awesome and as simple as that.”

Across the country, states with ambitious climate goals are looking for ways to cut emissions by weaning their buildings off natural gas and oil heat. Geothermal loops have emerged as a promising solution. These systems use emissions-free electric heat pumps to transfer thermal energy into and out of the earth, and deliver it to multiple households — not unlike pipes carrying water to homes across a neighborhood.

In 2024, utility Eversource launched a geothermal network in Framingham, Massachusetts, that includes some 140 retrofitted buildings; an expansion that will double the network’s size is in development. Work is underway in New Haven, Connecticut, on a geothermal system that will serve the city’s historic train station as well as about 1,000 units of public housing planned nearby.

The Vermont project is smaller; it will heat and cool 36 units at the Riggs Meadow development in the northern town of Hinesburg. An additional eight units and an on-site childcare center will have air-source heat pumps.

The geothermal project will have 12 to 16 boreholes drilled as far as 400 feet into the ground, where the temperature is a steady 45 to 50 degrees Fahrenheit year-round. In the cold weather, liquid pumped down these narrow wells will pick up heat from the earth and deliver it to the buildings above. In hot weather, the process will be reversed, with the system cooling the buildings by transferring heat back into the ground.

Champlain Housing Trust and Evernorth — another affordable housing developer partnering on the project — will foot the bill for the interior equipment, while utility Vermont Gas Systems will pay for and own the in-ground infrastructure, covering an estimated $275,000 in up-front costs that could be hard for a nonprofit like the trust to manage. Champlain Housing Trust, which covers utilities for tenants, will pay Vermont Gas a monthly “geothermal access fee” of $25 to $35 per unit to offset this spending.
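For a rough sense of scale, the figures above can be turned into a simple payback estimate. The sketch below is a back-of-envelope calculation, not the utility's own model: it assumes the fee is paid on all 36 geothermal units and ignores interest, maintenance, and inflation, none of which the story specifies.

```python
# Back-of-envelope payback estimate using only figures quoted in the story.
# Assumptions (not from the source): the fee is paid on all 36 geothermal
# units, and interest, maintenance, and inflation are ignored.

UPFRONT_COST = 275_000        # estimated in-ground cost to Vermont Gas, USD
GEOTHERMAL_UNITS = 36         # units served by the geothermal loops
FEE_RANGE = (25, 35)          # monthly access fee per unit, USD


def simple_payback_years(upfront, units, monthly_fee):
    """Years of fee revenue needed to equal the up-front cost."""
    annual_revenue = units * monthly_fee * 12
    return upfront / annual_revenue


for fee in FEE_RANGE:
    years = simple_payback_years(UPFRONT_COST, GEOTHERMAL_UNITS, fee)
    print(f"${fee}/unit/month -> about {years:.1f} years")
```

Under those assumptions, the access fee would take roughly 18 to 25 years to match the up-front cost.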

“It’s not going to be wildly profitable for us, but it’s going to be a valuable learning experience as we figure out how we’re going to grow this over time,” said Neale Lunderville, president and CEO of Vermont Gas.

The plan took root in 2022 when Jan Blomstrann, former chair and CEO of Hinesburg-based renewable energy firm NRG Systems, donated 46 acres of land to Champlain Housing Trust for affordable housing development, specifying that she wanted the project to use renewable energy. The organization was already working toward decarbonizing its developments, so the request was a natural fit, Demetrowitz said.

At the time, Vermont Gas was considering ways to expand its offerings and keep its business strong as the future of natural gas becomes more uncertain in the face of climate regulations and shifting consumer demand. Currently, Vermont households rely heavily on fossil fuels to stay warm, but the state has a mandate to reduce greenhouse gas emissions by 80% by 2050 from 1990 levels. Decarbonizing home heating is a major element of the state climate plan.

With all that in mind, the utility in 2022 launched a program to sell or lease air-source heat pumps to customers. Geothermal seemed like an obvious next step, building on the company’s existing strengths, including managing long-term investments and installing and managing underground infrastructure.

“We’re really good at providing thermal energy services,” said Morgan Hood, director of product management for Vermont Gas. “There’s a lot of commonality with geothermal.”

While other projects, like the one in Framingham, have retrofitted existing neighborhoods to use geothermal, Vermont’s more dispersed population offers few places where enough households are close enough together for such an effort. Vermont Gas, therefore, set its sights on new construction.

Vermont Gas received a federal grant to study the feasibility of using a geothermal network at the Riggs Meadow development and to design the system. A second grant through the same program was expected to help pay for construction, but the Trump administration froze the funds, putting the project in limbo.

Instead of giving up, the team adapted. The original plan was for a geothermal network, a system that manages the diverse thermal needs of its different members: For example, the heat extracted by cooling a neighborhood ice rink might be used to warm an adjacent apartment building.

That initial scheme would have allowed Vermont Gas to learn valuable lessons about designing and managing a geothermal network, but the housing development didn’t actually require that level of complexity — generally, all the units would need either heating at the same time or cooling at the same time. This uniformity allowed Vermont Gas to shift to a simplified, lower-cost plan: Four buildings will each be served by their own geothermal loop. The company also decided not to pursue federal tax credits, as the cost of complying with the eligibility requirements would have outweighed the benefit.

“We had to pivot,” Hood said. “We needed to cut costs so we could still charge the customer base an acceptable amount.”

The partners hope this system, which is expected to be completed within a year, proves cost-effective enough to reproduce in future developments. As Vermont attempts to address housing shortages, geothermal systems could keep down both emissions and residents’ energy bills. But the approach has promise beyond local borders, Lunderville said.

“There are a lot of places across the country where we could replicate something just like this,” he said.

A correction was made on April 14, 2026: This story originally misstated how the geothermal access fee would be paid to Vermont Gas. The Champlain Housing Trust, not individual tenants, will pay the fee to the utility. The story was also updated to include the estimated cost of the project for Vermont Gas.

As of January, California requires developers of new multifamily buildings to ensure that residents with parking have access to EV charging at home. It’s one of the most equitable EV-charging policies in the nation, according to climate advocates.

But in a bid to reduce costs for builders, a state lawmaker introduced a bill in February that would waive those requirements for affordable housing construction until at least 2036.

Most households don’t have EVs yet, but the vehicles are growing in popularity, their costs are falling, and local rebates are making them more affordable. Clean-driving proponents say the current state policy, which requires outlets for EVs to plug into, is crucial to ensure that residents of affordable housing units can easily transition to electric cars and reap the benefits.

“California shouldn’t drop back,” said Linda Hutchins-Knowles, co-leader of the nonprofit National Charging Access Coalition. “We have the most expensive cost of living in the country. We need to reduce costs for residents of apartments and condos, especially in affordable housing, by giving them access to the lowest-cost charging for the lowest-cost vehicles, which are used EVs.”

In California, where gas is nearly $6 a gallon, EVs are taking off. They made up nearly one-fifth of new cars sold in the last quarter of 2025. Even given the state’s high electricity prices, EVs can cut the cost of driving in half. And drivers benefit most when they can charge at home: It’s both more convenient and cheaper than using public chargers.

Now, for each residence with parking, affordable housing developers must install one outlet that can provide low-power Level 2 EV charging (20 amps, 240 volts). These outlets deliver a charging speed between what you’d get from a full Level 2 charging outlet (40 amps, 240 volts) and a standard 120-volt outlet.
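The difference between those outlets comes down to volts times amps. A minimal sketch of that arithmetic follows; the 15-amp rating for the standard outlet is an assumption, since the story gives only its voltage.

```python
# Nominal circuit power = volts x amps, for the outlets compared in the story.
# The 15-amp rating for the standard 120-volt outlet is an assumption; the
# story gives only its voltage.

def nominal_kw(volts, amps):
    """Nominal circuit power in kilowatts."""
    return volts * amps / 1000


OUTLETS = {
    "low-power Level 2 (required)": (240, 20),
    "full Level 2": (240, 40),
    "standard outlet (assumed 15 A)": (120, 15),
}

for name, (volts, amps) in OUTLETS.items():
    print(f"{name}: {nominal_kw(volts, amps):.1f} kW")
```

By this measure the required outlet has half the nominal capacity of a full Level 2 circuit. Real-world charging power is usually somewhat lower than these nominal circuit figures.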

Assembly Bill 2748, sponsored by Democratic Assembly Member Sharon Quirk-Silva, would instead allow developers to follow the weaker 2022 building code, which doesn’t require any EV-charging infrastructure for up to 60% of parking spaces. Quirk-Silva did not respond to multiple requests for comment on the bill, which will be heard in committee on April 22.

The state has required new single-family homes, duplexes, and town houses to be built with an outlet for EV charging since the 2016 code. The latest code update “finally extended that courtesy to people who live in apartments,” said Sean Armstrong, managing principal of Redwood Energy, a design firm specializing in net-zero, all-electric affordable housing development.

If passed, AB 2748 could affect millions of Californians who move into affordable housing units constructed in the next decade. By 2030 alone, the state aims to build an additional 1 million units for low-income households.

The California Council for Affordable Housing, an industry trade group that supports waiving the EV-charging requirement, says the bill is necessary to ease economic pressure on developers. “Without this exemption, affordable housing projects, already operating within razor‑thin financial margins, would face substantial and unnecessary cost burdens,” the group wrote in a Feb. 25 post.

The EV-charging requirement does increase project costs — by about $1,000 to $2,500 per unit, said Armstrong, who has consulted on hundreds of housing projects. But these expenses add just 0.2% to 0.5% to the total project cost, he noted.
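Armstrong's two ranges can be checked against each other. The sketch below works out the total per-unit project cost they imply, assuming the low ends of the two ranges pair up and the high ends pair up; that pairing is an inference, not something the story states.

```python
# What total per-unit project cost do Armstrong's two ranges imply?
# Assumption: the low ends pair up, and the high ends pair up.

def implied_total_cost(added_cost, share_percent):
    """Total cost implied when added_cost is share_percent of it."""
    return added_cost * 100 / share_percent


low = implied_total_cost(1_000, 0.2)
high = implied_total_cost(2_500, 0.5)
print(f"low end:  ${low:,.0f} per unit")
print(f"high end: ${high:,.0f} per unit")
```

Both ends point to a total project cost of about $500,000 per unit, which suggests the two ranges are consistent with each other.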

Adding EV-charging outlets after construction is challenging, as it requires digging up concrete, trenching, laying down conduit, and other changes, Hutchins-Knowles said. Plus, retrofits can be several times the cost of up-front installations, according to Peninsula Clean Energy, a public power agency in the San Francisco Bay Area.

The new bill is the latest example of the brewing tension between California’s pro-electrification building standards and its efforts to ease the housing crisis.

Last June, lawmakers passed a housing reform law meant to spur supply. As part of that policy, the state will skip the 2028 building code cycle, ceding the chance to push developers further toward fossil fuel–free buildings. Some legislators said the move would make housing more affordable. But climate advocates said there’s little evidence to back up that claim.

Debate over what services to install in low-income buildings stretches back even further.

Until the mid-1900s, building developers across the country often constructed housing without complete plumbing, including running hot water. People living in these cold-water flats had to heat water on wood- and coal-burning stoves for bathing, cooking, and cleaning. But cities and states eventually decided that hot water was a basic necessity, not a luxury only wealthier homes should have.

“It was a deep inequity that was fixed by building codes,” Hutchins-Knowles said. “No one argues that to make affordable housing less expensive, we should exempt them from providing hot water.”

“Everyone deserves charging at their house,” said Marc Geller, board member of EV-advocacy organization Plug In America.

Hutchins-Knowles predicts that higher gas prices will drive a surge of interest in EVs. As in the past, legislators need to take the long view for low-income renters, she said. “We shouldn’t block out the people who can least afford to pay more for transportation.”

RICHLAND COUNTY, Ohio — In a mostly rural stretch of Ohio nestled between Cleveland and Columbus, residents now have a rare opportunity: They get to vote directly on the future of renewable energy in their area.

Last July, Richland County banned large-scale wind and solar projects in 11 of its 18 townships. The decision not only caught many locals by surprise; it also struck them as bad for economic development and as encroaching on individual property rights.

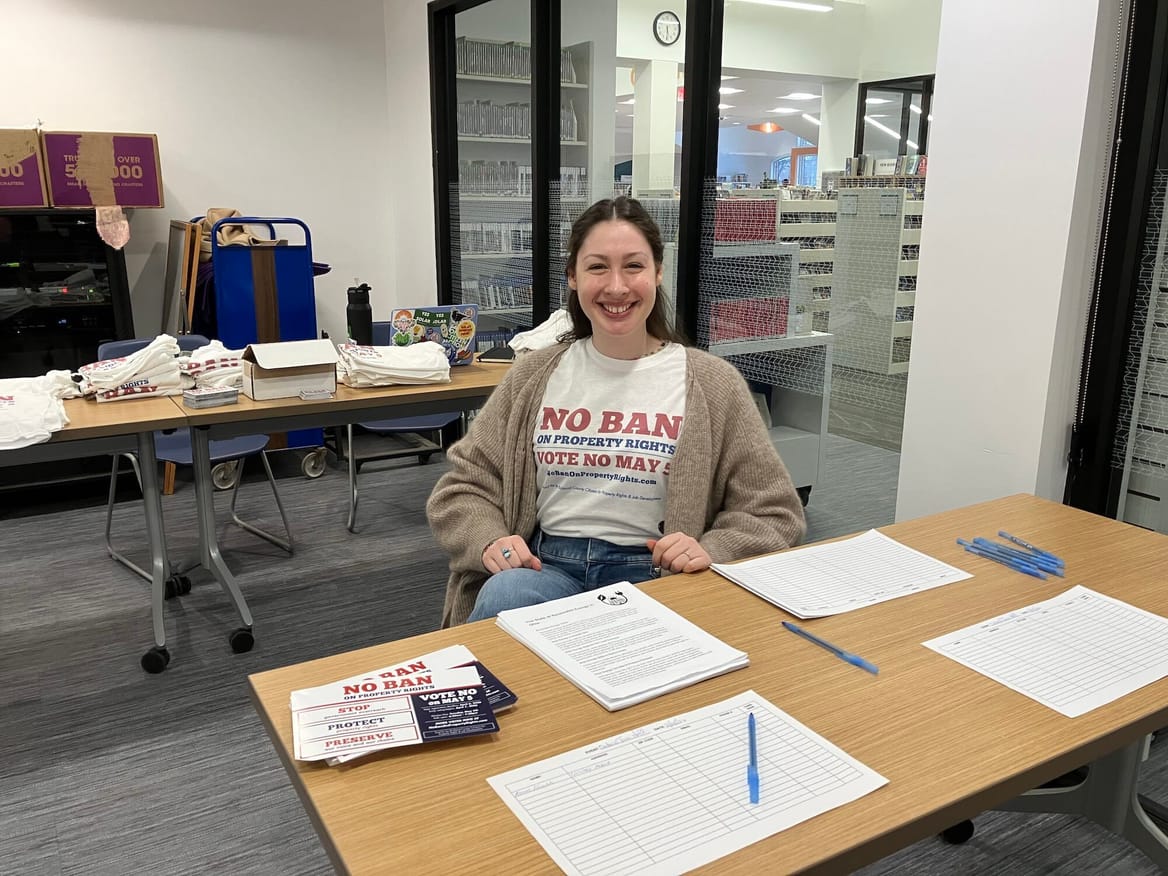

Almost immediately after the county’s three commissioners made their decision, dozens of residents formed a group, called the Richland County Citizens for Property Rights and Job Development, to fight what they saw as an unjust restriction on renewable energy.

Their initial goal was clear but daunting: Collect thousands of in-person signatures within 30 days in order to put the clean energy ban on the ballot during the 2026 primary election. They succeeded.

Before early voting opened last week, the group held several town halls and spent months educating and canvassing voters. Now, their efforts face the final test. By May 5 at 7:30 p.m., every voter in Richland County will be able to weigh in on the question: Should the county keep its ban on most solar and wind farms — or scrap it and give clean energy a chance to be part of the area’s energy mix?

A majority of “yes” votes on the referendum will mean the ban remains. A majority of “no” votes will overturn it. The referendum comes as local restrictions on solar and wind energy have proliferated nationwide, rising by 16% from June 2024 to June 2025. More than 450 counties and municipalities across 44 states now severely limit whether renewables can be built, according to the Sabin Center for Climate Change Law at Columbia University.

In recent years, these rules have been a stumbling block for renewable energy projects, which are needed both to decarbonize the energy system and to meet the nation’s soaring electricity demand. New solar and wind are also among the cheapest forms of energy — a crucial distinction as utility bills rise nationwide.

Restrictions on renewable energy are especially common in rural areas, where the vast majority of the nation’s utility-scale solar and wind projects are located.

Ohio, in particular, is a hot spot for efforts to stymie renewable energy. A 2021 state law, Senate Bill 52, gave counties the right to ban new large solar farms and wind farms of 5 megawatts and up. Roughly three dozen counties now have such restrictions in one or more of their townships.

The Richland County Citizens for Property Rights and Job Development and its supporters would like to see their county removed from that list.

The group reflects the composition of Richland County, with a range of ages, income levels, and professions; many members hadn’t known each other or worked together before last summer. And while some are concerned about climate change and air pollution, the group’s main arguments — evidenced by its name — echo familiar American issues: property rights and job creation.

“I just don’t think it’s right for the county commissioners to tell other property owners that they can’t do what they want with their land,” said Emily Adams, the group’s treasurer. “I have what I want on my roof. And I think farmers and landowners should be able to do what they want with their property, too.”

The effort to overturn Richland County’s ban could empower other communities to push back on similar restrictions, said Shayna Fritz, executive director of the Ohio Conservative Energy Forum, which favors an all-of-the-above energy policy.

“If you gather enough people and you really voice your concerns to them, you have a chance to walk it back,” Fritz said. “This does not have to be permanent.”

Coalition member Brian McPeek, who is the group’s deputy treasurer and also the business manager for the International Brotherhood of Electrical Workers Local 688, hopes that’s the case. Union workers stand to get jobs both from renewable energy projects and from other businesses that may move nearby to take advantage of the clean energy those projects produce.

“I think it’s very important for the nation to see what we’re doing here,” said McPeek, who was among the dozens of local citizens who attended and spoke out at the Richland County Board of Commissioners’ meeting last July, when it voted in favor of the restrictions. “I feel like it kind of flipped the blueprint for what others can do if their commissioners do the same thing. We needn’t close off the county for development.”

Richland County’s ban originated in Sharon Township, an area of approximately 9,000 people in the northwestern part of the county.

In January 2025, the township’s zoning board members requested that the commissioners impose a ban there. The following month, the commissioners asked all 18 townships in Richland County if they also wanted to prohibit renewables. (The county’s authority under SB 52 doesn’t extend to its nearly half dozen villages and cities.)

More specifically, the commissioners sent a fill-in-the-blanks resolution to ban solar and wind development to the township trustees. Trustees simply had to add names and dates and put marks on a few lines to sign on to the restriction.

Eleven townships’ trustees ultimately sent back filled-out resolutions asking the board of county commissioners to institute a blanket prohibition in their townships.

So, “that’s exactly what we did,” Commissioner Darrell Banks said.

The three county commissioners did not consult with the general public during this time, according to opponents of the ban. Few people knew their township trustees had even considered the issue until last summer, when it appeared on the agenda of the July 17 commissioners’ meeting.

Dozens opposing the ban showed up to that meeting, held on a weekday morning, to speak out. Still, the commissioners voted unanimously to adopt the ban for those 11 townships. Rose Feagin, a council member for the city of Ontario who opposes the ban, expressed disappointment with the way the commissioners went about the process.

“Other avenues would have been a better way to get input from people, and from across the board, not just a couple of people in a bubble or in a boardroom somewhere making decisions for other people’s lives,” Feagin said.

Under SB 52, county-level bans on renewable energy can be challenged via referendum — so long as enough local residents support a ballot measure. But the law gives groups only 30 days to get enough signatures on petitions.

By the Aug. 18, 2025, deadline, the coalition had managed to collect thousands of signatures, and on Sept. 3 the Richland County Board of Elections ruled that the petition had cleared the threshold required to put the ban on the ballot.

It’s only the second time a county-level restriction on renewable energy has been challenged via referendum under SB 52.

In 2022, Crawford County commissioners blocked Apex Clean Energy from developing the 300-MW project Honey Creek Wind. A field manager for the company then helped lead the campaign to put it before voters, but ultimately that referendum failed.

At this time, no company is looking to develop a large solar or wind project in Richland County, noted Nolan Rutschilling, managing director of energy policy for the Ohio Environmental Council.

So, the Richland County ballot measure isn’t spearheaded by a company looking to profit from a particular project. Rather, it’s the work of citizens who want to preserve possibilities for the future — and restore the right to consider opportunities on a case-by-case basis.

In the lead-up to the election, the Richland County Citizens for Property Rights and Job Development has been using a slogan meant to win over their neighbors: “No Ban on Property Rights.”

Dan Fletcher, a Madison Township trustee who isn’t actively involved in the referendum campaign, said he knows how he plans to vote: “Taking the rights away from the property owner? That’s wrong in my opinion.”

Richland County is a farming powerhouse. More than 120,000 acres of cropland stretch across nearly 500 square miles. Farmers here mostly grow soybeans and corn, and to a lesser degree, forage, wheat, and other crops. The county also ranks among the top fifth of the nation’s leading producers of poultry, livestock, and other animal products.

The region’s agricultural character is the main focus of the campaign to keep the ban in place, run by a group named Richland Farmland Preservation.

The group’s website calls for farmland preservation and “commonsense limits” on solar and wind. It also includes a badge of endorsement from the Richland County Republican Party, which might go a long way in a county that went heavily for Trump in the last presidential election.

Banks, the county commissioner, is on the advisory committee for Richland Farmland Preservation. Other members include Richland County Prosecutor Jodie Schumacher and a trustee from each of the townships of Sharon, Blooming Grove, and Jefferson.

The group may have links to The Empowerment Alliance, a nationwide pro–natural gas organization that has been an impetus behind bills and resolutions labeling the fossil fuel as “green energy.”

A filing with the Richland County Board of Elections identifies the treasurer for Richland Farmland Preservation as Dustin McIntyre, with an address for a building with several offices in Bellville. But VoterRecords.com does not note any Dustin McIntyre in Richland County, nor does Whitepages.com show him living there.

Federal Election Commission data does list a Dustin McIntyre with an address in Virginia as treasurer for multiple super PACs, including the Affordable Energy Fund PAC. That group was set up by The Empowerment Alliance in 2021.

The alliance began as a project of former Ariel Corp. chair Karen Buchwald Wright and her husband, Tom Rastin, who was also an executive there. Headquartered in Mount Vernon, Ohio, Ariel makes compressors for the oil and gas industry.

The Richland Farmland Preservation website also features anti–renewable energy talking points espoused by The Empowerment Alliance and other groups, including a variation of a graphic used by The Empowerment Alliance that implies gas-fired power plants should be favored over solar because of their smaller land footprint. (The illustration ignores the large swaths of land needed for drilling and pipelines, as well as pollution.)

Neither McIntyre nor Richland Farmland Preservation responded to Canary Media’s emails or calls.

The No Ban on Property Rights campaign held a fundraiser in February, and its volunteers have been distributing lawn signs, door hangers, and brochures. Volunteers with the nonprofit Ohio Citizen Action have also been helping with efforts to raise awareness and get out the vote.

As to whether the Richland Farmland Preservation group was mobilizing in a similar way, Banks told Canary Media he didn’t expect it to hold a general fundraiser. Instead, he noted that they planned to “call a few people.” Without saying who, he said, “There’s some people who will put some money towards this.”

Nonetheless, the push to preserve the renewable energy ban is tapping into real anxieties about ceding land to non-farming uses.

“We’re seeing more and more farmlands being used up for developments, and we want to keep them as farmlands,” said John Jaholnycky, who previously worked for natural gas and electric companies and is now a trustee for Mifflin Township, which opted for the ban.

In Jaholnycky’s view, solar should go on buildings and over parking lots. “I think it’s kind of shortsighted that we want to use up all of this farmland to put these solar panels up.”

Richland County Commissioner Cliff Mears pointed out that the city of Mansfield plans to add a solar farm at the site of a former landfill. But he added, “We feel that farmland overall should remain farmland.”

Still, blocking renewables won’t necessarily preserve farmland. In fact, urban and suburban development has been the major threat over the past several decades.

From 2002 through 2022, Ohio lost over 930,000 acres of farmland. Researchers at The Ohio State University reported last year that most of that loss occurred around metropolitan areas, where urban and suburban sprawl was extending into formerly rural areas. The number of acres for certified and planned utility-scale solar projects, meanwhile, is about one-tenth that amount.

Data centers are also a growing concern, with roughly 200 already in the state, and plans for another 100 or so.

For farmers, leasing their land for renewable energy can supplement income and actually let them keep the land in their families.

“The alternative is that [landowners] will sell it for development or data centers or something,” said Annette McCormick, a county resident and opponent of the prohibition.

Nor are renewables necessarily incompatible with farmland preservation.

Agrivoltaics uses land under and around solar panels for grazing sheep or growing forage or other crops. “There’s a lot of opportunities for farming” amid clean energy installations, McCormick said. “Maybe just not think about corn and soybeans all the time” as the only farming options.

Permit restrictions also generally require renewable energy companies to restore agricultural land when projects finish using it.

Both Banks and Mears criticized SB 52’s provision that lets all voters in the county — not just those in the relevant townships — sign a referendum petition and then vote on the issue. “It has nothing to do with anybody in the cities or villages,” Mears said. In his view, voters “should have some skin in the game.”

That arrangement was once on the table. An earlier version of SB 52 would have given each township the authority to ban solar and wind and then left any decisions on referendums solely up to its own voters. Ultimately, however, the law put the decision to enact prohibitions — and the rights of voters to seek their reversal — at the county level.

“Every voter in Richland County should have a voice on this important issue because it’s a countywide policy,” said Jen Miller, executive director of the League of Women Voters of Ohio, who grew up in Richland County. Although the commissioners chose to defer to trustees in individual townships, “it is the role of county commissioners to represent every voter and to hear from every voter.”

Former Richland County Commissioner Gary Utt agreed: “It’s a county issue. Let the people decide.”

Energy costs are also a big issue this year, not just in Richland County but across the state. Utility bills are rising for all customers as electricity demand surges in Ohio, especially with the proliferation of data centers and growth in electrification. Solar power can come onto the grid faster than other sources. Adding more generation quickly could ease the supply crunch, and clean energy could help protect residents from the volatility of fossil fuel prices.

“That affects all of us — not just countywide, but statewide also,” said Christina O’Millian, a volunteer who worked on last year’s campaign to get the issue on the ballot.

Because SB 52’s hurdles apply only to solar and wind farms, it’s “picking winners and losers in what should be a free market,” said Fritz of the Ohio Conservative Energy Forum.

For McPeek, the electrical union business manager, blocking renewables also means fewer jobs for himself and other IBEW members throughout the county.

“Historically, communities that sort of close themselves off often see investment and innovation going elsewhere,” he said.

Even if residents defeat the ban, it doesn’t mean that any large solar or wind projects will be built in Richland County.

“It just restores the right of a project to be considered,” McPeek said. “There are a lot of hurdles that they have to jump through.”

In unincorporated areas without any ban, SB 52 still lets county commissioners review almost all new large-scale solar and wind farms of 5 MW or more before developers can even file a permit application with the Ohio Power Siting Board.

The law gives commissioners 90 days in which they can prohibit a project, change its footprint, or do nothing. No action means a company can then file its application with the siting board, provided the developer has also complied with additional notice and public meeting requirements.

If a company does get to file an application for a solar or wind farm with the siting board, SB 52 then calls for two ad hoc representatives of counties and townships where the development would be located. Those individuals take part in the case as voting members. Any project also must satisfy a long list of other requirements before the siting board grants its approval to move ahead.

Even for projects that have otherwise met all legal criteria, the siting board sometimes simply defers to local government opposition to conclude they are not in the “public interest” — a stance that is currently under review by the Ohio Supreme Court.

Ultimately, it may take a repeal of SB 52 and some other legal changes to put all types of energy generation on an equal footing when it comes to siting and permitting.

But for now, advocates for a “no” vote on Richland County’s ballot issue are focused on what they can most immediately control: defeating a ban that makes solar and wind a nonstarter from the get-go.

“I want to make my children proud,” said Morgan Carroll, a Shelby resident who urges people to vote no. “I want to say that we tried to help them with their energy costs in the future, help the future of clean energy in the county.”


After taking a beating for the first year of the Trump administration, the beleaguered wind energy industry may finally see a glimmer of hope.

President Donald Trump and Interior Department chief Doug Burgum have spent months in an all-out assault against the technology, and in particular against offshore wind projects in federal waters. They have frozen all new leases, repealed clean energy tax credits, and even paid off an oil company to not build a planned wind project. The most dramatic move came in December, when Burgum paused work on five under-construction wind farms on “national security” grounds.

The developers of these five projects — two off the Massachusetts coastline, two south of Long Island, and one off the coast of Virginia — sued over the stop-work orders, and a series of federal judges soon issued injunctions against the Interior Department’s interventions.

Burgum had vowed to fight back, but last week, the department quietly let the final deadline for appealing the courts’ decisions lapse. The move means construction of the nation’s first five major wind farms along the eastern seaboard can continue absent a change in the case. When complete, the wind farms will generate enough electricity to power well over 2 million homes.

The lack of appeals likely represents a recognition that the government couldn’t stop the five projects from moving forward, said Tony Irish, who served as an Interior Department lawyer for decades before leaving in 2025.

“If the actual reason behind the stop-work orders was legitimately founded in national security, I would be very surprised by the lack of appeal,” he said. “So I think the lack of appeal is telling in that regard.”

Developers of the five major wind projects haven’t wasted time, with several of the projects already producing power. Revolution Wind, a project from Danish company Ørsted, delivered its first electricity to the New England grid in mid-March. Coastal Virginia Offshore Wind, a project from the Virginia utility Dominion, is about 70 percent complete and also delivered its first electricity last month. The farthest-along project, Vineyard Wind, produced a massive amount of electricity earlier this year during Winter Storm Fern when other power resources were offline.

The lack of appeals could be good news for future wind projects as well. A bipartisan group of senators has been debating a long-delayed “permitting reform” bill for months. The bill would speed up environmental review for critical energy projects, make it easier to build interstate transmission lines, and protect clean energy permits from federal interventions like those of the Trump administration. (It would also likely afford the same protections to oil and gas projects such as the Keystone XL pipeline, which President Joe Biden scrapped after taking office in 2021.)

Those bipartisan talks broke down after Burgum’s stop-work orders. Senator Sheldon Whitehouse, a Democrat from Rhode Island who is leading the talks, told congressional Republicans and the Trump administration that the talks would resume only if the Interior Department declined to appeal the court injunctions for the offshore wind projects. Senate Democrats are also hoping to see Burgum advance solar projects on federal lands.

A potential thaw on offshore wind might benefit the president as he tries to manage the fallout from the Iran war, which has sent gasoline prices soaring and contributed to fears of an energy shortage around the world. The White House’s “energy dominance council” has begun participating in the congressional permitting talks.

“There’s a confluence of market realities that make this a particularly hopeful year for us,” said Chris Phalen, vice president of domestic policy at the National Association of Manufacturers, in an interview with Bloomberg Government. Proponents of permitting legislation stressed that the next few months before the midterm election season are pivotal for achieving a deal.

A broader set of reforms to the National Environmental Policy Act, the nation’s bedrock environmental permitting law, would be controversial, but research shows that it might accelerate the deployment of onshore wind energy: A recent survey of around 50 renewable developers found that around 80% of them had selected a project site so as to avoid the federal environmental permitting process.

Other developers reported that reviews for historical artifacts and endangered species can add months or years to project timelines, and that the reviews may have held up at least 11 gigawatts of energy, or enough to power almost 5 million homes. A reform effort, likely modeled on the House-passed “SPEED Act,” would aim to shorten review timelines and limit litigation. (The environmental review for the five in-progress offshore wind projects took multiple years, even under the wind-friendly Biden administration.)

“Bipartisan permitting reform is the next critical step,” said Liz Burdock, the CEO of the Oceantic Network, a trade group that advocates for offshore wind. She added that ease of permitting could enable millions more homes’ worth of new wind development, but warned that “without a predictable path to build, manufacturers, shipyards, and skilled workers are forced to sit idle, creating gaps that raise costs and delay benefits for millions of ratepayers.”

It’s not an easy moment for renewable energy in the U.S., but the sector is still setting new records.

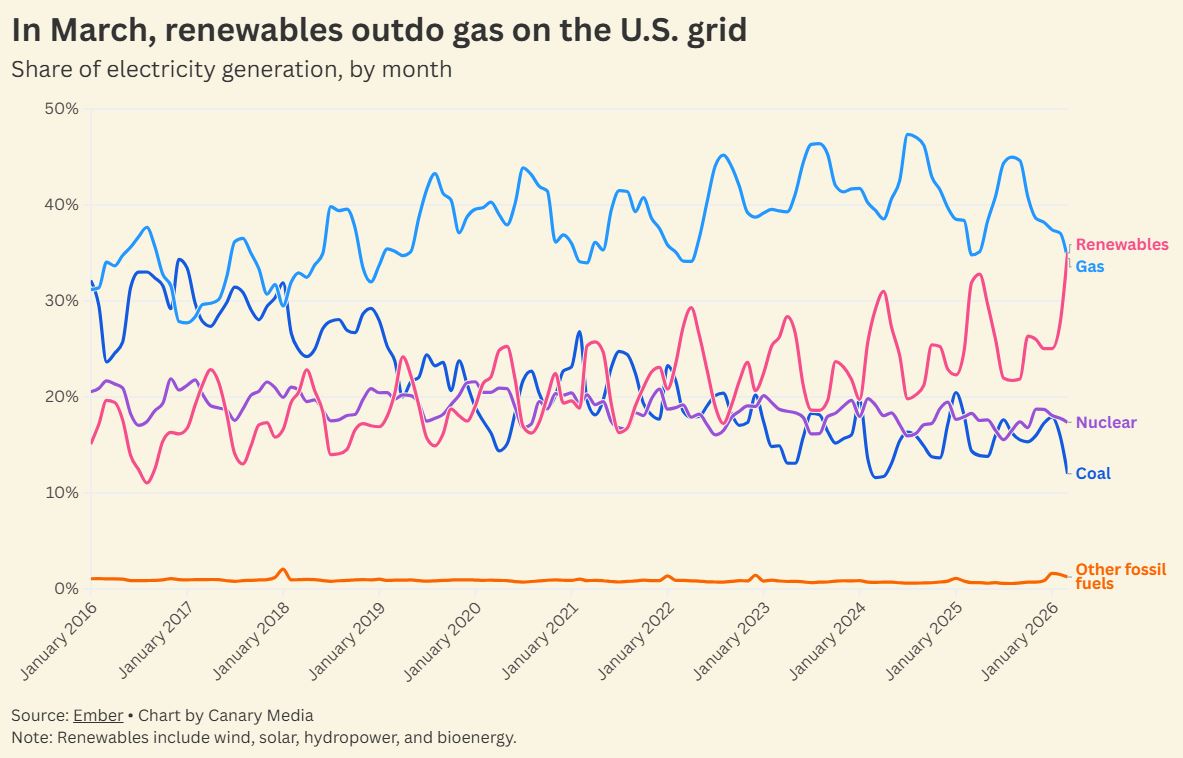

Just look at what happened last month: Over the course of March, the nation got more electricity from renewables than it did from natural gas, which is typically the single-largest source of energy on the U.S. grid.

It’s the first time renewables have bested the fossil fuel in the U.S. across an entire month, per data pulled from the think tank Ember. Meanwhile, emissions-free sources, a category that includes both renewables and nuclear, produced more than half of the nation’s electricity. It’s just the third time that’s happened across an entire month, the first instance being last March.

Sure, renewables only beat gas across a short time frame. And, yes, March is the start of the spring shoulder season, when electricity demand falls a bit from its winter highs and renewables tend to outperform.

But it’s a major milestone despite these caveats. Just five years ago, the gap between gas and even the best months for renewables was yawning. Since then, that gap has narrowed, thanks in large part to the rapid expansion of solar and the steady growth of wind power. Hydropower, bioenergy, and other sources of renewable energy have seen their combined share of electricity production slowly decline over the same time period.

Renewables have crossed this threshold amid serious political pushback. The Trump administration has relentlessly attacked the sector — especially wind — over the last year and change. Its policy shifts are likely to result in fewer new solar and wind farms over the medium term, but in the short term, they haven’t really derailed the growth of clean energy. In fact, March was the best-ever month for wind in terms of electricity output.

But perhaps more impressive is that renewables are growing their market share while overall electricity demand climbs. Put simply, clean energy is taking a bigger slice of a growing pie.

Gas power plants, for their part, remain difficult to build due to supply chain bottlenecks. Meanwhile, solar, batteries, and wind together will once again make up the overwhelming majority of new energy capacity added to the grid this year. The same was true last year. And the year before. And the year before that…

Even as the Trump administration creates obstacles to building renewables, a key pair of facts will hold: The U.S. needs more electricity, and renewables are the easiest way to get it. In other words, don’t expect this to be the last month in which renewables conquer gas.

There’s nothing like a common enemy to bring people together. This midterm election year, that enemy may be data centers.

As AI grows more powerful and more popular, tech companies are rushing to build facilities that house all that computing capability — and to secure tons of power to run them. But no one knows exactly how many of those data centers will get constructed, and how much electricity they’ll need. That’s a problem for utility customers, who may be saddled with the costs and climate impacts of an unnecessary gas power and grid infrastructure buildout.

Some states are tackling the problem with what are known as large-load tariffs: essentially, special rates and requirements that force big power users to shoulder the costs of grid buildouts. But this week, a small city in Wisconsin put its foot down. Port Washington, a suburb of Milwaukee, voted by a roughly 2-to-1 margin to require that city leaders get voter approval before awarding tax breaks to data centers and other large development projects. It’s a clear response to the $15 billion OpenAI and Oracle megaproject that’s being built in the city, though this newly approved measure comes too late to affect that project.

At the federal level, Democrats have spearheaded most of the campaigns against data centers, with Sen. Bernie Sanders (I-Vt.) and Rep. Alexandria Ocasio-Cortez (D-N.Y.) even proposing a nationwide moratorium. But Port Washington is part of Ozaukee County, which has voted for Republicans over the past 20-plus years of elections.

Also this week, residents in Festus, Missouri, voted to oust every incumbent on their City Council, in large part because the decision-makers had approved a controversial $6 billion data center in the area. Jefferson County, where the city is situated, voted overwhelmingly for Republicans in the 2024 elections.

Indianapolis, meanwhile, saw a more violent reaction: Someone fired over a dozen bullets at City Council member Ron Gibson’s home on Monday, and left a note reading “No Data Centers” on the legislator’s doorstep. Gibson is a Democrat who has publicly supported a data center project in the city.

Across the U.S., more data center questions are on the ballot. Residents in Monterey Park, California, will determine in June whether to completely ban construction of the facilities. In the fall, Boulder City, Nevada, will vote on whether a municipally owned plot should host a data center, and residents in Janesville, Wisconsin, will decide whether to add more hurdles to a project turning a former General Motors plant into a data center.

At least 11 states are also considering legislation that would pause new data center construction, with a bill in Maine likely to be the first to become law.

City-level data center restrictions like Port Washington’s certainly don’t have the heft of state-level bans or even a nationwide moratorium, as far-fetched as its passage may be. But they do show that data center opposition is on the rise in every nook and cranny of the country — and it may have a massive influence on more than just local elections this November.

Clean Energy Team dominates Arizona utility election

Arizona voters this week selected a slate of candidates known as the Clean Energy Team to run the state’s largest public utility, the Salt River Project.

The winners may have Turning Point USA, the Charlie Kirk–founded conservative organization, to thank. The SRP delivers power and water to more than 1 million customers in the Phoenix area, and its leaders are chosen through an unusual election in which the number of acres a customer owns determines how many votes they can cast. These races typically don’t get much turnout, but this year, Turning Point stepped in to endorse candidates who supported converting retiring coal plants to gas. That prompted clean energy advocates, including the Sierra Club and actor and advocate Jane Fonda, to get involved.

All that attention definitely juiced turnout: This year’s election saw four times as many ballots as 2024’s, The New York Times reports. Two Turning Point–backed candidates did win races for SRP’s board presidency and vice presidency. But candidates who support clean energy swept the remaining races to take majority control of the board and double their representation on SRP’s advisory council.

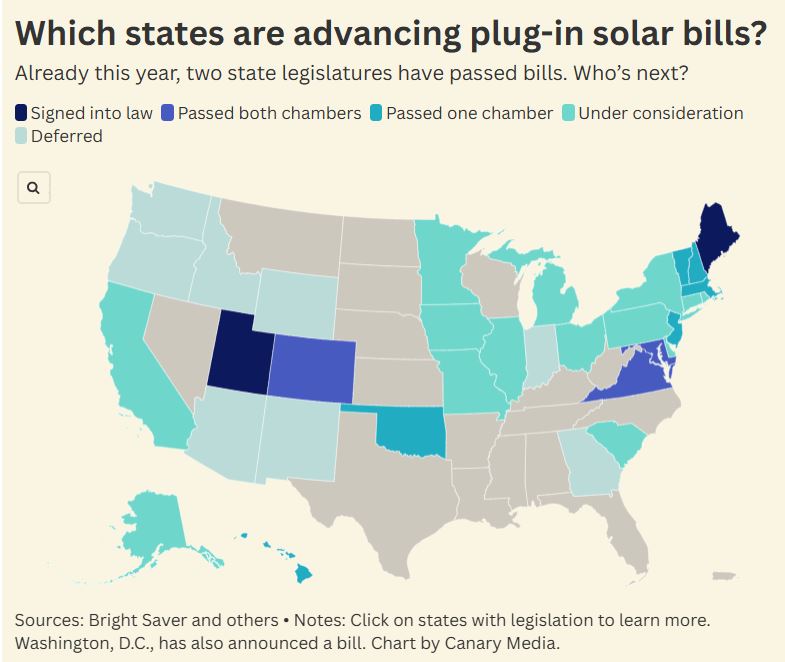

Here’s where balcony solar is taking off

You’ve probably noticed that electricity bills are on the rise. If only curbing them were as easy as buying a portable solar panel and plugging it into your wall to generate your own clean power.

In two states, it pretty much is. This week, Maine joined Utah to become the second state to legalize the use of “balcony solar” panels, which can be plugged into standard outlets to help offset homeowners’ power usage. Other states may soon join them: Canary Media’s Sarah Shemkus has put together a map showing where bills to legalize balcony solar are in the works, with some needing only a governor’s signature to become law.

Whale, whale, whale: The Trump administration struck down Endangered Species Act protections for whales to unleash oil and gas development in the Gulf of Mexico — even though it has used unfounded claims about offshore wind’s risks to the creatures as an excuse to undermine that industry. (Canary Media)

Ceasefire’s energy impacts: Oil prices have fallen since the announcement of a two-week ceasefire between the U.S. and Iran, which analysts say may quickly translate into gasoline price relief for Americans. (The Hill, Axios)

New Jersey goes nuclear: New Jersey lifts its de facto ban on nuclear power construction, becoming the sixth state to reauthorize reactor development in the past decade and the second, after Illinois, to do so this year. (Canary Media)

Winds of change: The U.S. Interior Department quietly fails to appeal court decisions that allowed work to restart on offshore wind projects halted by the administration, an omission that experts say could be an early sign of hope for the industry. (Grist)

Digging clean heat: A geothermal heat pump system that’s heating and cooling a church just outside New York City could pave the way for similar projects in dense urban areas. (Inside Climate News)

Solar soars: A federal report shows rooftop solar now accounts for 20% of Puerto Rico’s energy production, surpassing natural gas to become the island’s second-largest generation source, behind petroleum liquids. (Utility Dive)

Renewables reign: In March, renewables generated more power than gas across a whole month, marking a first for the U.S. grid. (Canary Media)

Fervo Energy, the leading next-generation geothermal startup, is ramping up plans to build out new power plants.

The Houston-based company has signed a three-year binding agreement with Turboden America, which will supply 1.75 gigawatts of organic Rankine cycle turbine capacity for Fervo’s forthcoming geothermal projects in the United States. The startup will use the equipment to convert heat pulled from deep underground into carbon-free electricity for data centers and the grid.

Fervo, which is reportedly preparing for an IPO, is currently building the first 100 megawatts of its 500-MW Cape Station in Beaver County, Utah. The project, which will be the world’s largest enhanced geothermal system, is slated to start producing power later this year.

Turboden America is already supplying over half of Cape Station’s total turbine capacity. The company, a subsidiary of the Italian manufacturer Turboden, says it will expand its U.S. operations to fulfill the deal, which calls for nearly three dozen 50-MW power-plant units.

The agreement, announced Tuesday, sheds more light on Fervo’s development plans beyond Cape Station, which broke ground about two and a half years ago.

Fervo declined to share specific details about where and when it intends to deploy the new units. However, the company has “multiple projects in various stages of progress” and is pursuing “multi-year, multi-gigawatt offtake partnerships with both utilities and hyperscalers,” Sarah Jewett, Fervo’s senior vice president of strategy, told Canary Media in an email.

She added that the Cape Station site has an estimated 4.3 GW of capacity potential, based on internal and independent estimates. Fervo is also developing an enhanced geothermal system in Nevada, called Corsac Station, which is set to supply 115 MW of electricity to Google and the utility NV Energy.

This week’s development with Turboden “helps streamline project execution and accelerate deployment as our project pipeline advances,” Jewett said.

Together, Cape Station and the new turbines represent over 2.2 GW in geothermal power capacity. If completed and brought online, that amount would be equal to more than 50% of the current installed capacity of U.S. geothermal plants — which provide less than 1% of the country’s total electricity generation. Virtually all those existing plants rely on conventional hydrothermal resources, such as geysers and hot springs.
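The capacity figures above can be cross-checked with simple arithmetic. The short sketch below uses only numbers reported in this story (the 1.75-GW turbine agreement, the 50-MW unit size, and Cape Station's planned 500 MW). The variable names are illustrative, and it assumes the whole order is delivered as 50-MW units.

```python
# Figures reported in the article; variable names are illustrative only.
TURBINE_ORDER_MW = 1_750   # 1.75 GW covered by the Turboden agreement
UNIT_SIZE_MW = 50          # size of each power-plant unit
CAPE_STATION_MW = 500      # planned full capacity of Cape Station

# Assumption: the entire order is filled with 50-MW units.
units = TURBINE_ORDER_MW // UNIT_SIZE_MW

# Combined capacity of Cape Station plus the newly ordered turbines, in GW.
combined_gw = (CAPE_STATION_MW + TURBINE_ORDER_MW) / 1_000

print(f"{units} units")               # consistent with "nearly three dozen"
print(f"{combined_gw:.2f} GW total")  # consistent with "over 2.2 GW"
```

Under those assumptions, the order works out to 35 units and a combined 2.25 GW, which matches the rounded figures in the text.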

“Geothermal energy will be essential in stabilizing a strained power grid with clean, firm energy, and Fervo has shown strong leadership in advancing the sector,” Paolo Bertuzzi, president of Turboden America and CEO of Turboden, said in a statement. “With this announcement, we are prepared to scale delivery in the U.S. market and add megawatts of new generation wherever and however they are required.”

In signing the deal, Fervo and Turboden are aiming to avoid a potential bottleneck that threatens to slow the larger buildout of next-generation geothermal: the power-plant supply chain.

Today, the global market for organic Rankine cycle systems, heat exchangers, and other components is concentrated among a small set of manufacturers based in Israel, Turkey, and parts of Europe. Until very recently, those companies had little reason to scale production or revamp designs, given the sector’s limited growth. Most geothermal equipment is highly customized, and it can take over 18 months to bring it stateside.

“The ORC market has always been a very niche market and quite stable in the past,” Bertuzzi told Canary Media in an earlier interview.

But recent U.S. innovations in geothermal technology are making it possible to harness Earth’s heat from a wider range of places than conventional geothermal plants can reach. For instance, Fervo’s Cape Station uses horizontal drilling techniques and fiber-optic sensing tools to fracture hard, impermeable rocks and create artificial reservoirs. The startups Sage Geosystems and Quaise Energy are taking a similar approach, while companies like Rodatherm Energy and XGS Energy are building novel closed-loop systems deep underground.

Turboden, which is owned by Mitsubishi Heavy Industries, said it can presently deliver about 20 of its 50-MW turbine units per year. Nearly half of its global business is from the geothermal industry. The rest is from biomass-burning power plants as well as industrial facilities that use waste heat to generate electricity, such as data centers and gas-compressor stations.

The manufacturer is now set to scale production in both Italy and the United States in order to meet the growing demand from next-generation geothermal developers like Fervo. In an email, Turboden said it is adopting “multiple business and procurement models … to ensure larger volumes and faster delivery times, including domestic content to support tax credit mechanisms for American customers.”

A community north of San Diego has blocked a major grid battery that a developer had hoped to build in a residential area near a major hospital.

Independent power producer AES Corp. withdrew its application to develop the Seguro battery system in Escondido, 30 miles from San Diego. The company had intended to fill a former horse ranch with 320 megawatts of battery containers, which would have been one of the most powerful stand-alone energy storage facilities in the country. The facility would have strengthened the Southern California grid late in the day, when solar generation fades and home consumption surges, pushing the state forward on its quest to produce 100% clean electricity by 2045.

But the development ran into a barrage of local opposition, as residents decried adding large-scale power infrastructure so close to homes and 1,600 feet from Palomar Medical Center Escondido, especially given the multiday, high-octane fires at other large batteries in the state. The outcome was a high-profile win for local battery opponents, and a warning sign to developers in famously pro-battery California.

AES said in a statement that it would prioritize other development efforts, but remained “committed to advancing projects that can provide the safe, reliable, and affordable power needed to strengthen the region’s electric grid and generate meaningful economic benefits locally.”

JP Theberge, a board member of the Elfin Forest Harmony Grove Town Council who rallied resistance to the battery, framed his neighbors as a victorious David smiting a corporate Goliath.

“The forces of greed are very powerful in this world. The only way to stop them is to be united and determined and forceful,” he wrote in a post on the Stop Seguro Facebook group. “This was a great win and we should all be proud of our efforts.”

AES is, to be sure, a profit-driven company, but the vast majority of U.S. energy infrastructure is built and operated by for-profit entities. What AES hoped to profit from was delivering a large amount of emissions-free grid capacity at a time when California desperately needs more of it — and when the nation as a whole is grasping for more power.

Opponents also called out the risks of large agglomerations of lithium-ion batteries, which have in certain circumstances gone into “thermal runaway,” when cells heat up and kick off ferocious blazes that spread to all the batteries within reach.

Escondido has experience with batteries. AES built a large-scale lithium-ion battery in 2017 that utility San Diego Gas & Electric owns and operates on its substation property. One battery container at that site caught fire in 2024 and prompted local evacuation orders that were lifted two days later. Two other California batteries loom even larger in the minds of the Stop Seguro advocates: An Otay Mesa battery, also in San Diego County, caught fire and then smoldered for 17 days in 2024, destroying one section of a larger facility. A Moss Landing project had a string of small fires, followed by a catastrophic one in January 2025, which forced the evacuation of the surrounding community.

Crucially, those two fires involved older models of battery cells, and a now-outdated design that packed them into buildings, which was like piling dry timber ahead of lighting a spark. Since then, battery cell technology has improved, and the industry has abandoned storing batteries in large buildings in favor of compartmentalized containers spread across a site. These designs are tested to make sure that a fire in one unit cannot reach surrounding units. Thus, a really bad failure could burn up one container but not produce the kind of dayslong conflagration seen in the highest-profile battery fires.

That’s where separating fact from fearmongering can get tricky. Otay Mesa and Moss Landing were approved by the necessary authorities, and then combusted in catastrophic fashion. Any community would be justified in wanting to prevent something like that from happening. At the same time, the physical causes of those massive blazes simply don’t exist in the battery projects that are getting built today.

Even so, Seguro would have pushed the boundaries in terms of proximity to homes and to the hospital. If one container started smoking, that could be enough to force the hospital’s patients and staff to either evacuate or shelter in place. This fact became pertinent to the case, because AES needed the hospital to sign off on running high-voltage wires through its property, which the hospital board voted down in 2024.

Generally speaking, though, recent battery failures have not put a stop to new battery construction.

Elsewhere in California, developer Arevon broke ground this year on a 250-megawatt battery in Daly City, just south of San Francisco. The battery fires in other parts of the state did not stop the company from securing permits and easements to connect to a nearby substation. That project will inject $73 million into the local tax base, and give San Francisco a major new source of on-demand power to carry it through heat waves and other moments of stress for the grid.

Indeed, batteries continue to set records for participation in California’s energy system. On a given spring day, they show up in force in the evening hours, displacing expensive, more polluting gas plants by shifting the day’s solar production to meet the hours of highest demand. On March 29 at 7 p.m., for instance, batteries delivered a record 44% of total electricity demand, with more than 12 gigawatts injected into the grid.

A clarification was made on April 9, 2026: This story was updated to clarify that the battery AES built at the utility substation has been owned and operated by San Diego Gas & Electric since 2017.

Xcel Energy in Minnesota is poised to become the first utility in the nation to build and operate its own virtual power plant.

For the past six months, fans and foes have debated the novel plan, which will see Xcel deploy hundreds of megawatts of small-scale batteries at customer sites across its territory. The Minnesota Public Utilities Commission ultimately approved a version of Xcel’s plan last week.

Under the new program, known as Capacity*Connect, Xcel will spend up to $430 million to deploy up to 200 megawatts of batteries, in 1-megawatt to 3-megawatt increments, over the next two years. It’s a rare arrangement: Almost every other virtual power plant program in the U.S. is organized around third-party companies, like solar and battery vendors or specialized “aggregators,” that tap into energy resources installed and owned by customers.
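For a rough sense of scale, the program's caps can be turned into a per-kilowatt figure and a range of site counts. This is a back-of-the-envelope sketch, not Xcel's own accounting: it assumes both "up to" limits are reached in full and that every site falls within the stated 1-MW to 3-MW increments. The variable names are illustrative.

```python
# Program caps reported in the article; variable names are illustrative only.
BUDGET_USD = 430_000_000          # spending cap ("up to $430 million")
TOTAL_MW = 200                    # capacity cap ("up to 200 megawatts")
MIN_SITE_MW, MAX_SITE_MW = 1, 3   # size range of each battery increment

# Assumption: both caps are fully used, so this is only an indicative ratio.
cost_per_kw = BUDGET_USD / (TOTAL_MW * 1_000)

# Site counts if every battery were the smallest or the largest size.
max_sites = TOTAL_MW // MIN_SITE_MW
min_sites = -(-TOTAL_MW // MAX_SITE_MW)  # ceiling division

print(f"${cost_per_kw:,.0f} per kW")
print(f"{min_sites} to {max_sites} sites")
```

Under those assumptions, the caps imply roughly $2,150 per kilowatt and somewhere between 67 and 200 battery sites. Actual spending and deployment could come in below either cap.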

VPPs, which aggregate distributed energy resources to mimic the output of a traditional power plant, are seen as a key way to get more energy onto the existing grid. By using customer-owned energy resources or small-scale batteries, VPPs can help utilities reduce the need to build or dispatch expensive power plants.

But utilities have been slow to embrace VPPs. In particular, they’ve struggled to use VPPs to avoid grid investments, which have become a key driver of rising electricity costs. Utilities are leery of relying on technologies in customers’ homes instead of equipment they control. And utilities earn guaranteed profits for investments in their grids, giving them an incentive to resist examining cheaper alternatives.

Supporters of Xcel’s VPP program say it could finally provide a durable model for utilities to use distributed energy resources to defer costly grid investments and to more fully utilize the existing grid.

For one, the structure gives Xcel an economic incentive to recoup its investment. But more important, it requires Xcel to establish a metric to assess the value that distributed energy resources bring to the grid — something utilities have historically struggled to measure. If Xcel can create a template, then it will have removed a major stumbling block for broad adoption of VPPs.

“Putting a value on DERs of different types and capabilities to avoid or defer distribution upgrades is a real opportunity — and it’s really hard,” said Will Kenworthy, Midwest regulatory director for the nonprofit Vote Solar. “Xcel has said, ‘We need to put a value on this.’ And the way this program is set up, they have an interest in getting that right in a way they never have before.”

That’s not to say supporters think Xcel’s Capacity*Connect program should be the only VPP option in Minnesota. Many, including Vote Solar, have pushed for the utility to allow third-party companies to participate in the program. Some have expressed disappointment that the commission failed to do so, and there’s still no way for solar installers, battery vendors, and demand-response aggregators to enlist their own customers to help the grid in Xcel’s Minnesota territory.

And plenty of industry groups were outright opposed to the commission’s decision last week. The Minnesota Solar Energy Industries Association, Solar Energy Industries Association, and Coalition for Community Solar Access all criticized the plan and the lack of a third-party program.

As Andrew Linhares, Midwest director of state affairs at the Solar Energy Industries Association, said in a statement, “Competitive markets for energy storage deployment ensure that ratepayers get the best, most affordable deal possible. The Capacity*Connect program takes the exact opposite approach.”

The genesis of Xcel’s Capacity*Connect program is a bit unusual.

It didn’t originate in a broader policy push for VPPs but instead came out of Xcel’s integrated distribution planning. Minnesota’s Public Utilities Commission created that regulatory structure in 2018 with the goal of getting investor-owned utilities to “maintain and enhance the safety, security, reliability, and resilience of the electricity grid, at fair and reasonable costs.” Integrating DERs into the grid is one way to do just that.

But integrating DERs into utility planning processes is entirely new territory. Utilities, Xcel included, have not factored these technologies into how they plan and spend money on their power grids. This means VPPs can’t yet specifically help offset distribution grid investments.

Instead, almost all existing VPPs target reducing peak electricity demand across utilities’ or grid operators’ entire service territories, as “bulk system” assets, Kenworthy said. That can — and does — save money by replacing the energy that would otherwise come from costly “peaker” power plants. That’s helpful, but it’s solving a different problem than distribution grid costs.

Using batteries and other DERs to relieve local grid constraints is a lot more technically challenging than relying on them to shave power demand during peaks. Utilities need to know exactly what stresses are happening at individual substations and distribution grid circuits from minute to minute. And they need far more confidence that the DERs will respond reliably and consistently to relieve those constraints in order to prevent overloads or blackouts.

Beyond a handful of pilot projects in California, Connecticut, Massachusetts, and New York, very few utilities have begun to experiment with using customer-sited DERs to relieve these kinds of pinpoint grid challenges. “We don’t have a way to do third-party substations,” Kenworthy said.

Xcel Energy spokesperson Kevin Coss said that the utility will work with local businesses, commercial and industrial sites, and nonprofits to install batteries “at strategic locations on the grid” to begin to test how each battery can mitigate local grid constraints. “These batteries will help meet increasing demand for electricity, maintain reliable service for our customers, maximize the efficiency of existing infrastructure, and support local jobs.”

Xcel Energy’s plan for paying for those batteries blurs the distinction between bulk-system and distribution-level values, as the utility’s batteries will serve both functions.

Xcel’s Capacity*Connect batteries will earn revenues for the bulk-system energy and capacity services they provide for the Midcontinent Independent System Operator (MISO), the entity that manages the transmission grid and wholesale energy markets across Minnesota and all or part of 14 other Midwestern states.

Those revenues will allow Xcel to pay back almost the entire cost of deploying the batteries, said Will Nissen, director of policy at the Minnesota-based Center for Energy and Environment, a nonprofit that’s in favor of the program. The utility has estimated that the batteries’ deployment and associated software development to manage them will add from 67 cents to $1.50 per year to a typical residential customer’s utility bill through 2030.

That’s key to the longer-term vision of using these batteries to avoid grid investment. Xcel has said it can’t start calculating the distribution-grid value of its batteries until it has had a few years to study them — the MISO revenues will fund this research.

Capacity*Connect will also get a $50 million investment from Google, as part of the tech giant’s broader deal with the utility to cover the energy needs of its new data center in the state.

“The beauty of this pilot is [that] it pays for itself with MISO revenues, while we learn about all the potential distribution value,” Nissen said. “It’s getting those bulk-system benefits while also studying how to use the distribution system as efficiently as possible.”

The commission ordered Xcel to establish specific estimates of the benefits that its batteries could provide to its grid by November 2027, when its next integrated grid plan is due, Kenworthy said. The commission also instructed Xcel to provide quarterly progress reports.

Vote Solar hopes that these provisions will drive the utility to “put a number on what avoided or deferred distribution investment is worth,” he said. “And we can take that to other forums where we’re trying to value DER and say, ‘This is what this device is worth, if we can do that thing.’”

Not all the stakeholders who have weighed in on the Capacity*Connect proceedings are as confident in that outcome, however.

“We really see this decision as a missed opportunity,” said Shannon Anderson, policy director at the nonprofit Solar United Neighbors, which is part of a coalition sponsoring VPP legislation in multiple states. “There’s no reason you can’t do ‘both and’ here — do what Xcel proposed and do a behind-the-meter VPP program.”

In fact, Public Service Co. of Colorado, Xcel Energy’s utility in that state, is currently rolling out a VPP program that will pay third-party companies that equip customers with batteries, smart thermostats, smart water heaters, smart heat pumps, and EV chargers, Anderson said.

In last week’s decision, the commission did order Xcel to report on how its experience in Colorado could apply to its work in Minnesota. But the commission declined to take up a proposal from a group of stakeholders that wanted it to order the utility to lay out a plan for doing something similar.

Nor does it seem likely that Minnesota’s legislature will order the commission to push Xcel to create a VPP program in the near future, Anderson said. Efforts to pass a VPP bill faltered last year, and similar legislation introduced this year has not passed out of a key committee, she said.

But VPP proponents are not giving up, Anderson said. “There are a number of filings coming, a lot of paperwork to evaluate and justify the program,” she said. “This is just the beginning of the conversation from our perspective.”

As a key deadline for federal solar tax credits ticks closer, a Massachusetts program is helping the state’s nonprofits get solar projects underway before the incentive disappears.

The Solar Upgrading Nonprofits, or SUN, program provides nonprofits with financial and technical assistance to evaluate options for solar installations and seek out additional funding if they choose to go forward. The first round, in 2025, worked with 23 organizations. Five have decided to move forward with installations that total 1.5 megawatts of installed capacity — double the goal for that phase of the program.

The stakes are even higher for SUN’s second round, which kicked off at the end of March. President Donald Trump’s One Big Beautiful Bill, signed last summer, put an expiration date on tax credits that can shave 30% to 50% off the cost of commercial-scale solar projects: They must start construction by July 4, 2026, or be placed in service by the end of 2027 to qualify — a tight timeline for most organizations.
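The two-part deadline amounts to an either/or test, which the short sketch below spells out. It is illustrative only: the function and constant names are hypothetical, both dates are treated as inclusive (an assumption the rule as described here does not settle), and any other eligibility requirements in the law are ignored.

```python
from datetime import date
from typing import Optional

# Dates as described in the article; treating them as inclusive is an assumption.
CONSTRUCTION_START_DEADLINE = date(2026, 7, 4)
PLACED_IN_SERVICE_DEADLINE = date(2027, 12, 31)


def meets_credit_deadline(
    construction_start: Optional[date],
    placed_in_service: Optional[date],
) -> bool:
    """Return True if a project satisfies either timing condition.

    Pass None for a milestone that has not happened or is not yet scheduled.
    """
    started_in_time = (
        construction_start is not None
        and construction_start <= CONSTRUCTION_START_DEADLINE
    )
    in_service_in_time = (
        placed_in_service is not None
        and placed_in_service <= PLACED_IN_SERVICE_DEADLINE
    )
    return started_in_time or in_service_in_time
```

Under these assumptions, a project that breaks ground in August 2026 would still pass the test if it were placed in service during 2027, while one that misses both dates would not.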

“There is still time, but it is dwindling very quickly,” said Rachel Gentile, marketing and communications manager for Resonant Energy, the Boston-based solar company spearheading the initiative with funding from the Massachusetts Clean Energy Center, a quasi-public economic development agency.

Adding to the urgency, nonprofits have had relatively little time to take advantage of the tax credit. While for-profit businesses have been able to claim the incentive for some 20 years, nonprofits became eligible only with the passage of the Inflation Reduction Act in 2022. As a result, the credit is disappearing before many organizations have even had a chance to dig into the possibilities.

“Nonprofits that have other missions that they’re dedicated to, it’s not necessarily on their list of priorities,” said Sanne Wright, community partnerships manager for Resonant Energy. “They’re already usually pretty strapped for cash and tight on capacity.”

The SUN program tries to overcome this barrier by making it easier for nonprofits to evaluate their options. The idea is modeled on another Resonant program, Solar Technical Assistance Retrofit, or STAR, which provides similar support to affordable housing providers looking to add solar to their buildings. Since its launch in 2021, STAR has installed 4.8 MW of solar capacity, with another 13.5 MW in the pipeline. When nonprofits became eligible for the federal tax credits, Resonant started thinking about how to adapt STAR for a new constituency.

The Massachusetts Clean Energy Center, which also funds STAR alongside the Jampart Charitable Trust, awarded the SUN program $150,000 for its first round and another $150,000 for the latest cycle. Resonant works with two partner agencies — Providers’ Council and the Essex County Community Foundation — to connect with nonprofits that might benefit from the assistance.

“Power is very expensive here,” said Kate Machet, vice president of systems initiatives and government relations for the Essex County Community Foundation. “This program has really allowed us to bring to our nonprofits on the ground the ability to explore solar.”

Interested organizations start with a short intake call to discuss their general goals for a solar project. Then they provide information about their electricity bills and the age and condition of their roof, and Resonant completes an analysis of their options. Whether or not the nonprofit decides to move forward, it receives a stipend of $2,500 to $7,500 as compensation for the staff time that went into the process. Those that opt to go ahead with an installation receive help identifying further funding opportunities and writing grant applications.

Grow Associates, a nonprofit that serves adults with developmental disabilities, applied to the SUN program in hopes of getting solar on the roof of its facility south of Boston. The grant-writing support helped the organization secure a $500,000 award from a state program that funds solar projects for nonprofits working with low-income populations. The money will cover almost the entire cost of the planned 162-kilowatt array.

“Without their help, we would not have been able to get the grant,” said Sarah Palin, Grow’s executive director. “We’re looking at saving about $72,000 a year in electricity costs that we can put back into our programs.”
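For a rough sense of scale, the figures cited for Grow's project can be combined in a few lines. This is a back-of-the-envelope sketch, not Resonant's or Grow's own analysis: the variable and function names are hypothetical, the $500,000 award stands in for the project cost (the article says only that it covers "almost the entire cost"), and annual savings are assumed to stay flat at $72,000.

```python
# Figures quoted in the article.
AWARD_USD = 500_000
ARRAY_KW = 162
ANNUAL_SAVINGS_USD = 72_000


def award_per_watt(award_usd: float, array_kw: float) -> float:
    """Grant dollars per watt of planned capacity (1 kW = 1,000 W)."""
    return award_usd / (array_kw * 1_000)


def years_of_savings_to_match(award_usd: float, annual_savings_usd: float) -> float:
    """Years of flat annual savings needed to equal the award amount."""
    return award_usd / annual_savings_usd


per_watt = award_per_watt(AWARD_USD, ARRAY_KW)
years = years_of_savings_to_match(AWARD_USD, ANNUAL_SAVINGS_USD)
print(f"Award per watt: ${per_watt:.2f}")
print(f"Years of savings equal to the award: {years:.1f}")
```

On these assumptions, the award works out to roughly $3.09 per watt, and the projected savings would add up to the award amount in just under seven years.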

Resonant is accepting applications for this round of SUN until at least July and will continue adding participants after that as long as the funding holds out.

Though Resonant is trying to help as many nonprofits as possible take advantage of the expiring tax credit, organizations that can’t make the deadline could still benefit from participating in SUN and installing solar, Wright said. Finding the money to cover the initial costs will be a challenge, but the average 300-kilowatt array could save an organization about $1.3 million over the system’s expected 25-year life without the tax credit, compared with $1.6 million with it, according to Resonant’s calculations.

“Organizations are going to have to get a little more creative about how they fund the project up front,” Wright said.
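The lifetime figures Resonant cites can be broken down with simple arithmetic. The sketch below is illustrative only: the names are hypothetical, and it spreads the savings evenly across the 25 years, which Resonant's own calculations may not do.

```python
# Figures quoted in the article for an average 300-kilowatt array.
LIFETIME_SAVINGS_WITH_CREDIT_USD = 1_600_000
LIFETIME_SAVINGS_WITHOUT_CREDIT_USD = 1_300_000
SYSTEM_LIFE_YEARS = 25


def credit_impact(with_credit: float, without_credit: float) -> tuple[float, float]:
    """Return (dollar reduction, reduction as a share of the with-credit total)."""
    reduction = with_credit - without_credit
    return reduction, reduction / with_credit


def average_annual_savings(lifetime_savings: float, years: int) -> float:
    """Lifetime savings spread evenly over the system's life (a simplification)."""
    return lifetime_savings / years


reduction, share = credit_impact(
    LIFETIME_SAVINGS_WITH_CREDIT_USD, LIFETIME_SAVINGS_WITHOUT_CREDIT_USD
)
annual_without = average_annual_savings(
    LIFETIME_SAVINGS_WITHOUT_CREDIT_USD, SYSTEM_LIFE_YEARS
)
print(f"Lifetime savings lost without the credit: ${reduction:,.0f} ({share:.1%})")
print(f"Average annual savings without the credit: ${annual_without:,.0f}")
```

By this reckoning, losing the credit trims lifetime savings by $300,000, or just under 19%, while an organization would still average about $52,000 a year in savings.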