A narrow complaint to a federal energy commission could have wide implications for the solar industry and the electric grid, not only in North Carolina, where it originated, but nationwide.

At issue is a unique planning scheme that’s been years in the making. Duke Energy, the state’s predominant utility, is moving to proactively upgrade poles and wires to create room for prospective solar farms. Rather than making improvements pegged to specific projects and then charging solar developers for the full cost, as it did in the past, the company is now building in anticipation of future grid needs and spreading the costs among all customers.

In recent years, state regulators have pushed Duke to take this approach to alleviate grid congestion. The company is thought to be the first utility in the country to address local transmission needs in this way, even though it is far from the only one with a long backlog of projects waiting to plug into the grid.

But one set of Duke customers isn’t happy. North Carolina’s electric member cooperatives, which buy most of their power wholesale from the utility, filed a complaint with the Federal Energy Regulatory Commission in February over four grid projects. They argue that the cost of the upgrades — $57 million, in this case — should not be distributed evenly among all customers. Instead, they want solar developers to pay half the total cost.

Many observers believe the protest is on shaky legal ground. Yet FERC is chaired by an appointee of President Donald Trump, who is known to attack renewable energy regardless of the law. The commission is expected to make a decision by the fall, and if it rules in the co-ops’ favor, experts say the ripple effects could be dire.

For one, the solar projects banking on the four grid upgrades could falter if they are forced to bear millions of dollars in new expenses. A ruling for the co-ops could also send Duke back to its old transmission planning method, a strategy criticized as costly, ineffective, and hostile to new solar.

“It would be hugely disruptive to the solar industry, but also to the development of the transmission system in the Carolinas more generally,” said Ben Snowden of Fox Rothschild LLP, an attorney for solar developers who isn’t directly involved in the case. “It would be a huge mess.”

What’s more, a decision for the co-ops could set the stage for federal meddling in local grid planning.

“Better-planned transmission will save ratepayers money while providing a more reliable grid,” said Chris Carmody, executive director of the Carolinas Clean Energy Business Association. “This complaint could establish precedent for expensive slowdowns and federal interference in state decision-making.”

Duke’s current approach to network upgrades arose because the old one was failing.

As North Carolina policymakers passed laws to speed the clean energy transition in the 2000s and 2010s, Duke was flooded with requests from developers looking to bring large-scale solar arrays online.

To accommodate these projects, the utility sometimes had to replace lines, poles, and other infrastructure. Whenever that was the case, Duke sought to charge 100% of those costs directly to solar developers. Some paid up and connected to the grid, but others balked and withdrew or were delayed indefinitely.

“Every project was studied, one after the other, and the first project to trigger an upgrade was assigned the entire cost of that upgrade,” Snowden said, even if the improvement made way for lots of other projects to interconnect, too.
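To make the contrast concrete, here is a minimal Python sketch of the two allocation rules. It assumes a single upgrade and uses made-up project sizes, costs, and customer loads; none of these numbers come from Duke, and the function names are hypothetical. Under the serial rule, whichever project first exceeds the remaining headroom is charged the full upgrade cost. Under a rolled-in rule, the same cost is split among customers in proportion to their load.

```python
def serial_allocation(queue_mw, headroom_mw, upgrade_cost, upgrade_adds_mw):
    """Hypothetical 'first to trigger pays' model with a single upgrade.

    queue_mw: project sizes in MW, in queue order.
    headroom_mw: spare grid capacity before any upgrade.
    Projects that still don't fit after the one upgrade are ignored here.
    """
    charges = []
    upgrade_built = False
    for mw in queue_mw:
        if mw > headroom_mw and not upgrade_built:
            charges.append(upgrade_cost)    # the trigger pays 100%
            headroom_mw += upgrade_adds_mw  # later projects ride for free
            upgrade_built = True
        else:
            charges.append(0.0)
        headroom_mw -= mw
    return charges


def rolled_in_allocation(upgrade_cost, customer_load_mw):
    """Spread the same cost across all customers in proportion to load."""
    total = sum(customer_load_mw.values())
    return {name: upgrade_cost * load / total
            for name, load in customer_load_mw.items()}


print(serial_allocation([80, 80, 80], headroom_mw=100,
                        upgrade_cost=30e6, upgrade_adds_mw=200))
print(rolled_in_allocation(30e6, {"coop": 600, "city": 300, "industrial": 100}))
```

With three 80-megawatt projects and 100 megawatts of headroom, the second project in line is charged the full $30 million, while the third connects on the same upgrade at no cost.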

“The part of Duke’s system that was most conducive to solar got to the point where it was — in Duke’s view — pretty much at capacity,” he said. Any new generator — solar or otherwise — that sought to interconnect in that area would be tagged with tens or hundreds of millions of dollars of upgrades. “The queue got clogged, and it was stuck for a couple of years.”

Over time, the logjam contributed to a slowdown in renewables. New large-scale solar installations plummeted to about 200 megawatts in 2022, down from a 2017 peak of nearly 1.2 gigawatts, according to data from the Solar Energy Industries Association.

The most congested areas on the grid became known collectively as the “Red Zone.” Duke, developers, and other parties deemed over a dozen projects necessary to upgrade lines, replace poles, and make other improvements. But the congestion persisted because no one could pay for the upgrades.

Then, in 2022, the North Carolina Utilities Commission began to turn the ship. The commission ruled that Red Zone upgrades were “appropriate” and “reasonable.” The projects would enable over 3.7 gigawatts of solar to connect to the grid, commissioners said, while providing “operation and resiliency benefits.”

Crucially, regulators also laid the groundwork for upgrade costs to be shared by all customers, instead of paid for by developers alone. Finally, the commission noted flaws in Duke’s transmission planning strategy and urged the company to “engage with stakeholders” to improve its process.

The company did just that, workshopping the Red Zone projects with interested parties and setting up a scheme to identify future grid needs that would provide multiple benefits.

“Duke — pulled kicking and screaming — has made pretty big strides on modernizing its transmission planning,” said Nick Guidi, senior attorney at the Southern Environmental Law Center. “Kudos to Duke for adopting that process.”

Duke didn’t respond to a request for comment for this story. But the company told FERC that the four contested upgrades were on the original Red Zone list and had been extensively vetted by a range of parties — including the state’s member cooperatives.

The Red Zone projects, Duke wrote, “were identified through years of collaborative local transmission planning … and selected because they provide broad, system‑wide reliability, resiliency, and economic benefits that far exceed their costs.”

The company also noted the projects will “help reduce overall power costs for all users” and even facilitate new gas generation in which the co-ops have partial ownership.

A spokesperson for the North Carolina Electric Membership Corporation, the association of 25 rural co-ops bringing the challenge against Duke, declined to speak to Canary Media for this story.

The co-ops’ complaint doesn’t make clear why they chose to object to the four improvement projects in question — two in Erwin, halfway between Raleigh and Fayetteville; one in Sanford, in the state’s dead center; and one in Camden, just west of the Outer Banks.

But their protest repeatedly states that the improvements are “proactive solar upgrades” that primarily help solar companies. A follow-up filing dismisses systemwide reliability and other benefits asserted by Duke as a “barrel of red herrings.”

The $57 million that Duke has assigned to customers for the four upgrades is a “simple unfairness,” the complaint says. Customers should bear only half those costs, and the co-ops’ share should be reduced from $802,000 per year to $401,000. The rest, they argue, should be borne by solar developers, the projects’ “primary beneficiaries.”

“That’s a really faulty premise,” Snowden said. “That’s like saying that the water pipes that run down my street are for the benefit of the people who sell me water.”

What’s more, clean energy and consumer advocates say, the proactive nature of the Red Zone projects is a good thing — unlike Duke’s old “Whac-A-Mole” approach — and their price tag is appropriately rolled into the transmission fees the utility charges its customers.

“You have to spread the costs out across the broader grid,” said Guidi of the Southern Environmental Law Center, “because they provide benefits to the broader grid.”

Perhaps the $401,000 in savings would trickle down to the co-ops’ 1 million metered customers, representing 2.8 million North Carolinians. But, Guidi said, “It would be a drop in the bucket.”
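The figures in the complaint make Guidi’s point easy to check. This short Python sketch uses the reported $57 million total, the co-ops’ $802,000 annual share, and roughly 1 million meters. It assumes, as the article frames only as a possibility, that any savings would be spread evenly across those meters; the variable and function names are illustrative.

```python
# Figures as reported in the complaint coverage above.
TOTAL_UPGRADE_COST = 57_000_000  # four contested upgrades, USD
COOP_SHARE_NOW = 802_000         # co-ops' share today, USD per year
COOP_METERS = 1_000_000          # approximate metered co-op customers


def split_cost(total, customer_fraction):
    """Return (customers' portion, developers' portion) of a cost."""
    customers = total * customer_fraction
    return customers, total - customers


# The complaint asks that customers bear only half the cost.
customers_total, developers_total = split_cost(TOTAL_UPGRADE_COST, 0.5)
coop_share_requested = COOP_SHARE_NOW * 0.5

annual_savings = COOP_SHARE_NOW - coop_share_requested
# Assumes savings are passed through evenly to every meter.
per_meter_year = annual_savings / COOP_METERS

print(f"Developers would pay ${developers_total:,.0f}")
print(f"Savings: ${per_meter_year:.2f} per meter per year, "
      f"{100 * per_meter_year / 12:.1f} cents per month")
```

Halving the co-ops’ share saves $401,000 a year, which works out to roughly 40 cents per meter annually, or about 3 cents a month.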

The impact could be more acute for solar companies, which tend to operate on thin margins. The extra costs could conceivably cause developers relying on the four upgrades to withdraw, Snowden said. However, he added, “I think the bigger danger is: Do you undermine Duke’s willingness to continue with proactive transmission planning?”

The complaint is the first of its kind, making its outlook murky.

“It’s a very big swing from a legal standpoint,” Snowden said. “There are some very serious questions about the relief that they’re seeking, including whether FERC has the jurisdiction to provide this relief at all.”

The five-member commission still contains three appointees from former President Joe Biden, and Trump’s choice for chair is generally considered qualified and conventional.

But when disputes over renewable energy reach a body even remotely touched by the president, all bets are off.

“They’re trying to identify these four lines as solar lines,” Guidi said. “Whether that’s their belief, or whether they are trying to play to a federal administration generally not friendly to solar, that is seen throughout their complaint.”

Furthermore, the petition clearly signals that more challenges to Red Zone improvements could be on the way, as it calls the four upgrade projects “the tip of an iceberg.”

“This is just the start,” Guidi said. “I don’t think they expect it to end here.”

For decades, utilities have used smart thermostats to reduce strain on the grid when electricity consumption is super-high. Paying customers to let utilities turn down air conditioning on hot summer afternoons or electric heating on cold winter mornings is called demand response, and it’s delivering gigawatts of valuable grid relief today.

Phoenix’s Ahwatukee Foothills neighborhood is served by the utility Salt River Project, an early mover in tapping smart thermostats to reduce pressure on the grid. (Hunter Trick [Trick Hunter], CC BY-SA 4.0 via Wikimedia Commons)

But millions more of these smart thermostats are shifting households’ temperatures on a daily basis — and not on behalf of utilities. Instead, the owners of these devices have agreed to let smart thermostat companies modify their temperature settings to avoid costly peak power rates, or to use more clean energy and less dirty energy.

While this energy shifting has largely been invisible to utilities, some are now gathering data on how these under-the-radar systems could be leveraged to avoid costly infrastructure upgrades or to burn less fossil fuel. Put simply, the more smart thermostats that utilities can recruit to lower peak demand, the less they have to run dirty power plants and the fewer wires and poles they need to transport electrons.

Big Arizona utility Salt River Project is one early mover on this front. Last year, it worked with smart thermostat firm Renew Home to see how thousands of the company’s thermostat-equipped customers in and around the Phoenix area could reduce strain on the grid. Those thermostats belonged to households that opted into Renew Home’s Energy Shift program, which lets the company automatically adjust their temperature settings throughout the day. Nationwide, about 5 million customers representing 4 gigawatts of capacity have signed on to that initiative.

The tracking effort revealed that customers enrolled in Energy Shift are easing peak grid pressures nearly as effectively as those enrolled in the utility’s smart thermostat demand-response program.

Over the course of six test events last August and September, about 28,500 Energy Shift–enabled homes each delivered about 1.1 kilowatts of peak load reduction on average, for a total of about 27 megawatts, Josh Logan, Salt River Project’s senior product manager, said during a March webinar.

That’s not quite as much load reduction per home as the average 1.3 kilowatts per thermostat that Salt River Project gets from the roughly 75,000 customers enrolled in its standard demand-response program, he said. But an additional 27 megawatts of peak relief happening more or less automatically is nothing to sneeze at, he added.

It’s worth pausing to note the trickiness of comparing customer load-reduction programs like Energy Shift to typical utility demand-response initiatives. Utilities and regulators have always thought of demand response as something that happens during emergencies to directly alter how customers would have otherwise used energy. Utilities want to see a direct reduction in energy demand from some typical baseline.

Energy Shift’s frequent tweaks to millions of household thermostats upend those benchmark expectations, said Will Baker, Renew Home’s senior director of market integration. To measure the impact of its test events in Arizona and elsewhere, the company uses randomized controlled trials that pull data from a broad range of customers to determine a baseline, he said.
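The basic idea behind such a trial can be sketched in a few lines of Python. The estimator below is a standard difference in means between randomly held-out control homes and adjusted homes, with a simple standard error. It is an illustrative assumption, not Renew Home’s actual methodology, and the sample readings are made up.

```python
import math
import statistics


def rct_load_reduction(control_kw, treatment_kw):
    """Estimate average per-home load reduction from a randomized trial.

    control_kw: average event-window demand (kW) for homes randomly held out.
    treatment_kw: the same metric for homes whose thermostats were adjusted.
    Returns (estimate_kw, standard_error_kw).
    """
    # Randomization makes the control group the baseline: the gap between
    # group means estimates what the adjustments saved per home.
    estimate = statistics.mean(control_kw) - statistics.mean(treatment_kw)
    standard_error = math.sqrt(
        statistics.variance(control_kw) / len(control_kw)
        + statistics.variance(treatment_kw) / len(treatment_kw)
    )
    return estimate, standard_error


diff, se = rct_load_reduction([3.0, 3.2, 2.8, 3.0], [2.0, 1.8, 2.2, 2.0])
print(f"{diff:.2f} kW per home (standard error {se:.2f} kW)")
```

Multiplying a per-home estimate by the number of participating homes gives a fleet-level figure in megawatts.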

The company’s results are prompting Salt River Project to consider offering Energy Shift customers incentives to expand how often or how deeply they’re willing to shift their energy use. While the utility isn’t disclosing what financial arrangements it might work out to tap those smart thermostats more reliably in the future, Logan said he expected them to be “extremely cost-effective” for the utility.

Renew Home worked with the company EnergyHub to share this data with Salt River Project free of charge. The utility already uses EnergyHub’s online platform to manage its existing demand-response programs, and the smart thermostat data from Renew Home was rolled into that tool so the utility could view it easily.

Arizona isn’t the only place where EnergyHub and Renew Home are collaborating to surface the value of what they call “background virtual power plants” — networks of distributed energy resources that operate with no utility management.

During Winter Storm Fern in January, for example, the two companies found that Energy Shift customers reduced load for an unnamed Southeast U.S. utility by 50 megawatts, said Megan Nyquist, EnergyHub’s senior product market manager. That’s about twice as much winter peak reduction as that utility has enrolled in its official smart thermostat demand-response program, she said.

“Utility programs will continue to be a huge part of how [virtual power plants] grow and scale. But they’re not the only source of flexible capacity out there,” Nyquist added.

Last summer, Renew Home reported that it was able to provide 380 megawatts of load reduction over two hours on a hot July afternoon in the territory of PJM Interconnection. PJM, which manages the grid for more than 67 million people in 13 states and Washington, D.C., faces a cost crisis in meeting its peak demand.

Tyson Brown, Renew Home’s head of utility partnerships, noted during the March webinar that this achievement came from “only a fraction of the available fleet. If we actually dispatched the entire Energy Shift–enabled fleet in PJM, the impact would have been closer to 800 megawatts.”

One important advantage of Energy Shift’s day-to-day adjustments is that they are generally less disruptive to household comfort than traditional demand-response programs, Brown said. Utilities that ask customers to shiver through the coldest mornings or swelter through the hottest afternoons struggle to keep households enrolled.

“The goal here is for it to really be imperceptible, such that the end user feels as if the thermostat is doing the things that it’s already been doing for them,” he said, noting that customers are always free to cancel their participation if they want to.

Paying consumers to use less energy during times of peak demand can help save all utility customers money in the long run, Baker noted. That’s because utilities pass the costs of building and operating the power plants and grid infrastructure needed to meet peak loads on to all customers through their rates. Anything utilities can do to reduce those costs can eventually lead to lower rates across the board.

Renew Home is a member of the Utilize Coalition, a group of companies promoting virtual power plants as a means of reducing rising utility bills. Baker declined to name other utilities that might be considering methods to pay Energy Shift customers for committing to reduce peak energy use. But he did say, “We’re going into our preseason planning with our utilities — and there’s not a single utility we’re not talking with about this.”

Enormous new batteries keep appearing on the grid, making it devilishly tricky to keep track of which is the biggest in a given region. That’s certainly the case in New England, where acute power needs and robust state climate goals are fueling a buildout of big batteries that keep breaking capacity records.

Canary Media recently covered the inauguration of the 175-megawatt Cross Town battery in Gorham, Maine, which was the largest in New England when it began operating in late November. But that trophy has already passed to a 250-megawatt facility in Medway, Massachusetts, southwest of Boston and about 10 miles from the Patriots’ Gillette Stadium.

The Medway battery came fully online Feb. 25, according to developer VC Renewables, a subsidiary of global energy trader Vitol.

“To be fair, I don’t expect Medway to hold that title for very long, either,” said Tom Bitting, managing director at Advantage Capital, which supported the project with a $158 million tax equity deal. “There are other batteries being developed in New England that are bigger, but I think it is all just a sign that we need all of it, and there’s huge demand for it.”

For instance, Jupiter Power, a heavyweight in Texas’ booming grid storage market, is developing the 700-megawatt/2.8-gigawatt-hour Trimount battery plant at a former oil-storage site in Everett, Massachusetts, just north of Boston. Jupiter aims to finish the project in 2028 or 2029. Trimount is slated to be among the largest stand-alone batteries in the whole country. Vistra’s battery in Moss Landing, California, set the national record at 750 megawatts/3 gigawatt-hours before much of that capacity burned up in a disastrous fire.
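The paired figures in a battery’s rating describe two different things: megawatts measure how fast it can discharge, and gigawatt-hours measure how much energy it stores. Dividing one by the other gives the battery’s duration at full power, as in this simplified Python sketch, which ignores conversion losses and state-of-charge limits.

```python
def duration_hours(power_mw, energy_mwh):
    """Hours a battery can discharge at full rated power (losses ignored)."""
    return energy_mwh / power_mw


trimount = duration_hours(700, 2_800)      # 700 MW / 2.8 GWh
moss_landing = duration_hours(750, 3_000)  # 750 MW / 3 GWh
print(trimount, moss_landing)
```

Both Trimount and the original Moss Landing facility work out to four-hour batteries.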

The wave of battery megaprojects marks a new chapter for the region, which until recently was focused on building small-scale batteries. Massachusetts encouraged this by requiring energy storage alongside many distributed solar projects that received payments through the state’s main solar incentive; this rule led to a buildout of systems in the range of 1 to 5 megawatts.

Bigger batteries started taking off in the late 2010s out West: in California, Arizona, and Nevada, where developers can sign long-term contracts to deliver grid capacity; and in Texas, where they can bid into a uniquely competitive market.

The first three big batteries in New England — Plus Power’s Cranberry Point and Cross Town, as well as Medway, which was previously developed by Eolian — won seven-year contracts in 2021 to provide capacity for the New England grid, but the grid operator subsequently shortened that kind of contract to one year. After that change, developers have struggled with the lack of long-term capacity revenue; they can still charge up when prices are low and sell when they’re high, but that’s an unpredictable revenue stream that financiers might not want to underwrite.

Massachusetts has succeeded in building a robust fleet of small-scale solar — on recent sunny spring days, it has generated close to 50% of the region’s demand. But leaders knew they needed batteries to keep cleaning up the grid in the hours when solar doesn’t produce. So they created a new policy driver for storage investment called the Clean Peak Standard, which officially took effect in 2020.

The rule orders utilities to serve a percentage of their peak-demand hours with clean electricity, and the target grows with each passing year. Companies that use batteries to save solar energy for the evening — when electricity consumption rises as people get home from work and school — earn credits that they can sell to utilities, providing some revenue certainty outside the wholesale market.
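A heavily simplified Python sketch of the credit mechanism might look like this. The peak window, the one-credit-per-megawatt-hour rate, and the compliance percentage are all hypothetical; the actual Massachusetts rules define seasonal windows and apply multipliers that this sketch leaves out.

```python
from datetime import datetime

# Hypothetical peak window: hours starting 5 p.m. through 8 p.m. The real
# Clean Peak Standard uses seasonal windows and multipliers omitted here.
PEAK_START_HOURS = range(17, 21)


def clean_peak_credits(hourly_discharge_mwh):
    """Count energy discharged during the peak window.

    hourly_discharge_mwh maps the start of each hour to MWh delivered.
    Assumes one credit per MWh, with no multipliers.
    """
    return sum(
        mwh
        for hour_start, mwh in hourly_discharge_mwh.items()
        if hour_start.hour in PEAK_START_HOURS
    )


def credits_required(peak_window_load_mwh, required_percent):
    """Credits a utility must hold for a given (hypothetical) target."""
    return peak_window_load_mwh * required_percent / 100


# A battery that charged on midday solar and discharges into the evening.
day = {datetime(2025, 6, 2, h): 100.0 for h in (18, 19, 20)}
day[datetime(2025, 6, 2, 22)] = 50.0  # after the window: earns nothing here
print(clean_peak_credits(day))
print(credits_required(10_000, 5))
```

Raising `required_percent` from year to year mirrors the rule’s escalating target, which in turn raises demand for the credits batteries earn.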

The administration of Gov. Maura Healey, a Democrat, views storage as a key lever to improve energy affordability, Undersecretary of Energy Michael Judge said, because it makes better use of existing grid infrastructure to meet peak demand.

“Store all that solar energy that we’re producing in the middle of the day and bring down the cost of operating the system for everyone,” he said. “You don’t have to run these peakers, and you get all the emissions benefits and integration of clean energy benefits, too.”

It took several years for the rule to actually spur batteries in the multihundred-megawatt range, but now that era has begun. Advantage Capital, for example, factored in revenues from the Clean Peak Standard when it analyzed and underwrote the investment in the Medway project, Bitting noted. A total of 725 megawatts of battery storage had qualified for the Clean Peak Standard as of early March, according to state data.

Stand-alone grid battery projects are also bolstered by a federal tax credit that can cut investment costs by 30%, an incentive that the Trump administration preserved in last summer’s budget law even as it slashed support for wind, solar, and electric vehicles.

Clean Peak cash alone doesn’t pay the bills; battery developers still need to make money in the marketplace. Though New England lacks long-term capacity contracts, storage companies in the region have at least two factors working in their favor: some of the nation’s highest electricity prices and growing demand for power.

“It’s very difficult to get additional generation online in an area with high population density, because regardless of what type of power generation you’re building, it requires a lot of space,” Bitting said. Batteries, though, can fit a lot of power into a relatively small footprint, without the smokestacks or pollution that make it hard to build new fossil-fueled plants in populous areas.

Batteries compete directly with gas power plants to serve the peak hours of demand, when prices are highest. That’s especially valuable in New England, where gas supplies are stretched thin between power generation and home heating on the coldest days of the year.

“When it’s cold, the households are going to continue to demand it,” Bitting said. “But if we can ease some of the peak on the utility side, that will provide a relief valve to supply.”

Jupiter Power’s colossal Trimount project will continue New England’s foray into large batteries, with the ability to discharge enough power for roughly 500,000 homes, per the developer. Trimount was the largest of four battery projects selected in December from Massachusetts’ statewide solicitation to bring on more Clean Peak power. Previously, battery owners could sell off their Clean Peak credits on a quarterly or annual basis. The new solicitation was designed to produce “cost-effective” long-term contracts for storage, giving developers more stable revenue to plan around. Furthermore, Healey doubled down on grid storage in a March 16 executive order that calls for another 5 gigawatts installed by 2035.

“That kind of policy signal, combined with the state’s grid reliability challenges and its decarbonization commitments, creates the conditions for investment at scale,” Hans Detweiler, senior director for development at Jupiter, said in an email.

Massachusetts officials also hope to speed development with new permitting rules, which run large battery applications through a state-level body instead of piecemeal local processes. Community members still get to weigh in, but the program sets a clear 15-month timeline and allows just a single appeal, to the state’s highest court, to ensure more timely resolution of permitting conflicts.

The true test of all these policies will be whether the recent megabatteries kick off a trend, or remain bold outliers in the region’s energy system.

After years of negotiations, data centers and other large customers of Georgia Power have finally won a pathway to pay for their own new clean energy projects to be built and connected to the utility’s grid.

The Georgia Public Service Commission approved the utility’s program last week, allowing these companies to identify and commit to paying for solar, battery, and other clean energy projects to supply their own power needs.

If it works as planned, the new customer-identified resource, or CIR, program could help prevent data center growth from raising power bills for Georgia Power’s customers at large — and offer a template for other utilities and regulators wrestling with similar issues nationwide.

Georgia Power is planning one of the largest new fossil-fuel buildouts in the country. Over the next five years, the utility wants to build nearly 10 gigawatts of new capacity resources, roughly 60% of which would come from natural gas power plants. The utility says it needs this new capacity to keep the grid running as power-hungry data centers flood into the state.

Those new power plants may be justifiable if the proposed data centers get constructed and keep operating long enough for the utility to recoup the costs through electricity sales. But if the AI bubble deflates, as more and more industry observers fear will happen, then the cost of paying off those utility investments could fall on everyday customers.

Programs like CIR are meant to protect customers at large from that worst-case scenario as well as from upward pressure on utility rates linked to data centers.

The program’s effectiveness on that front will depend on whether it leads Georgia Power to build less electricity infrastructure than it currently plans to through its normal processes, thus reducing cost burdens on customers.

But that effectiveness will be hard to measure, given how the program is designed. Under the program, Georgia Power does not have to include any CIR projects in its long-term grid planning. If regulators don’t rectify that and force the utility to incorporate those projects into its plans, it may wind up adding gigawatts of unnecessary power plants in addition to whatever clean energy moves through the new program. Customers at large would foot part of the bill.

This issue will likely come to a head in Georgia Power’s next integrated resource planning process — the sprawling regulatory proceedings aimed at determining how much power, and what mix of resources, a utility needs to develop or maintain to meet its future needs.

Still, the unanimous vote approving CIR indicates that state regulators want Georgia Power to “work with large loads on the system in a way that manages cost shifts and concerns related to affordability,” said Nidhi Thakar, senior vice president for policy at the Corporate Energy Buyers Association (CEBA), the trade group that negotiated with the utility to create the CIR program.

CEBA includes major hyperscalers — like Amazon, Google, Meta, Microsoft, and Oracle — that signed a “ratepayer protection pledge” at the White House last month, promising to limit the risk that their data center expansion plans will increase everyday utility customers’ electricity rates. But most of the actions that could actually fulfill that pledge will rely on efforts from individual states and utilities, energy experts say — such as the CIR program from Georgia and Georgia Power.

“These large customers are willing to put down capital on the front end and take on the risk” to build the clean energy to supply significant portions of their demand, said Katie Southworth, CEBA’s deputy director for market and policy innovation in the South and Southeast. “This program opens up the procurement pathways.”

Starting this summer, large commercial and industrial customers in Georgia Power’s territory can use CIR to seek out and work directly with independent developers of solar, wind, battery, geothermal, and other carbon-free energy projects, Southworth said.

That’s a first for Georgia Power. As with many utilities in the Southeast and Midwest, it is vertically integrated, meaning it has exclusive rights to contract for power plants. The utility already lets big customers subscribe to clean energy projects that Georgia Power selects and contracts with, but it hasn’t previously allowed customers to bring their own specific clean energy projects to the table.

States without vertically integrated utilities let independent power producers contract directly with big customers. Big power users, and tech giants in particular, have taken advantage of this arrangement where available. U.S. corporate clean-energy procurement surpassed 130 gigawatts of new generation capacity between 2014 and 2025, according to the latest CEBA data. That’s roughly 44% of all new generation capacity built in that time, CEBA told Canary Media.

Under the CIR option, these large customers still won’t directly purchase energy from the projects that they have identified. Instead, they will pay Georgia Power a monthly tariff designed to cover the projects’ construction and operating costs, plus a reasonable rate of profit for the projects’ owners, in an arrangement CEBA likens to a “sleeved power purchase agreement.”

Solar and batteries will probably make up the lion’s share of that new CIR capacity, given that more than 20 gigawatts of those resources are being developed and seeking interconnection in Georgia, according to data from the Southern Renewable Energy Association trade group.

Solar and batteries are also the cheapest sources of new capacity available nationwide, which could lower energy costs both for the big customers contracting for them and for Georgia Power customers at large. Together, solar and batteries are expected to account for nearly 90% of new power capacity built nationwide this year.

Under the CIR structure, if the power from these projects is cheaper than the equivalent cost of power generated and delivered by the utility, 75% of the resulting savings will go to participating customers, while 25% will be shared with other Georgia Power customers, Southworth said.
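The savings split Southworth describes amounts to simple arithmetic, sketched here in Python with hypothetical dollar figures. The function name and inputs are illustrative. The sketch treats any case where CIR power costs more as zero savings, since how the tariff handles that case isn’t described here.

```python
def cir_savings_split(utility_cost, cir_cost, participant_share=0.75):
    """Split savings when CIR power beats the utility's equivalent cost.

    Returns (to the participating customer, to other customers).
    Returns zeros when there are no savings (an assumption of this sketch).
    """
    savings = max(utility_cost - cir_cost, 0.0)
    to_participant = savings * participant_share
    return to_participant, savings - to_participant


print(cir_savings_split(1_000_000, 800_000))
```

In this made-up case, $200,000 in savings splits into $150,000 for the participating customer and $50,000 for everyone else.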

That could help get large-load customers — namely AI data centers — the massive amounts of energy they need without increasing utility rates for customers at large.

Georgia Power’s previous renewable-procurement structures have helped “diversify our generation mix and increase reliability,” Wilson Mallard, the utility’s director of renewable development, told Canary Media in an email. Adding CIR to those existing structures “offers the opportunity for the procurement of additional renewable resources at competitive prices to meet customer needs,” he said. “We expect these projects will provide energy and capacity benefits to the system value for all Georgia Power customers.”

CEBA fought for some key features that made it into the final CIR program approved by Georgia regulators last week.

For one, Georgia Power removed a contentious provision that would have allowed the utility to terminate CIR contracts at any time and without penalty, Southworth said.

Additionally, small commercial and industrial users of power can now band together to collectively achieve the 3-megawatt minimum required to participate, Southworth noted. That could expand options for retail chains, hotels, local businesses, or local governments to secure their own clean energy resources. And customers will be allowed to transfer in and out of those arrangements, which allows for more flexible participation.

But CEBA wasn’t able to secure one feature it had wanted — a way for CIR customers to earn credits for the capacity value of the projects they bring online. Capacity is how utilities measure the impact that power plants, solar and wind farms, batteries, and other resources have on meeting the peak demands on their grid.

Those peak demands are important because they determine how much generation and grid infrastructure utilities ultimately build. What’s more, large utility customers typically have to pay demand charges, which are based on how much power they use during the handful of hours when electricity use hits its upper limit.

The CIR program’s monthly tariff is an energy-only tariff. That means participating customers won’t be able to reduce their demand charges on the basis of the projects they’ve enabled to be built under CIR — even if that infrastructure helps Georgia Power reduce its peak demand.
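Here is a rough sketch of why an energy-only credit leaves demand charges untouched. It assumes a simplified bill with one charge per kilowatt-hour and one demand charge on the month's highest hourly load. The function, rates, and load profile are all hypothetical and do not represent Georgia Power's actual tariff.

```python
def monthly_charges(hourly_kw: list[float],
                    energy_rate: float,
                    demand_rate: float,
                    energy_credit_kwh: float = 0.0) -> tuple[float, float]:
    """Hypothetical bill split into (energy charge, demand charge).

    hourly_kw: average load in each hour, so each value is also that hour's kWh.
    energy_credit_kwh: kWh credited under an energy-only arrangement. It lowers
    the energy charge but never touches the peak-based demand charge.
    """
    energy_kwh = sum(hourly_kw)
    net_kwh = max(0.0, energy_kwh - energy_credit_kwh)
    energy_charge = net_kwh * energy_rate
    demand_charge = max(hourly_kw) * demand_rate  # set by the single highest hour
    return energy_charge, demand_charge


# Hypothetical month: steady 1,000 kW load with 20 peak hours at 3,000 kW
load = [1_000.0] * 700 + [3_000.0] * 20
without = monthly_charges(load, 0.08, 15.0)
with_credit = monthly_charges(load, 0.08, 15.0, energy_credit_kwh=200_000)
print(without[1] == with_credit[1])  # True: the demand charge is unchanged
print(with_credit[0] < without[0])   # True: only the energy charge falls
```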

But sooner or later, Georgia Power and its regulators will need to consider how to capture the grid value these CIR projects provide.

That’s likely to play out in the utility’s future integrated resource planning, Southworth said. “When we get to the next IRP, I’m confident there will be some resources — solar and storage — that will be brought on” under CIR, Southworth said. “Those will be resources on the Georgia Power system, and through that modeling, they will absolutely show up” as part of the capacity calculations.

The question then will be whether that newly unveiled CIR capacity will alter Georgia Power’s current power plant expansion plans, which were approved in December through a regulatory process that has unfolded largely outside the utility’s standard IRP proceedings. Georgia Power and regulators justified this approach as a way to deal with a massive increase in the utility’s forecasts of how much power and capacity it will need to supply in future years, which have surged from 400 megawatts in 2022 to 6.6 gigawatts in 2023 to 8.5 gigawatts in 2025.

But environmental groups, consumer advocates, and others say Georgia Power’s latest expansion plan for gas power plants and batteries allows the utility to overbuild for a data center boom that may fail to emerge. Georgia Power will be able to earn guaranteed levels of profit on the $16.3 billion in “company-owned projects” in that plan, giving it an incentive to overestimate its power needs.

Last month, a coalition of environmental and faith groups brought a legal challenge against the decision. “The commission still has to apply its rules to protect its ratepayers from overbuilding,” said Isabella Ariza, a staff attorney with the Sierra Club, one of the groups filing the legal challenge. “And we think those rules are the only protection that ratepayers have at this point.”

Ariza noted that the final version of the CIR program wasn’t yet posted by the Public Service Commission, which limited her ability to discuss how the resources brought online under the program might impact future capacity planning.

Even so, “in future IRPs, Georgia Power would have a hard time theoretically explaining to the commission why the clean resources shouldn’t offset some of the peak demand,” she said. “But we’ll see.”

A correction was made on April 15, 2026: This story originally misstated that Georgia Power has exclusive rights to build and operate generation in its service territory. In fact, the utility also contracts with third-party solar and battery projects under its CARES program.

In western Illinois, ComEd is tapping a rarely used technique to fast-track community solar installations — working with, not against, environmental groups and solar project developers.

For years, utilities have explored the concept of flexible interconnection, in which solar projects are allowed to come online even when, by the books, there’s not enough space on the grid for these arrays. In return, these solar farms must promise to curtail output during the handful of hours each year when their production would overwhelm power lines and substations.

Flexible interconnection is a speedy way to get cheap new solar online without requiring utilities to spend even more on costly grid upgrades, which are a key driver of the nation’s fast-rising utility bills.

But U.S. utilities haven’t made use of the technique at any significant scale — until ComEd got its program off the ground late last year.

Since then, the utility has fast-tracked more than 50 megawatts of community solar projects using flexible interconnection, and more are likely to be approved before federal tax credits sunset in July.

That’s much faster than utilities in other states have been able to move on flexible interconnection, said Samantha Weaver, senior director of interconnection and grid integration policy at the Coalition for Community Solar Access, a trade group representing community solar developers. In fact, ComEd is “leading the country right now,” she said.

ComEd plans to accelerate that work, said Jessie Bauer, the utility’s senior manager of smart grid and innovation. “Our plan was to do 50 megawatts a year, and we’re hitting that cadence,” he said. “We’re proposing in our grid plan to go even faster, and do 100 megawatts a year, and get to 650 megawatts by 2031.”

The utility has previously committed to deploying 240 megawatts of distributed energy capacity by 2030 to meet its requirements under Illinois’ landmark 2021 climate law.

ComEd was able to succeed where other utilities haven’t thanks to a nudge from regulators that spurred it to collaborate with solar developers and environmental groups.

Historically, utilities and solar developers have struggled to establish the basic mutual trust required to move a flexible interconnection program forward, Weaver said. Utilities are often skeptical that solar farms will reliably cut back as promised during those key hours of potential grid overload. Meanwhile, solar developers suspect utilities will force them offline more than is absolutely necessary.

Illinois’ flexible interconnection process didn’t go that way.

Instead, in 2024 ComEd collaborated with environmental groups represented by the consultancy Eclipse on a flexible interconnection plan. Then, the utility worked out mutually agreeable solutions with those groups, solar developers, and the nonprofit collaborative the Charged Initiative, in a series of workshops that resulted in a program design that gave each side enough of what they needed to move ahead.

Both the utility and solar developers had to make some compromises, Weaver said. But that effort bore its first fruit last November, when 27 megawatts of community solar was green-lit in a region where it would have been excluded by traditional processes. Another 25 megawatts of projects were approved in February.

This coordinated approach is now gaining some momentum in Maryland, Massachusetts, New York, and other states where community solar is struggling, said Nikhil Balakumar, Eclipse’s CEO and founder.

“Now, more than ever, especially in this climate, we need unprecedented collaboration,” Balakumar said. “We can’t just slog it out and fight and litigate every little thing till the end of time. There has to be a new way forward.”

ComEd’s push into flexible interconnection was less a choice than a necessity.

Since 2016, Illinois has created and expanded programs that offer lucrative incentives to build community solar projects, which are generally limited to 5 megawatts or less. Households can subscribe directly to these projects, which often allow them to lock in cheaper, cleaner energy. The state’s programs are explicitly meant to reduce utility rates for low-income customers.

In Illinois, developers have flooded into the programs over the years, snapping up the most suitable land for community solar arrays.

This posed a problem for ComEd: Everyone wanted to build their solar arrays in the same relatively concentrated geographic area — the rural western reaches of its territory — where there simply wasn’t enough space on the grid.

“We quickly saw all that grid capacity evaporate with the community solar being connected,” Bauer said.

In a situation like this, the standard utility playbook is to require community solar developers to shell out for grid improvements. In western Illinois, that would mean multimillion-dollar system upgrades, he said — a cost that few solar developers can afford.

However, the grid actually does have the space to accommodate those solar farms — at least, most of the time.

Distribution grids are built to serve the times when electricity demand is at its highest. These peaks in demand are relatively rare, happening only during a handful of hours per day, or days per year. That means for the vast majority of the year, there’s unused capacity sitting there.

Flexible interconnection takes advantage of this fact — and helps developers and consumers avoid exorbitant grid upgrade costs as a result.

“If you can give up some of your energy during times of system constraints, you can interconnect much more affordably,” Bauer said.

But this is easier said than done. Utilities can’t perfectly predict how often demand will peak. They need flexibility to handle unexpected changes and respond to emergencies. A major storm or flood could knock out an entire substation for months, leaving other parts of the grid straining to supply power until it’s repaired.

That uncertainty makes utilities reluctant to set guaranteed limits on how often they’ll ask solar projects to curtail their generation. But for solar developers, “projects aren’t financeable if curtailment is unpredictable,” Weaver said. “We need certain details to be able to literally take to the bank.”

To resolve this conundrum, ComEd and solar developers collaborated on a compromise.

Solar developers calculated that they — and their investors — could bear having about 5% of their annual solar production curtailed. They conceded that ComEd couldn’t guarantee it would stick to that curtailment limit. But if the utility was willing to share historical data on how often its grid was likely to face overloads, developers could use that to convince those investors that the risk was worth taking.
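The arithmetic developers and investors were weighing can be sketched roughly as follows. This assumes hourly historical data on how much export room the grid had, and that any solar output above that room gets curtailed. The function, the toy data, and the simple threshold check are hypothetical illustrations, not ComEd’s actual method.

```python
CURTAILMENT_LIMIT = 0.05  # roughly 5% of annual production, per the developers' estimate


def curtailment_fraction(solar_mw: list[float], headroom_mw: list[float]) -> float:
    """Share of a project's output that would have been curtailed.

    Hypothetical sketch. Assumes hourly series: solar_mw is what the array
    would produce each hour; headroom_mw is how much export the grid could
    absorb that hour. Output above the headroom is treated as curtailed.
    """
    if len(solar_mw) != len(headroom_mw):
        raise ValueError("series must cover the same hours")
    total = sum(solar_mw)
    if total == 0:
        return 0.0
    curtailed = sum(max(0.0, s - h) for s, h in zip(solar_mw, headroom_mw))
    return curtailed / total


# Toy example: a 5 MW array over 10 sunny hours; grid room drops to 3 MW in 2 of them
solar = [5.0] * 10
headroom = [10.0] * 8 + [3.0] * 2
frac = curtailment_fraction(solar, headroom)
print(frac)                       # 0.08
print(frac <= CURTAILMENT_LIMIT)  # False: this toy site would exceed the ~5% budget
```

With years of real hourly data instead of ten toy hours, the same calculation gives investors an evidence-based estimate of curtailment risk, even without a guarantee from the utility.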

That wasn’t the solar industry’s initial ask, Balakumar noted. Solar developers started out asking for “some sort of fund that compensates us if you do go over 5%,” he said. But ComEd pays for the power it purchases by passing those costs on to its customers, and the prospect of charging customers for power that didn’t actually get onto the grid was a nonstarter for consumer advocates and regulators.

“We went in wanting a guarantee,” Weaver said. “But we came to the understanding that that wasn’t realistic and that we needed to give up a degree of certainty.”

Nor was it easy for ComEd to agree to share confidential data on its substations. Bauer said that process was helped along by community solar developers limiting what data they needed and how they would use it.

Already, the real-world data coming in from ComEd’s flexible interconnection projects could allow it to tighten curtailment expectations for future rounds of development, Bauer said. That could make community solar projects more lucrative to financial backers — and given that the alternative was to not be able to build them at all, or to wait for years for utility grid upgrades to plug them in, that’s better than nothing.

Regulated utilities like ComEd earn profits from the investments they make to expand or upgrade their power grids, not from connecting third-party solar projects. If anything, flexible interconnection exposes them to grid instability risks. Meanwhile, sharing data on how efficiently they utilize their grids can weaken the case for investing in moneymaking upgrades.

But in Illinois, policymakers and regulators forced ComEd’s hand.

Under the 2021 Climate and Equitable Jobs Act, ComEd and fellow utility Ameren Illinois must invest in their grids to improve customer affordability and meet state climate and clean energy goals. In 2023, the Illinois Commerce Commission rejected the initial grid modernization plans filed by ComEd and Ameren Illinois, citing shortcomings that included a lack of commitments to streamline interconnection of distributed energy resources like community solar systems.

That’s when Eclipse started working with the Environmental Law and Policy Center, the Environmental Defense Fund, the Natural Resources Defense Council, the Union of Concerned Scientists, Vote Solar, and other groups to get ComEd to the planning table, Balakumar said. The following year, these groups agreed to a memorandum of understanding with ComEd, which led to the joint plan submitted to regulators in late 2024.

ComEd then set up that workshop series with solar developers and environmental advocates over the course of 2025. That’s where parties hashed out their positions and came up with compromises that they could live with, Weaver said.

“To give credit where credit is due, the utility came with a lot of information and proposals they’d developed in advance for developers,” she said.

That included detailed information on the capabilities — and limits — of the utility’s technologies to make flexible interconnection possible, Bauer said. For example, one solar developer asked for hour-ahead forecasts of when the utility would curtail projects, he said. “We can do that in the future — in fact we plan to,” he said. But if ComEd had been forced to wait until it could warn solar projects that they would be curtailed an hour in advance, “we wouldn’t have launched this year — we would have launched in a year or two.”

ComEd also chose not to immediately incorporate all the different distributed energy resources that state law requires it to eventually handle, he said. “We were deliberate and focused on community solar, because we recognized that those were not only where the need was, but because those are the most technically sophisticated customers.”

The flexible and collaborative approach that ComEd and solar developers have undertaken stands in contrast to some much slower processes in other states. In California, for example, it took nearly four years between regulators ordering utilities to make flexible interconnection possible and finalizing the rules that allow it to happen — and California still hasn’t created a workable community solar program to make use of those rules.

But speed is of the essence as community solar developers rush to start their projects before July. That’s the deadline, set by the massive tax and spending package passed by Republicans in Congress last year, for achieving “safe harbor” status to earn tax credits. “Because of these changes happening in the tax credits, we realized we needed to move faster,” Bauer said.

Balakumar agreed that “to go from March workshops to a full-blown program in November for a utility is lightning speed.” But regulators and utilities in states with clean-energy and climate goals that haven’t moved as quickly are setting themselves up for even greater costs — and arguments over who’s going to pay for them — once the window for securing federal tax credits has closed, he said.

That’s not to say that other states can’t still learn from Illinois, he said. Take New York and Massachusetts, two states where Eclipse is closely involved in flexible interconnection work.

“We were in workshops in New York with Avangrid and National Grid,” two utilities serving upstate regions with a lot of community solar and grid constraints, Balakumar said. There, solar developers are “talking to banks and thinking about how they can get much more creative.” In January, National Grid filed a proposal to enable flexible interconnection at seven substations, each potentially hosting 30 to 60 megawatts of new projects.

And in Massachusetts, where utilities have struggled for years to connect more community solar projects, Eclipse has been involved in a workshop co-hosted with a state regulator–created interconnection working group, with the goal of jointly filing flexible interconnection proposals with National Grid and Eversource “as soon as possible this year,” he said.

Those utilities are actively expanding their grids to accommodate more community solar. But flexible interconnection could allow many projects to connect while deferring $239 million in proposed upgrades, Balakumar said in November 2025 testimony in a proceeding reviewing new grid investment proposals.

In March, ComEd engineers came to a Massachusetts flexible interconnection workshop to share their experience, according to Nick Burica, senior director of grid planning and interconnection engineering at community solar developer Nexamp.

Utilities have plenty of reasons to be leery of requests to operate their grids in this new and unfamiliar way, noted Burica, who previously led development of distributed energy engineering for ComEd. But when those kinds of objections arose, ComEd was “in the room,” able to say that “it will provide energy affordability, and you’ll be able to operate your system better,” he said.

“I was so happy to see ComEd come out and champion what can be done with flexible interconnection,” he said. “Getting people together — industry, utilities, and outside consultants — we’re starting to see the fruits of this labor.”

A community north of San Diego has blocked a major grid battery that a developer had hoped to build in a residential area near a major hospital.

Independent power producer AES Corp. withdrew its application to develop the Seguro battery system in Escondido, 30 miles from San Diego. The company had intended to fill a former horse ranch with 320 megawatts of battery containers, which would have been one of the most powerful stand-alone energy storage facilities in the country. The facility would have strengthened the Southern California grid late in the day, when solar generation fades and home consumption surges, pushing the state forward on its quest to produce 100% clean electricity by 2045.

But the development ran into a barrage of local opposition, as residents decried adding large-scale power infrastructure so close to homes and 1,600 feet from Palomar Medical Center Escondido, especially given the multiday, high-octane fires at other large batteries in the state. The outcome was a high-profile win for local battery opponents, and a warning sign to developers in famously pro-battery California.

AES said in a statement that it would prioritize other development efforts, but remained “committed to advancing projects that can provide the safe, reliable, and affordable power needed to strengthen the region’s electric grid and generate meaningful economic benefits locally.”

JP Theberge, a board member of the Elfin Forest Harmony Grove Town Council who rallied resistance to the battery, framed his neighbors as a victorious David smiting a corporate Goliath.

“The forces of greed are very powerful in this world. The only way to stop them is to be united and determined and forceful,” he wrote in a post on the Stop Seguro Facebook group. “This was a great win and we should all be proud of our efforts.”

AES is, to be sure, a profit-driven company, but the vast majority of U.S. energy infrastructure is built and operated by for-profit entities. What AES hoped to profit from was delivering a large amount of emissions-free grid capacity at a time when California desperately needs more of it — and when the nation as a whole is grasping for more power.

Opponents also called out the risks of large agglomerations of lithium-ion batteries, which have in certain circumstances gone into “thermal runaway,” when cells heat up and kick off ferocious blazes that spread to all the batteries within reach.

Escondido has experience with batteries. AES built a large-scale lithium-ion battery in 2017 that utility San Diego Gas & Electric owns and operates on its substation property. One battery container at that site caught fire in 2024 and prompted local evacuation orders that were lifted two days later. Two other California batteries loom even larger in the minds of the Stop Seguro advocates: An Otay Mesa battery, also in San Diego County, caught fire and then smoldered for 17 days in 2024, destroying one section of a larger facility. A Moss Landing project had a string of small fires, followed by a catastrophic one in January 2025, which forced the evacuation of the surrounding community.

Crucially, those two fires involved older models of battery cells, and a now-outdated design that packed them into buildings, which was like piling dry timber ahead of lighting a spark. Since then, battery cell technology has improved, and the industry has abandoned storing batteries in large buildings in favor of compartmentalized containers spread across a site. These designs are tested to make sure that a fire in one unit cannot reach surrounding units. Thus, a really bad failure could burn up one container but not produce the kind of dayslong conflagration seen in the highest-profile battery fires.

That’s where separating fact from fearmongering can get tricky. Otay Mesa and Moss Landing were approved by the necessary authorities, and then combusted in catastrophic fashion. Any community would be justified in wanting to prevent something like that from happening. At the same time, the physical causes of those massive blazes simply don’t exist in the battery projects that are getting built today.

Even so, Seguro would have pushed the boundaries in terms of proximity to homes and to the hospital. If one container started smoking, that could be enough to force the hospital’s patients and staff to either evacuate or shelter in place. This fact became pertinent to the case, because AES needed the hospital to sign off on running high-voltage wires through its property, which the hospital board voted down in 2024.

Generally speaking, though, recent battery failures have not put a stop to new battery construction.

Elsewhere in California, developer Arevon broke ground this year on a 250-megawatt battery in Daly City, just south of San Francisco. The battery fires in other parts of the state did not stop the company from securing permits and easements to connect to a nearby substation. That project will inject $73 million into the local tax base, and give San Francisco a major new source of on-demand power to carry it through heat waves and other moments of stress for the grid.

Indeed, batteries continue to set records for participation in California’s energy system. On a given spring day, they show up in force in the evening hours, displacing expensive, more polluting gas plants by shifting the day’s solar production to meet the hours of highest demand. On March 29 at 7 p.m., for instance, batteries delivered a record 44% of total electricity demand, with more than 12 gigawatts injected into the grid.

A clarification was made on April 9, 2026: This story was updated to clarify that the battery AES built at the utility substation has been owned and operated by San Diego Gas & Electric since 2017.

There’s nothing like a common enemy to bring people together. This midterm election year, that enemy may be data centers.

As AI grows more powerful and more popular, tech companies are rushing to build facilities that house all that computing capability — and to secure tons of power to run them. But no one knows exactly how many of those data centers will get constructed, and how much electricity they’ll need. That’s a problem for utility customers, who may be saddled with the costs and climate impacts of an unnecessary gas power and grid infrastructure buildout.

Some states are tackling the problem with what are known as large-load tariffs: essentially, special rates and requirements that force big power users to shoulder the costs of grid buildouts. But this week, a small city in Wisconsin put its foot down. Port Washington, a suburb of Milwaukee, voted by a roughly 2-to-1 margin to require that city leaders get voter approval before awarding tax breaks to data centers and other large development projects. It’s a clear response to the $15 billion OpenAI and Oracle megaproject that’s being built in the city, though this newly approved measure comes too late to affect that project.

At the federal level, Democrats have spearheaded most of the campaigns against data centers, with Sen. Bernie Sanders (I-Vt.) and Rep. Alexandria Ocasio-Cortez (D-N.Y.) even proposing a nationwide moratorium. But Port Washington is part of Ozaukee County, which has voted for Republicans over the past 20-plus years of elections.

Also this week, residents in Festus, Missouri, voted to oust every incumbent on their City Council, in large part because the decision-makers had approved a controversial $6 billion data center in the area. Jefferson County, where the city is situated, voted overwhelmingly for Republicans in the 2024 elections.

Indianapolis, meanwhile, saw a more violent reaction: Someone fired over a dozen bullets at City Council member Ron Gibson’s home on Monday, and left a note reading “No Data Centers” on the legislator’s doorstep. Gibson is a Democrat who has publicly supported a data center project in the city.

Across the U.S., more data center questions are on the ballot. Residents in Monterey Park, California, will determine in June whether to completely ban construction of the facilities. In the fall, Boulder City, Nevada, will vote on whether a municipally owned plot should host a data center, and residents in Janesville, Wisconsin, will decide whether to add more hurdles to a project turning a former General Motors plant into a data center.

At least 11 states are also considering legislation that would pause new data center construction, with a bill in Maine likely to be the first to become law.

City-level data center restrictions like Port Washington’s certainly don’t have the heft of state-level bans or even a nationwide moratorium, as far-fetched as its passage may be. But they do show that data center opposition is on the rise in every nook and cranny of the country — and it may have a massive influence on more than just local elections this November.

Clean Energy Team dominates Arizona utility election

Arizona voters this week selected a slate of candidates known as the Clean Energy Team to run the state’s largest public utility, the Salt River Project.

The winners may have Turning Point USA, the Charlie Kirk–founded conservative organization, to thank. The SRP delivers power and water to more than 1 million customers in the Phoenix area, and its leaders are chosen through an unusual election in which the number of acres a customer owns determines how many votes they can cast. These races typically don’t get much turnout, but this year, Turning Point stepped in to endorse candidates who supported converting retiring coal plants to gas. That prompted clean energy advocates, including the Sierra Club and actor and advocate Jane Fonda, to get involved.

All that attention definitely juiced turnout: This year’s election saw four times as many ballots as 2024’s, The New York Times reports. Two Turning Point–backed candidates did win races for SRP’s board presidency and vice presidency. But candidates who support clean energy swept the remaining races to take majority control of the board and double their representation on SRP’s advisory council.

Here’s where balcony solar is taking off

You’ve probably noticed that electricity bills are on the rise. If only curbing them were as easy as buying a portable solar panel and plugging it into your wall to generate your own clean power.

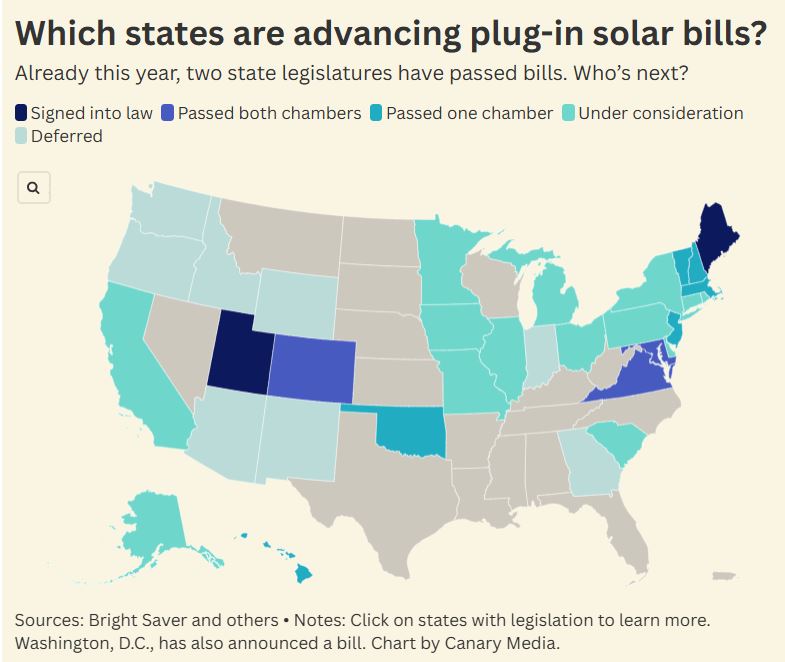

In two states, it pretty much is. This week, Maine joined Utah to become the second state to legalize the use of “balcony solar” panels, which can be plugged into standard outlets to help offset homeowners’ power usage. Other states may soon join them: Canary Media’s Sarah Shemkus has put together a map showing where bills to legalize balcony solar are in the works, with some needing only a governor’s signature to become law.

Whale, whale, whale: The Trump administration struck down Endangered Species Act protections for whales to unleash oil and gas development in the Gulf of Mexico — even though it has used unfounded claims about offshore wind’s risks to the creatures as an excuse to undermine that industry. (Canary Media)

Ceasefire’s energy impacts: Oil prices have fallen since the announcement of a two-week ceasefire between the U.S. and Iran, which analysts say may quickly translate into gasoline price relief for Americans. (The Hill, Axios)

New Jersey goes nuclear: New Jersey lifts its de facto ban on nuclear power construction, becoming the sixth state to reauthorize reactor development in the past decade and the second, after Illinois, to do so this year. (Canary Media)

Winds of change: The U.S. Interior Department quietly fails to appeal court decisions that allowed work to restart on offshore wind projects halted by the administration, an omission that experts say could be an early sign of hope for the industry. (Grist)

Digging clean heat: A geothermal heat pump system that’s heating and cooling a church just outside New York City could pave the way for similar projects in dense urban areas. (Inside Climate News)

Solar soars: A federal report shows rooftop solar now accounts for 20% of Puerto Rico’s energy production, surpassing natural gas to become the island’s second-largest generation source, behind petroleum liquids. (Utility Dive)

Renewables reign: In March, renewables generated more power than gas across a whole month, marking a first for the U.S. grid. (Canary Media)

California lawmakers face a make-or-break choice about the state’s biggest and most successful virtual power plant program: Give it enough money to keep running this summer or scrap it altogether.

The administration of California Gov. Gavin Newsom (D) has proposed ending the four-year-old Demand Side Grid Support program, which pays homes and businesses to send rooftop solar power back to the grid or reduce their energy use during times of peak electricity demand. DSGS has more than 1 gigawatt of capacity, making it one of the biggest VPPs in the country.

The proposal has set off alarm bells for environmental advocates and clean energy companies, which say that eliminating the program would be a costly mistake. And some state lawmakers briefed on the plan have questioned the logic of ending a program that’s successfully delivering grid relief.

DSGS backers argue that the program saves money not only for those who participate but also for all Californians, who face some of the highest utility rates in the country.

A study conducted by consultancy The Brattle Group and commissioned by Sunrun and Tesla Energy, two companies with large numbers of solar-and-battery-equipped customers enrolled in the program, indicates that “DSGS is a significantly lower-cost alternative” to relying on costly fossil gas–fired power plants or other resources available during grid emergencies.

In February, the Newsom administration’s Department of Finance issued two budget proposals regarding DSGS. One proposes ending DSGS, which is administered by the California Energy Commission, and shifting its customers to another program administered by the California Public Utilities Commission — either a current program that has been far less successful to date or one that has yet to be created.

For the past two years, environmental and clean energy groups have been fighting to protect DSGS from a series of funding cuts ordered by the Newsom administration, and have so far been unsuccessful. “California has already invested years of effort and hundreds of millions of dollars to build out DSGS. It’s a model now for clean reliability,” said Laura Deehan, state director of Environment California, one of the dozens of environmental advocacy groups that have signed a letter protesting the plan. “We have to make sure we keep the lights on on the program and not abandon what’s already been built up.”

A coalition of industry groups that have enrolled customers in DSGS echoed that view in a March letter to state lawmakers. It warned that “dissolving an existing successful program and attempting to re-create the same type of program at a different agency causes delays, wastes public resources, and has no assurances that it will be as successful.”

Environmental and industry groups are throwing their weight behind the Newsom administration’s other budget proposal, which would instead increase DSGS funding. This alternative calls for shifting money from another, underfunded distributed energy program to DSGS, bringing its funding for the coming year to roughly $53 million, up from the $26.5 million now remaining in its budget.

This is still short of the $75 million that backers have been asking for, said Caleb Weis, clean energy campaign associate at Environment California. But it should be enough to ensure enrolled customers are ready to help the grid through what’s expected to be a much hotter summer and fall season than the state has seen over the past two years, he said.

“The DSGS program kicks on when the primary alternative would be importing expensive energy from out of state or firing up expensive peaker plants that are dirty and cost money just sitting there, not being used,” he added. Meanwhile, DSGS “has clean assets that are ready to protect the California system during times of extreme stress and high cost. It’s almost a no-brainer to use this.”

Supporters of the proposal to end DSGS have been less vocal. While the state has underscored that DSGS was always meant to be temporary, few other justifications have been offered for ending the program before its original 2030 sunset date — and no major stakeholders have come out in support of that plan.

The conversation around DSGS is heating up ahead of key budget decisions. California must pass its 2026–2027 budget by June 15, and that budget must be finalized before Aug. 31. Sometime between now and that deadline, state lawmakers will be forced to decide on the future of the program.

Lawmakers raised concerns about the proposal to scrap DSGS during a March 5 hearing of the Senate Budget Subcommittee on Resources, Environmental Protection, and Energy at the state capitol.

“DSGS has largely been a successful program,” said Sen. Eloise Gómez Reyes, a Democrat who chairs the subcommittee. “Why is the administration proposing to start over?”

David Evans, a staff finance budget analyst at the state’s Department of Finance, responded that the “original vision and intent of the program was not allowed for it to be an indefinite, ongoing program.” He highlighted the state’s ongoing budget shortfall, which the Newsom administration had cited as the rationale for cutting DSGS funding in 2024 and 2025.

But Gómez Reyes pushed back on that justification, noting that the administration’s alternative proposal — shifting funds from elsewhere — could allow DSGS to successfully operate this year without impacting the budget.

“If something is successful, and it appears that this is a successful program, why don’t we continue … even if we intended it to be something that was temporary?” she said.

Gómez Reyes also questioned the wisdom of shifting DSGS participants to the California Public Utilities Commission, given the agency’s comparative lack of success in managing VPP programs.

Under the CPUC’s oversight, California’s biggest utilities have largely failed to follow through on the state’s decade-old policy imperative to incorporate rooftop solar systems, backup batteries, smart thermostats, and other distributed energy resources into how they manage their grids. California remains well short of current targets on that front.

DSGS has been the most successful of a set of programs, created in response to California's grid emergencies of 2020 through 2022, that use individual customers' devices to help the grid. Unlike those other programs, which are overseen by the CPUC and administered individually by the state's three biggest utilities, DSGS is credited with ease of enrollment, clear rules for participants, and availability to all state residents.

In particular, DSGS has been able to scale up and deliver grid relief much better than the Emergency Load Reduction Program, which the CPUC established in 2021.

Both programs enlist customers with batteries, EV chargers, smart thermostats, and other devices. But according to data provided by legislative staff for the March 5 hearing, while DSGS ended 2025 with an estimated 1,145 megawatts of peak load reduction enrolled — “enough to power the peak electricity demand for all of San Francisco” — ELRP has enrolled only about 190 megawatts. Its residential program was discontinued last year “due to very low cost-effectiveness.”

A recent test of both programs underscored once again the difference in scale. In July 2025, utilities measured how much solar-charged battery power capacity each program provided over the course of two consecutive hours.

The test delivered a total of 539 megawatts of capacity over that time. According to the Brattle Group’s analysis, roughly 476 megawatts of that capacity was provided by about 100,000 participants in the DSGS program — while only 64 megawatts came from ELRP participants.

Utility Pacific Gas & Electric lauded the test, noting that it “showed that home batteries can be counted on during peak demand.”

Sen. Catherine Blakespear, a Democrat, brought up the relatively poor performance of ELRP during the March 5 hearing. “It does seem like there are members of the legislature and stakeholders who really have a lot of confidence in DSGS and want it to continue, and that there’s a concern that ELRP is just not as effective,” she said. “We should focus back on the thing that’s already working and that might have a better chance of being successful.”

CPUC Executive Director Leuwam Tesfai noted at the hearing that ELRP isn’t the only alternative on the table. The budget proposal that would eliminate DSGS would also allow enrolled customers to join a new program administered by the CPUC. The agency has yet to create this new program but is actively exploring it as part of an ongoing proceeding scheduled to wrap up by the end of 2026, she said.

But Gómez Reyes replied that any work the CPUC might or might not undertake to create an alternative to ELRP wouldn't be finished until "after we have completed this budget. And that becomes a problem for us as we make our decisions."

It’s unclear how quickly state lawmakers and the Newsom administration will move to resolve these conflicts.

“It’s not out of the question that it goes through the end of August,” said Katelyn Roedner Sutter, California senior director at the Environmental Defense Fund, an environmental group that supports DSGS. “I hope it goes faster, because by the end of August is when we need to be drawing on some of these resources.”

Roedner Sutter also highlighted that the DSGS program is funded through taxpayer dollars. Most CPUC-administered programs, by contrast, are financed by authorizing utilities to pass on the costs of operating them to their customers.

“At a time when we’re trying to find ways to pay for these things outside of electricity bills, it makes less sense to move things over to the CPUC,” she said.

Sen. Josh Becker, a Democrat who authored a VPP bill that was vetoed by Newsom last year, told Canary Media that he would “strongly urge the administration to reconsider” ending the DSGS program and shifting its participants to a CPUC program. “[For] those in the legislature that have been focusing on this and care about this, it’s not a move any of us think is in the right direction.”

Becker highlighted that dozens of states are pursuing VPPs to make “better use of the clean energy resources that people already have in their homes to lower cost, to improve reliability, and to reduce pollution.” He has introduced another VPP bill in this legislative session that he said would instruct the CPUC to modify “rules that prevent these resources from participating fully in the market.”

Leah Rubin Shen, managing director at the trade group Advanced Energy United, said its member companies involved in DSGS support eventually shifting to a new program that might emerge from the kind of efforts that Becker and other lawmakers are proposing. But “you’ve got to make sure that everyone knows what the rules are, and that the rules aren’t going to change,” she said.

“DSGS has been a great program,” she said. “Keep it humming along for a few more years, until it’s supposed to be put to bed. And in the meantime, set up this market integration pathway that can funnel what we’ve learned from DSGS into something bigger and better.”

The Cow Palace arena, just south of San Francisco, has hosted Dwight Eisenhower, the Beatles, the San Jose Sharks NHL team, and an annual rodeo since it opened in 1941. But an even bigger act is setting up next door: an enormous battery that will perform a starring role in the Bay Area’s energy ecosystem.

Developer Arevon has begun construction of the Cormorant Energy Storage Project, which will occupy an 11-acre vacant lot just southwest of the Cow Palace in Daly City. The battery facility will be large by industry standards: 250 megawatts of Tesla Megapack containers that can discharge for four hours straight, for 1 gigawatt-hour of total stored energy. Bigger batteries have been built, but when Cormorant comes online in about a year, it is poised to become the country's largest battery nestled within a major urban area.

Arevon has contracted the battery for 15 years of use by MCE, one of California’s biggest community choice aggregators — entities that purchase electricity on behalf of local residents as an alternative to Wall Street–owned for-profit utilities. The state requires MCE to buy grid capacity commensurate with its members’ usage, and the Cormorant project will fulfill 10% of this annual requirement, known as resource adequacy in California bureaucratese.

MCE has become a major force in the greater Bay Area: It now serves all of Marin and Napa counties, most of Contra Costa, and half of Solano. The aggregator can contract for power plants across California, but it looks for sites within or near its service territory when possible, said Jenna Tenney, MCE’s director of communications and community engagement.

“Having a storage project in a community is going to add to resiliency in that community,” she said. The battery will bring $73 million of property tax revenue to Daly City, she added, and Arevon will donate $1.5 million in community benefits.

Cities need power, but generating it within urban cores is a difficult feat. California has effectively stopped building gas-fired power plants, and even if that were still an option, sticking a smokestack in San Francisco wouldn't fly. These days, California expands generation by building large-scale solar plants in wide-open spaces, but those plants need to ship their power over many miles of transmission lines to reach the cities where it gets consumed.

The Cormorant battery provides something new: a dense source of on-demand power that can slip into the urban fabric without creating local air pollution, absorbing far-off solar generation at midday and discharging it later at night. Arevon CEO Justin Johnson estimated that the battery, which will sit on the site of a former drive-in movie theater, could cover the electricity needs of some 321,000 homes for four hours straight.

“It couldn’t keep the whole city going, but it certainly, without a doubt, increases the reliability of the grid in that area in a substantial way,” he said.

Arevon didn’t jump to the highest echelon of energy storage development from nothing. The firm has invested $11 billion in projects and owns 6 gigawatts of solar and battery installations operating across 18 states.

The company launched in 2021 as a spinout of Capital Dynamics, a private equity fund that amassed an early portfolio of energy storage assets. Arevon is owned by the California State Teachers' Retirement System, Dutch pension fund APG, and the Abu Dhabi Investment Authority. Those investors seek steady, long-term growth, and their patience lets Arevon build and own batteries for the long haul instead of building them to flip to other buyers.

“When we’re in there developing assets in the community, we can tell them, hey, we’re going to be here a long time,” said Johnson, who stepped up from COO to CEO in March. “You’re incentivized to engineer it well, construct it well, operate it well.”

Arevon focused on the Daly City location because electricity price volatility tends to be highest in proximity to major consumption, Johnson said. Places like that — whether metro areas or large industrial hubs — see the greatest swings from peak to off-peak hours, and having battery facilities to arbitrage between those times should push prices down in the long run. But building within a city comes with obvious trade-offs.

“Siting any infrastructure, whether you’re putting in a Walmart or upgrading an intersection or doing anything in a high-density area, is tough … especially so for power plants or facilities like this,” he noted.

Tough but not impossible, as Arevon proved in San Diego’s Barrio Logan community with its Peregrine project (another entry in a portfolio of projects sporting avian nomenclature), which came online last year. There, the company squeezed 200 megawatts of batteries between a naval shipyard and a light-rail track, in the shadow of the Coronado Bridge. In Daly City, Arevon will need to carve through roughly a mile of streets to run high-voltage cable underground to the nearest substation.

Such projects "reduce your lifespan a little bit" from the stress, Johnson said, but once they're built, the intrinsic difficulty becomes a sort of strategic moat. If a competitor wanted to open up next door to the Cow Palace, well, it probably couldn't find a viable space.

“Those are assets I’m really proud to own, and I think they’ll become just more and more valuable over time, because they’re hard to replace,” Johnson said.

To achieve that longevity, the batteries need to survive, and that cannot be taken for granted: the site lies 90 miles north of Moss Landing, where the largest battery fire yet broke out a little over a year ago. Safety concerns are understandably higher in dense urban areas, so assuring the community that a Moss Landing–style disaster won't happen here was integral to securing permits.