The startup Sortera Technologies has raised fresh funding to expand its tech-driven recycling operations — with an eye toward meeting rising U.S. demand for low-carbon aluminum.

Sortera uses advanced sensors and artificial intelligence to sort different types of aluminum found in old car parts and appliances. On Thursday, the company said it raised $45 million to fuel its next phase of growth. Investors in the round include global investment firm T. Rowe Price Associates, venture capital fund VXI Capital, and Yamaha Motor Ventures, an arm of the Japanese manufacturer.

Sortera’s flagship facility in Markle, Indiana, currently processes about 100 million pounds of shredded metal per year to recover specific alloys — blends of aluminum that contain other elements to make them stronger and more durable. With the new investment, the startup plans to build a second plant next year, in Lebanon, Tennessee, to double its capacity to pick through gleaming scrap heaps.

The expansion comes as the United States is racing to shore up supplies of aluminum.

Part of that is driven by the Trump administration’s increased tariffs on imports of aluminum and steel, which have put pressure on U.S. manufacturers to produce more metal. Automakers are also using more lightweight aluminum instead of steel, including in battery-powered cars and hulking Ford F-150 pickup trucks. Data-center developers need more of the metal for their buildings and the technology inside, while some buyers are looking specifically for lower-carbon aluminum to meet decarbonization goals.

The U.S. is now playing catch-up. America’s production of new, primary aluminum has declined significantly in recent decades, and while plans are underway to build two new smelters, neither is expected to be fully on line this decade. Both projects will also need to secure huge amounts of cheap — and ideally clean — electricity at a time when that’s hard to come by.

Recycling aluminum, on the other hand, requires only about 5% of the energy that’s needed to produce the metal in power-hungry smelters. As a result, it’s generally a faster, cheaper, and lower-carbon way of making aluminum products.

“The domestic market is hungry for sustainable, high-quality recycled aluminum,” said Michael Siemer, Sortera’s CEO.

The country’s use of scrap will climb even higher once two new rolling facilities — which shape aluminum into plates, sheets, and coils — ramp up production. Steel Dynamics rolled its first hot coils at a $1.9 billion plant in Mississippi this summer. Novelis, which is partnering with Sortera to use the startup’s rescued aluminum, is slated to bring its $2.5 billion facility on line in Alabama later next year.

“For them to be green, they each are going to need an additional billion pounds of scrap aluminum,” Siemer estimated.

Despite the growing domestic appetite for aluminum, much of what the country recycles still gets exported overseas, particularly when it’s lumped together with other metals like copper, brass, and titanium. Magnets can easily pull out pieces of steel from scrap piles, but aluminum alloys are tricky to sort. That leaves behind roughly 18 billion pounds of mixed-metal material per year, about 10 billion pounds of which contains aluminum alloys, according to Sortera.

“We generally scoop it up, put it into ships, and send it to Southeast Asia,” where the metals are sorted by hand, Siemer said of the industry’s approach. “Or it’s made into low-value products in America, where you can melt the aluminum down” with the other metals, he added, likening the process to melting a box of colorful crayons into a functional, but less desirable, brown soup.

Sortera’s founders, Nalin Kumar and Manuel Garcia, launched the company in 2020 to introduce more precision and automation to this sorting process. After spinning out of an Advanced Research Projects Agency–Energy program that focused on recycling metals for lightweight vehicles and aircraft, the startup raised money from firms including Chrysalix Venture Capital and the Bill Gates–affiliated Breakthrough Energy Ventures. Sortera said the funding announced this week brings its total investment to about $120 million.

Other early-stage companies are working on new ways to pluck recyclable materials out of the gobsmacking amounts of garbage we generate every day. Greyparrot, for example, has developed AI camera systems that recycling firms can install to track aluminum cans, glass bottles, and plastic packaging as they move down conveyor belts. The startup Amp uses software-driven robotic systems inside its own plants to automatically sort materials.

But Sortera handles only scrap metal, and it hunts for only specific types of high-quality aluminum alloys — ones that manufacturers like Novelis are typically willing to pay more for. The company’s Indiana facility can also process scrap at high enough volumes to justify handling it domestically, Siemer said.

“They’re getting into a really interesting niche,” said Parker Bovée, who leads waste and recycling research for the consulting firm Cleantech Group. “If you can get pure sorted alloys, then you know exactly what you’re dealing with,” which makes the metal more valuable to the companies turning it back into car frames, engine blocks, or complex metal parts.

Bovée said that from an investment standpoint, he considers Sortera’s approach to be higher risk than a software-only solution, since it involves spending more capital to build facilities and machinery. Waste management in general “is a difficult industry to break into and make substantial inroads,” he said. But Sortera’s ability to capture sought-after alloys “makes them very impressive.”

Siemer added that Sortera will eventually use its technology to sort the other metals found in shredded scrap piles. But for now, he said, “We’re building a business on the aluminum.”

Brookfield Renewable Partners has signed yet another deal to power a tech giant’s data centers with one of its existing hydroelectric plants, heralding a potential lifeline for America’s aging dams.

In its quarterly earnings call with investors this month, Brookfield said it had signed a 20-year contract with Microsoft “at one of our hydro facilities” in the nation’s largest grid system, PJM Interconnection.

The deal is part of a broader agreement, announced last year, to supply Microsoft’s data centers with 10.5 gigawatts of renewable electricity. But it’s the first contract under that framework to support a specific hydroelectric facility. Brookfield declined to disclose which of its dams is part of the deal. Near Lancaster, Pennsylvania, the company operates at least two stations with a combined capacity of nearly 700 megawatts in PJM’s 13-state territory. On the earnings call, Brookfield suggested it may acquire a third plant in the grid system.

The move comes nearly four months after Brookfield signed the biggest deal for hydropower in history: a $3 billion agreement to supply Google’s data centers with up to 3 gigawatts of power for the next two decades.

It also comes at a make-or-break moment for the U.S. hydropower sector, which is one of the few forms of always-on, carbon-free energy available in a country clamoring for clean electrons. Most projects are decades old and will have to undergo relicensing processes over the coming years.

Both of Brookfield’s hydroelectric facilities in Pennsylvania — the 252-megawatt Holtwood Hydroelectric Project, first opened in 1910, and the nearly 418-megawatt Safe Harbor Hydroelectric Project, built in the early 1930s — are up for relicensing in the next five years.

As part of the Google and Microsoft deals, Brookfield said it was able to “upfinance” both facilities, a term that typically describes when private equity companies refinance an existing loan and borrow more money on top of the remaining balance. That could be an indicator that the data center deals are helping Brookfield fund the upgrades and other requirements needed to obtain new operating licenses.

“We continue to evaluate the opportunity to acquire hydro [plants] which would fit well within our portfolio,” Connor Teskey, president of Brookfield Asset Management, said on the earnings call.

Nearly 450 hydroelectric stations totaling more than 16 gigawatts of power-producing capacity are slated for relicensing across the U.S. over the next decade. That’s roughly 40% of the nonfederal fleet (the government owns about half the country’s hydropower facilities).

The relicensing process for hydropower is uniquely onerous, involving multiple federal, state, and local regulators. Some power plant owners and advocates have accused regulators of using the process to try to squeeze the facilities for additional benefits, such as paying for roads or infrastructure unrelated to a dam itself, which owners say they can’t afford. Faced with relicensing, some stations have simply shuttered, their owners deciding it’s easier to surrender their permits than to make costly upgrades and regional investments needed to win support.

“This is major infrastructure. These facilities cost billions of dollars,” Malcolm Woolf, the National Hydropower Association’s chief executive, previously told Canary Media. “They’re like bridges and roads. They get a license for 50 years. The state agencies view [the relicensing process] as an opportunity to extract concessions from what they view as a deep pocket.”

In the 1970s, he added, “maybe the industry was a deep pocket.”

“But now,” Woolf said, “with the low cost of other fuels like wind and solar and gas, it’s driving these facilities to bankruptcy and to surrender licenses.”

Due north of Chattanooga, a power line runs through a wooded tract called Sale Creek before it dead-ends at the Tennessee River. On Oct. 8, this line lost power. But the lights stayed on for nearly 400 customers because Sale Creek has a new tool to neutralize outages.

Chattanooga’s municipal utility, EPB, had installed a Tesla Megapack battery system on this lonely stretch of the distribution grid back in June. If anything knocked out the line, residents would have 2.5 megawatts/10 megawatt-hours of storage capacity at their disposal while crews fixed the problem.
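The two figures describe different things: megawatts measure how fast the battery can deliver power, and megawatt-hours measure how much energy it holds. A minimal Python sketch of the relationship follows. It assumes the battery discharges at full rated power with no losses or reserve margin, which the article does not state, and the function name is illustrative only.

```python
def full_power_duration_hours(power_mw: float, energy_mwh: float) -> float:
    """Hours a battery can discharge at its rated power before it is empty.

    Simplifying assumption (not from the article): no conversion losses
    and no energy held back in reserve.
    """
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return energy_mwh / power_mw


# Figures from the article: 2.5 MW of power, 10 MWh of energy.
sale_creek_hours = full_power_duration_hours(2.5, 10.0)
print(sale_creek_hours)  # 4.0
```

In practice the neighborhood would likely draw less than the full 2.5 megawatts, so the battery could last longer than this figure suggests.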

In this case, utility workers unexpectedly needed to de-energize the line to finish making repairs. EPB was able to switch the neighborhood over to battery power for about half an hour until the job was done. Without the battery, EPB would have had to tell its customers it was cutting off their power on purpose.

“This was the first time we used it in an outage situation,” said Ryan Keel, president of the energy and communications business unit at EPB. “In the future, it’ll be even more unplanned. It’ll be a response to a tree falling through the line or a car hitting a pole or something.”

EPB, which serves some 500,000 people across 600 square miles, plans to roll out more targeted, resilience-oriented batteries to other outage-prone stretches of its grid. The nonprofit public power company currently has a 45-megawatt fleet of batteries, almost all of which were built this year. Besides keeping the lights on, they save money for the whole customer base by lowering the utility’s peak electricity consumption.

The United States is racing toward yet another record year of grid battery construction, as power companies tap lithium-ion batteries to store solar power, improve grid reliability, and free up capacity for new data centers. Most of these batteries are getting installed in California and Texas, where they’ve pushed down wholesale prices and banished heat wave–induced power shortages. Utilities elsewhere, though, too often bide their time in exhaustive studies of the technology, which is new by their standards, despite its mass deployment in some regions.

But batteries are starting to catch on in Tennessee: The Tennessee Valley Authority, the federal entity that generates electricity for EPB and scores of other local power companies, just committed to build 1.5 gigawatts of grid batteries across its territory by the close of 2029, its largest battery deployment by far. The TVA board approved this in its November meeting, setting the stage for the utility to solicit competitive bids from battery developers, spokesperson Scott Fiedler told Canary Media.

And although Chattanooga’s battery buildout is far smaller than what’s happening farther west, or even the installations planned by TVA, it shows how a responsive local utility can adopt new clean-energy technology to make life a little better for its customers. It doesn’t take a massive R&D budget or piles of cash from Wall Street shareholders — just a willingness to embrace a readily available technology.

EPB had explored batteries for years. It researched them with the Department of Energy and Oak Ridge National Laboratory, located 100 miles northeast of Chattanooga. The utility then moved beyond research and installed a solar-and-battery microgrid at the Chattanooga Airport, learning how to work with the technology in practice.

Building on that experience, EPB leaders took a new look at batteries after Winter Storm Elliott rocked the region just before Christmas 2022, leaving TVA short on supply as households cranked their electric heating. For the first time since its founding in 1933, the TVA had to cut power to its customers in order to avoid damaging the grid infrastructure. So it told local power companies that they had to reduce demand by a certain amount.

“That event shaped our strategy,” Keel said. “We want to deploy a large amount [of batteries], because it gives us some local insulation from what may be happening on the TVA system that could impact our customers.”

Homes in TVA’s territory use a lot of electric heating and cooling, which drives grid peaks in both winter and summer. Typical hot summer and cold winter peaks for EPB reach 1,200 megawatts of demand, Keel said, but the utility set a demand record above 1,300 megawatts this January.

That means the current battery fleet meets just a small percentage of the total peak demand — enough to help on the margins, but pretty limited in its impact. Keel said his strategy is to raise that capacity to around 150 megawatts.

“Our hope is that if TVA calls for a 10% required reduction of our load, we can achieve that completely with the battery systems that we’ve put in, and we don’t need to do any unplanned outages to customers at all, like we had to” during Winter Storm Elliott, Keel said.
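The arithmetic behind that goal can be checked with the figures Keel cited. The Python sketch below is a rough illustration, not EPB's planning method. It assumes the 10% reduction is measured against the peak load and that the batteries can hold their rated output for as long as the curtailment lasts, neither of which the article specifies.

```python
# Figures quoted in the article, in megawatts.
CURRENT_FLEET_MW = 45
TARGET_FLEET_MW = 150
TYPICAL_PEAK_MW = 1200
RECORD_PEAK_MW = 1300


def share_of_peak(fleet_mw: int, peak_mw: int) -> float:
    """Fraction of peak demand the battery fleet could serve at rated power."""
    return fleet_mw / peak_mw


def covers_reduction(fleet_mw: int, peak_mw: int, percent: int) -> bool:
    """True if the fleet's rated power is at least `percent` of peak load.

    Integer arithmetic keeps the comparison exact at the boundary.
    """
    return fleet_mw * 100 >= percent * peak_mw


print(f"{share_of_peak(CURRENT_FLEET_MW, RECORD_PEAK_MW):.1%}")  # 3.5%
print(f"{share_of_peak(TARGET_FLEET_MW, RECORD_PEAK_MW):.1%}")   # 11.5%
print(covers_reduction(CURRENT_FLEET_MW, RECORD_PEAK_MW, 10))    # False
print(covers_reduction(TARGET_FLEET_MW, RECORD_PEAK_MW, 10))     # True
```

Under those assumptions, a 10% cut at the record peak works out to 130 megawatts, which the current 45-megawatt fleet cannot cover and a 150-megawatt fleet could.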

That battery strategy is akin to an insurance policy, responding to the concerning frequency of polar vortices and extreme heat in recent years. But the batteries don’t just sit around waiting for record cold snaps or heat waves. When the batteries aren’t acting as local backup, EPB puts them to work to save money for all customers.

When EPB buys power from TVA, it pays a demand charge for the hour of highest consumption each month. By discharging the batteries when it looks like a peak hour is approaching, EPB can shave its monthly charge. That lowers the amount it pays to TVA, which puts downward pressure on utility bills for Chattanooga residents.
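The peak-shaving idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not EPB's dispatch logic: the hourly loads and the threshold are invented for the example, the 180 megawatt-hours of stored energy assumes the 45-megawatt fleet holds four hours of output (the ratio of the Sale Creek unit, not a fleet figure from the article), and the utility is assumed to know in advance which hours will be highest.

```python
def shave_peak(hourly_load_mw, threshold_mw, power_mw, energy_mwh):
    """Discharge a battery whenever load exceeds a threshold.

    Each step is one hour, so a discharge of X MW uses X MWh.
    Returns the load the utility would draw from its supplier each hour.
    """
    remaining_mwh = energy_mwh
    shaved = []
    for load in hourly_load_mw:
        excess = max(0.0, load - threshold_mw)
        # Discharge is limited by rated power and by the energy left.
        discharge = min(excess, power_mw, remaining_mwh)
        remaining_mwh -= discharge
        shaved.append(load - discharge)
    return shaved


# Hypothetical afternoon on a peak day, in MW (not data from the article).
loads = [900.0, 1000.0, 1150.0, 1200.0, 1180.0, 1000.0]

# 45 MW is the fleet size in the article; 180 MWh is an assumption.
shaved = shave_peak(loads, threshold_mw=1160.0, power_mw=45.0, energy_mwh=180.0)

print(max(loads))                # 1200.0
print(max(shaved))               # 1160.0
print(max(loads) - max(shaved))  # 40.0
```

Because the demand charge is set by the single highest hour, the 40-megawatt reduction in this made-up example is what would lower the monthly bill. Discharging in hours below the peak would not change it.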

“We make our decisions based on community benefit,” said J. Ed. Marston, EPB’s vice president for strategic communication. “The more we can keep our costs down operationally, the more we can avoid having to do electric rate increases that impact our customers.”

This dynamic parallels the way Vermont utility Green Mountain Power pays for a program that helps customers install home batteries: The utility dispatches all the small-scale batteries to reduce its peak-demand charges to the New England grid operator.

EPB expects to get payback on its battery installations within five years from the reliability and peak-demand uses. The utility has elected not to run the batteries on a daily basis, because the wear and tear that frequent cycling puts on batteries offsets the benefit of short-term savings on energy charges. (TVA territory doesn’t have wholesale markets that let batteries bid in for various services to make money.)

EPB’s battery buildout puts it ahead of many bigger peers, in both absolute and relative terms.

It’s part of a pattern of the municipal utility embracing new technology to help its residents.

Perhaps most strikingly, the nonprofit installed fiber internet in city homes in 2009, before for-profit telecom providers were widely offering it. EPB became the first company to sell gig-speed internet to an entire community network, Keel said. (Current monthly rate for 1-gig Wi-Fi: an envy-inducing $67.99.)

That fiber also improves the efficiency of the electric grid: EPB piggybacked on the fiber to upgrade its grid network to advanced metering infrastructure, which sends real-time information to the utility and allows it to respond instantly to issues. EPB won accolades for the number of “smart grid” automated devices on its high-voltage distribution system per mile or per customer, Keel said.

“EPB has been incredibly impressive and forward-thinking and on the leading edge — sometimes maybe even on the bleeding edge — of technology innovation, all in the spirit of working for the benefit of their customers,” said Matt Brown, regional vice president for the Tennessee Valley at Silicon Ranch, the major solar developer based in Nashville.

Silicon Ranch is working with EPB on a different kind of money-saving clean-energy project. A large-scale solar project in West Tennessee will produce 33 megawatts for EPB as part of TVA’s Generation Flexibility program, which lets local power companies generate up to 5% of their annual demand. The project is slated to be operating by mid-2028.

That solar development will be located outside EPB’s territory, where there’s more land available. So it won’t be able to help with local reliability in Chattanooga, the way that the community batteries do. But it will generate power at cheaper rates than those of TVA, which itself has cheaper rates than most U.S. utilities, meaning that EPB can pass those savings to its customers.

“Prices are going up on everything from food to energy to housing. This provides them comfort to be able to have some rate stability and flexibility,” Brown said.

On a dry, rocky patch of his family’s farm in Door County, Wisconsin, Dave Klevesahl grows wildflowers. But he has a vision for how to squeeze more value out of the plot: lease it to a company that wants to build a community solar array.

Unfortunately for Klevesahl, that is unlikely to happen under current state law. In Wisconsin, only utilities are allowed to develop such shared solar installations, which let households and businesses that can’t put panels on their own property access renewable energy via subscriptions.

Farmers, solar advocates, and legislators from both parties are trying to remove these restrictions through Senate Bill 559, which would allow the limited development of community solar by entities other than utilities.

Wisconsin lawmakers considered similar proposals in the 2021–2022 and 2023–2024 legislative sessions, with support from trade groups representing real estate agents, farmers, grocers, and retailers. But those bipartisan efforts failed in the face of opposition from the state’s powerful utilities and labor unions.

Community solar supporters are hoping for a different outcome this legislative session, which ends in March. But while the new bill, introduced Oct. 24, includes changes meant to placate utilities, the companies still firmly oppose it.

“I don’t really understand why anybody wouldn’t want community solar,” said Klevesahl, whose wife’s family has been farming their land for generations. In addition to leasing his land for an installation, he would like to subscribe to community solar, which typically saves participants money on their energy bills.

Some Wisconsin utilities do offer their own community solar programs. But they are too small to meet the demand for community solar, advocates say.

Around 20 states and Washington, D.C., have community solar programs that allow non-utility ownership of arrays. The majority of those states, including Wisconsin’s neighbor Illinois, have deregulated energy markets, in which the utilities that distribute electricity do not generate it.

In states with “vertically integrated” energy markets, like Wisconsin, utilities serve as regulated monopolies, both generating and distributing power. That means legislation is necessary to specify that other companies are also allowed to generate and sell power from community solar. Some vertically integrated states, including Minnesota, have passed such laws.

But monopoly utilities in those jurisdictions have consistently opposed community solar developed by third parties. Minnesota utility Xcel Energy, for example, supported terminating the state’s community solar program during an unsuccessful effort by some lawmakers last summer to end it.

The Wisconsin utilities We Energies and Madison Gas and Electric, according to their spokespeople, are concerned that customers who don’t subscribe to community solar will end up subsidizing costs for those who do. The utilities argue that because community solar subscribers have lower energy bills, they contribute less money for grid maintenance and construction, meaning that other customers must pay more to make up the difference. Clean-energy advocates, for their part, say this “cost shift” argument ignores research showing that the systemwide benefits of distributed energy like community solar can outweigh the expense.

The Wisconsin bill would also require utilities to buy power from community solar arrays that don’t have enough subscribers.

“This bill is being marketed as a ‘fair’ solution to advance renewables. It’s the opposite,” said We Energies spokesperson Brendan Conway. “It would force our customers to pay higher electricity costs by having them subsidize developers who want profit from a no-risk solar project. Under this bill, the developers avoid any risk. The costs of their projects will shift to and be paid for by all of our ‘non-subscribing’ customers.”

The power generated by community solar ultimately goes onto the utility’s grid, reducing the amount of electricity the utility needs to provide. But Conway said it’s not the most efficient way to meet overall demand.

“These projects would not be something we would plan for or need, so our customers would be paying for unneeded energy that benefits a very few,” he said. “Also, these credits are guaranteed by our other customers even if solar costs drop or grid needs change.”

Advocates in Wisconsin hope they can address such concerns and convince utilities to support community solar owned by third parties.

Beata Wierzba, government affairs director of the clean-power advocacy organization Renew Wisconsin, said her group and others “had an opportunity to talk with the utilities over the course of several months, trying to negotiate some language they could live with.”

“There were some exchanges where utilities gave us a dozen things that were problematic for them, and the coalition addressed them by making changes to the draft” of the bill, Wierzba said.

The spokespeople for We Energies and Madison Gas and Electric did not respond to questions about such conversations.

To assuage utilities’ concerns, the bill allows third-party companies to build community solar only for the next decade. The legislation also sets a statewide cap for community solar of 1.75 gigawatts, with limits for each of the five major investor-owned utilities’ territories proportionate to each utility’s total number of customers.

Community solar arrays would be limited to 5 megawatts, with exceptions for rooftops, brownfields, and other industrial sites, where 20 megawatts can be built.

No subscriber would be allowed to buy more than 40% of the output from a single community solar array, and 60% of the subscriptions must be for 40 kilowatts of capacity or less, the bill says. This is meant to prevent one large customer — like a big-box store or factory — from buying the majority of the power and excluding others from taking advantage of the limited community solar capacity.
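A short Python sketch shows how the size and subscriber limits described above might be checked for a single array. It is an illustration, not the bill's legal test. It assumes the 60% rule is counted by number of subscriptions (the bill may measure it differently), and the function and parameter names are hypothetical.

```python
def check_array(array_kw, subscriptions_kw, exempt_site=False):
    """Return a list of rule violations for one community solar array.

    array_kw:         array capacity in kilowatts
    subscriptions_kw: capacity of each subscription in kilowatts
    exempt_site:      True for rooftops, brownfields, and other industrial
                      sites, where the article says 20 MW is allowed
    """
    problems = []

    size_limit_kw = 20_000 if exempt_site else 5_000
    if array_kw > size_limit_kw:
        problems.append("array exceeds size limit")

    if not subscriptions_kw:
        return problems

    # No single subscriber may take more than 40% of the output.
    if max(subscriptions_kw) * 100 > 40 * array_kw:
        problems.append("one subscriber holds more than 40%")

    # Assumption: at least 60% of subscriptions, by count, are 40 kW or less.
    small = sum(1 for kw in subscriptions_kw if kw <= 40)
    if small * 100 < 60 * len(subscriptions_kw):
        problems.append("fewer than 60% of subscriptions are 40 kW or less")

    return problems


# Hypothetical subscriber mixes for a 5 MW array (not from the bill).
print(check_array(5_000, [2_000, 40, 40, 30, 25, 10]))  # []
print(check_array(5_000, [2_500, 40, 30]))
# ['one subscriber holds more than 40%']
```

In the first example, a large customer takes exactly 40% of the array and the remaining subscriptions are small, so the array passes both tests as interpreted here.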

Customers who subscribe to community solar would still have to pay at least $20 a month to their utility for service. The bill also contains what Wierzba called an “off-ramp”: After four years, the Public Service Commission of Wisconsin would study how the program is working and submit a report to the legislature, which could pass a new law to address any problems.

“The bill is almost like a small pilot project — it’s not like you’re opening the door and letting everyone come in,” said Wierzba. “You have a limit on how it can function, how many people can sign up.”

In Wisconsin, as in other states, developers hoping to build utility-scale solar farms on agricultural land face serious pushback. The Trump administration canceled federal incentives for solar arrays on farms this summer, with U.S. Department of Agriculture Secretary Brooke Rollins announcing, “USDA will no longer fund taxpayer dollars for solar panels on productive farmland.”

But Wisconsin farmers have argued that community solar can actually help keep agricultural land in production by providing an extra source of revenue. The Wisconsin Farm Bureau Federation has yet to weigh in on this year’s bill, but it supported previously proposed community solar legislation.

The bill calls for state regulators to come up with rules for community solar developers that would likely require dual use — meaning that crops or pollinator habitats are planted under and around the panels or that animals graze on the land. These increasingly common practices are known as agrivoltaics.

The bill would let local zoning bodies — rather than the state’s Public Service Commission — decide whether to permit a community solar installation.

Utility-scale solar farms, by contrast, are permitted at the state level, which can leave “locals feeling like they are not in control of their future,” said Matt Hargarten, vice president of government and public affairs for the Coalition for Community Solar Access. “This offers an alternative that is really welcome. If a town doesn’t want this to be there, it won’t be there.”

A 5-megawatt array typically covers 20 to 30 acres of land, whereas utility-scale solar farms are often hundreds of megawatts and span thousands of acres.

“You don’t need to upgrade the transmission systems with these small solar farms, because a 30-acre solar farm can backfeed into a substation that’s already there,” noted Klevesahl, a retired electrical engineer. “And then you’re using the power locally, and it’s clean power. Bottom line is, I just think it’s the right thing to do.”

The Trump administration has ordered the J.H. Campbell coal-fired power plant in Michigan to stay open for the next 90 days, citing an energy “emergency” that state utility regulators and regional grid operators say does not exist.

It’s the latest move in the administration’s expanding agenda to force aging and costly coal plants to keep running, despite warnings from energy experts and lawmakers that doing so will burden Americans with billions of dollars in unnecessary energy costs and environmental harms.

Tuesday’s emergency order from the Department of Energy was anticipated by Consumers Energy, the utility that owns and operates the 63-year-old facility. It’s the third such emergency order for the plant; the DOE issued its first directive in May, one week before the plant was scheduled to be retired, and then re-upped the decision in August.

State attorneys general are challenging the DOE’s authority to keep J.H. Campbell running. Environmental groups have also filed lawsuits to try to block the DOE’s emergency must-run orders for the J.H. Campbell plant, as well as similar directives for another fossil-fueled power plant in Pennsylvania. Opponents say the move is a blatant attempt to prop up a dying industry at the expense of everyday utility customers.

“The costs of unnecessarily running this jalopy coal plant just continue to mount. Coal is more expensive than modern resources like wind, solar, and batteries,” Michael Lenoff, a senior attorney with Earthjustice who’s leading litigation by nonprofits challenging the DOE’s stay-open orders, said in a Wednesday statement.

Consumers Energy reported in an October earnings call that the cost of keeping the plant running past its planned closure had added up to $80 million, which is more than $615,000 per day, from May to the end of September.
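Those two figures can be cross-checked against each other. The Python sketch below uses only the numbers reported above. The 90-day figure is a straight-line extrapolation at the same daily rate, included for scale only. It is an assumption, not a forecast from Consumers Energy or the DOE.

```python
TOTAL_COST_USD = 80_000_000  # reported cost through the end of September
COST_PER_DAY_USD = 615_000   # reported floor on the daily cost
ORDER_LENGTH_DAYS = 90       # length of the latest emergency order

# Number of days implied by the two reported figures.
implied_days = TOTAL_COST_USD / COST_PER_DAY_USD
print(round(implied_days))  # 130

# Illustrative only: cost of another 90 days if the daily rate held steady.
ninety_day_cost_usd = ORDER_LENGTH_DAYS * COST_PER_DAY_USD
print(f"${ninety_day_cost_usd:,}")  # $55,350,000
```

Roughly 130 days is consistent with a period running from late May through the end of September.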

An analysis from the consultancy Grid Strategies found that a broad application of the DOE’s emergency authority to the more than 60 gigawatts of aging coal, gas, and oil-fueled power plants likely to face closure by 2028 could add nearly $6 billion in costs to U.S. consumers by the end of President Donald Trump’s second term.

In the emergency order issued late Tuesday night, Energy Secretary Chris Wright stated, “I hereby determine that an emergency exists in portions of the Midwest region of the United States due to a shortage of electric energy, a shortage of facilities for the generation of electricity, and other causes.” The DOE has authority under Section 202(c) of the Federal Power Act to order utilities regulated by states to keep plants running under emergency circumstances.

But that claim of an emergency is belied by analysis from Consumers Energy and Michigan utility regulators, who determined in 2022 that the plant’s closure would not threaten grid reliability and that replacing it with fossil gas, solar, and battery resources could save customers $600 million through 2040.

The Midcontinent Independent System Operator (MISO), the entity that manages the transmission grid supplying power to about 45 million people in 15 states, including Michigan, has also determined that the J.H. Campbell plant is not necessary to maintain grid reliability.

“Michigan’s Public Service Commission, grid experts, and the utility all agree it’s time for Campbell to go,” Margie Alt, director of the Climate Action Campaign, said in a Wednesday statement.

Those reliability analyses focused on the summer months, when MISO’s grid is under the greatest stress from demand for electricity to support air-conditioning during heat waves. MISO does not face a near-term reliability challenge in winter months at present, according to a winter readiness assessment issued in October.

Aging coal plants are less reliable and more prone to unplanned outages than more modern power plants, according to a recent analysis of data from the North American Electric Reliability Corporation conducted by the Environmental Defense Fund. Environmental Protection Agency data shows that the J.H. Campbell plant stopped generating for multiple stretches this summer and fall, indicating it may not be a reliable resource during emergencies, Lenoff noted in a November interview.

“Mandating aging, unreliable and costly coal plants to stay open past their retirement is a guaranteed way to needlessly hike up Americans’ electricity bills and make air pollution worse,” Ted Kelly, director and lead counsel for U.S. clean energy at Environmental Defense Fund, said in a Wednesday statement.

The DOE may soon issue Section 202(c) orders requiring two coal plants set for closure this year in Colorado to stay running, according to reports.

The U.S. Energy Information Administration in February reported about 8.1 gigawatts of coal-fired capacity scheduled to retire this year, including the 1.8-gigawatt Intermountain Power Project in Utah, the 670-megawatt Unit 2 of the TransAlta Centralia plant in Washington state, and 847 megawatts of generation capacity at the R.M. Schahfer plant in Indiana.

Lenoff warned that the Trump administration appears “ready to issue additional orders to prevent the long-planned retirement of some of the dirtiest and oldest coal-burning power plants in the U.S. That amounts to a corporate bailout of coal at our expense.”

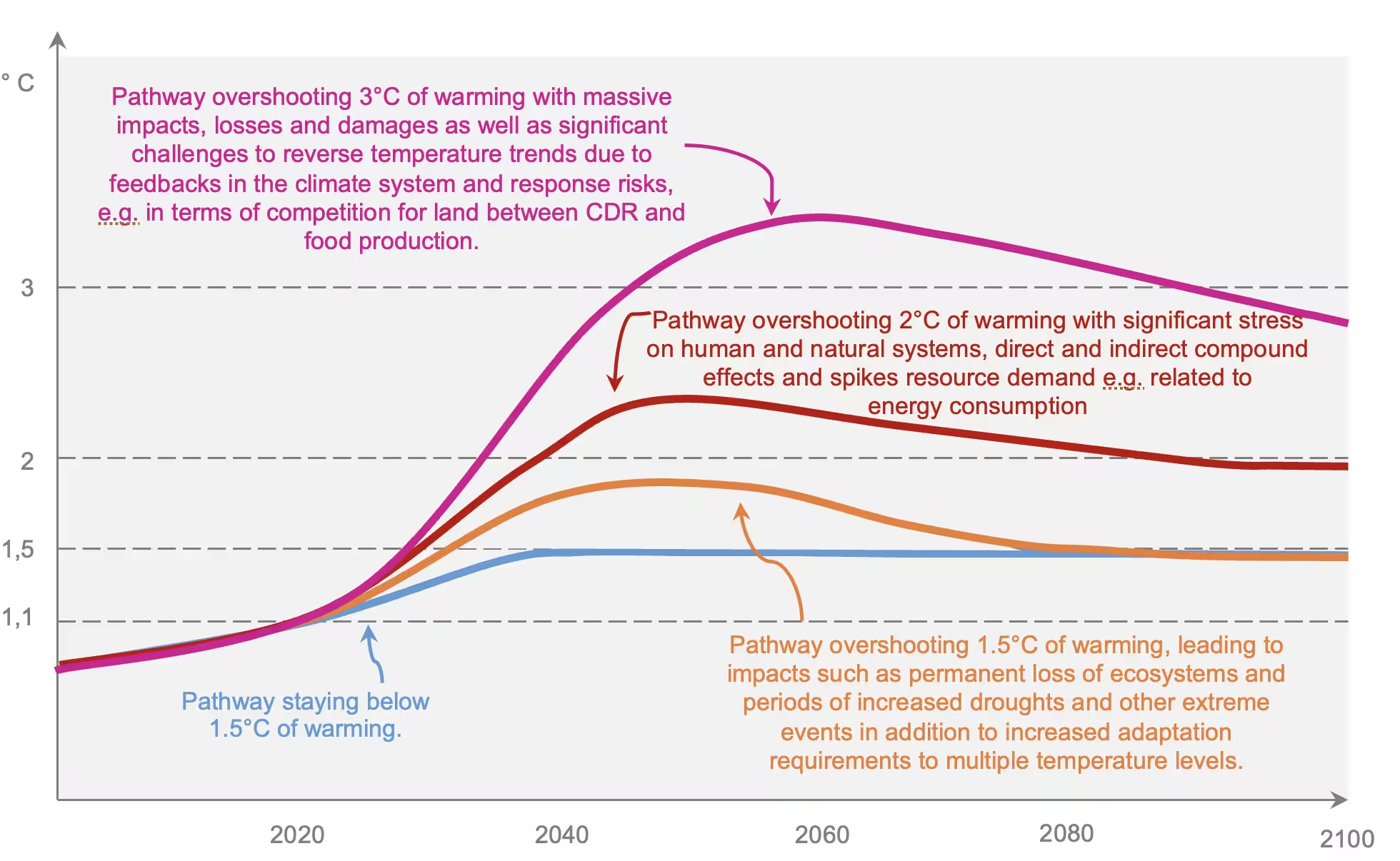

Climate change impacts of relevance for the humanitarian sector and beyond include (a) the timing of temperature exceedance, (b) the peak warming and (c) the duration of overshoot.

As global temperatures appear likely to surpass the Paris Agreement’s 1.5 °C target by 2050—and remain above it for decades—researchers warn that governments and aid organizations aren’t prepared for what this “climate overshoot” will mean for people and societies.

A perspective published today in PNAS Nexus suggests that while scientists have made progress describing overshoot’s physical impacts, its humanitarian and social consequences need greater focus. The authors call for urgent action to build the evidence, data and policy links needed to plan for decades of heightened, uneven climate risk before that window closes.

“Climate overshoot is no longer a distant possibility,” said lead author Andrew Kruczkiewicz, senior researcher at the Columbia Climate School’s National Center for Disaster Preparedness.

“Understanding how overshoot will affect people’s lives and livelihoods—before, during and after disasters—must be part of climate planning, policy and finance. To do otherwise is increasingly irresponsible, with the cost of doing nothing potentially creating tensions with the humanitarian principle of ‘do no harm.’”

The authors outline five interconnected factors linking Earth’s physical changes to social and humanitarian consequences.

Peak warming and duration. Even a short period of overshoot could lock in sea-level rise and other irreversible effects, while higher or longer warming peaks would magnify losses and strain the financial reserves that underpin recovery. Because systems like groundwater and ice sheets respond slowly to heat, some impacts could persist for centuries, even after temperatures stabilize.

Geography will shape who faces the greatest risks. The world won’t feel overshoot evenly: Regions warming faster than the global average are home to some of the world’s most socially and economically vulnerable populations. Limited capacity to prepare or recover means these communities may experience compounding crises, shifting where humanitarian needs are most acute and deepening inequities.

The timing of overshoot’s arrival. The rate of warming will determine whether systems can adapt in time. Rapid warming can outpace infrastructure and ecosystem adjustments, while slower change could allow for limited adaptation.

Adaptation limits and vulnerabilities. Overshoot could push some communities beyond the limits of feasible adaptation, creating cascading risks across food, water, health and energy systems. Implementing short-term fixes can lead to maladaptation, or solutions that solve one problem while creating others.

The path back down may bring new risks. Returning temperatures below 1.5 °C will require large-scale carbon dioxide removal, which could compete with agriculture, fisheries and livelihoods if these technologies repurpose land or ocean resources. Recovery paths with cycles of warming and cooling would also complicate disaster financing and planning. Policy discussions often fail to represent these variable recovery pathways, even though they would create different operational demands on the humanitarian sector.

To avoid widespread system strain, the authors stress the need for stronger links among climate science, social data and humanitarian operations. They recommend expanding research on the social and economic dimensions of overshoot, improving data from vulnerable areas and strengthening scenario planning that anticipates irreversible change and immediate threats.

“In a period of overshoot, the course our social and climate systems take will depend on how we respond to new and unpredictable shocks,” said coauthor Joshua Fisher, a Columbia University research scientist and director of the Columbia Climate School’s Advanced Consortium on Cooperation, Conflict, and Complexity.

“Because those shocks are difficult to predict, institutions will need stronger coordination and collaboration to stay flexible and respond effectively.”

Governments can reduce the risks associated with overshoot by accelerating emissions cuts to limit its magnitude and by investing in adaptation and early-warning systems that help communities cope with prolonged heat, food security pressures and other impacts. Without such foresight, countries could underestimate humanitarian needs or put resources toward responses that no longer fit future conditions.

Overshoot will also challenge how societies think about time and preparedness. Infrastructure designed for one hazard may prove inadequate for another, and the regions most at risk now may not be the same in the future. For example, a city that’s fortified its coast against storm surge could later face other types of flooding if rainfall patterns shift, demonstrating why adaptation approaches must evolve as conditions change.

Even if global efforts succeed in bringing temperatures below 1.5 °C, recovering from overshoot won’t be straightforward. How temperatures drop will matter as much as how high they rise: gradual cooling could ease adaptation pressures, but erratic cycles of warming and cooling could increase instability. Addressing this variability requires flexible approaches to risk reduction, disaster response, and infrastructure investment, as well as closer coordination between climate and humanitarian planning.

The authors emphasize that meeting the Paris Agreement target is essential, yet warn we must also prepare for the possibility of temporarily exceeding it. Planning for the distinct phases of overshoot is not about accepting failure, but about anticipating change and protecting those most at risk.

The article’s coauthors are Zinta Zommers, Perry World House, University of Pennsylvania; Joyce Kimutai, Kenya Meteorological Service and Imperial College London; and Matthias Garschagen, Ludwig-Maximilians-Universität München.

Restrictions on solar and wind farms are proliferating around the country, with scores of local governments going as far as to forbid large-scale clean-energy developments.

Now, residents of an Ohio county are pushing back on one such ban on renewables — a move that could be a model for other places where clean energy faces severe restrictions.

Ohio has become a hot spot for anti-clean-energy rules. As of this fall, more than three dozen counties in the state have outlawed utility-scale solar in at least one of their townships.

In Richland County, the ban came this summer, when county commissioners voted to bar economically significant solar and wind projects in 11 of the county’s 18 townships. Almost immediately, residents formed a group called the Richland County Citizens for Property Rights and Job Development to try to reverse the stricture.

By September, they’d notched a crucial first victory, collecting enough signatures to put the issue on the ballot. Next May, when Ohioans head to the polls to vote in primary races, residents of Richland County will weigh in on a referendum that could ultimately reverse the ban. It’s the first time a county’s renewable-energy ban will be on the ballot in Ohio.

From the very beginning, “it was just a whirlwind,” said Christina O’Millian, a leader of the Richland County group. Like most others, she didn’t know a ban was under consideration until shortly before July 17, when the commission voted on it.

“We felt as constituents that we just hadn’t been heard,” O’Millian said. She views renewable energy as a way to attract more economic development to the county while reining in planet-warming greenhouse gas emissions.

Brian McPeek, another of the group’s leaders and a manager for the local chapter of the International Brotherhood of Electrical Workers, sees solar projects as huge job opportunities for the union’s members. “They provide a ton of work, a ton of man-hours.”

Many petition signers “didn’t want the commissioners to make that decision for them,” said Morgan Carroll, a county resident who helped gather signatures. “And there was a lot of respect for farmers having their own property rights” to decide whether to lease their land.

While the Ohio Power Siting Board retains general authority over where electricity generation is built, a 2021 state law known as Senate Bill 52 lets counties ban solar and wind farms in all or part of their territories. Meanwhile, Ohio law prevents local governments from blocking fossil-fuel or nuclear projects.

The Richland County community group is using a process under SB 52 to challenge the renewable-energy ban via referendum. Under that law, the organization had just 30 days from the commissioners’ vote to collect signatures in support of the ballot measure.

All told, more than 4,300 people signed the petition, though after the county Board of Elections rejected hundreds of signatures as invalid, the final count ended up at 3,380 — just 60 more than the required threshold of 8% of the number of votes in the last governor’s election.
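The signature figures above imply a few numbers the article does not state outright. The short Python sketch below works them out. It assumes the 8% threshold was applied without rounding, so the implied vote total is an estimate, not an official count, and all variable and function names are illustrative.

```python
# Figures quoted in the article.
VALID_SIGNATURES = 3_380        # count after the Board of Elections review
MARGIN_OVER_THRESHOLD = 60      # "just 60 more than the required threshold"
THRESHOLD_PERCENT = 8           # share of votes in the last governor's election
SUBMITTED_AT_LEAST = 4_300      # "more than 4,300 people signed"


def required_signatures(valid: int, margin: int) -> int:
    """Signatures needed to qualify, derived from the final count and the margin."""
    return valid - margin


def implied_votes(required: int, percent: int) -> float:
    """Votes in the last governor's election implied by the threshold (assumes no rounding)."""
    return required * 100 / percent


needed = required_signatures(VALID_SIGNATURES, MARGIN_OVER_THRESHOLD)
votes = implied_votes(needed, THRESHOLD_PERCENT)
rejected_at_least = SUBMITTED_AT_LEAST - VALID_SIGNATURES

print(f"Required signatures: {needed:,}")
print(f"Implied votes in last governor's election: about {votes:,.0f}")
print(f"Signatures rejected: more than {rejected_at_least:,}")
```

Under these assumptions, the petition cleared the bar by less than 2% of the required total, which shows how close the review came to keeping the measure off the ballot.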

Although the Richland County ban came as a surprise to many, it was months in the making.

In late January, Sharon Township’s zoning committee asked the county to forbid large wind and solar projects there. After discussion at their Feb. 6 meeting, the county commissioners wrote to all 18 townships in Richland to see if their trustees also wanted a ban. A draft fill-in-the-blanks resolution accompanied the letter.

Signed resolutions came back from 11 townships. The commissioners then took up the issue again on July 17.

Roughly two dozen residents came to the July meeting, and a majority of those who spoke on the proposal were against it. Commissioners deferred to the township trustees.

“The township trustees who were in favor of the prohibition strongly believe that they were representing the wishes of their residents, who are farming communities, who are not fans of seeing potential farmland being taken up for large wind and solar,” Commissioner Tony Vero told Canary Media.

He pointed out that the ban doesn’t cover the seven remaining townships and all municipal areas. “I just thought it was a pretty good compromise,” he said.

The concerns over putting solar panels or wind turbines on potential farmland echo land-use arguments that have long dogged rural clean-energy developments — and which have been elevated into federal policy by the Trump administration this year. Groups linked to the fossil-fuel industry have pushed these arguments in Ohio and beyond.

“It’s a false narrative that they care about prime farmland,” said Bella Bogin, director of programs for Ohio Citizen Action, which helped the Richland County group collect signatures to petition for the referendum. Income from leasing some land for renewable energy can help farmers keep property in their families, and plenty of acreage currently goes to growing crops for fuel — not food. “We can’t eat ethanol corn,” she added.

Under Ohio’s SB 52, counties — not townships — have the authority to issue blanket prohibitions over large solar and wind farms, with limited exceptions for projects already in the grid manager’s queue.

In Richland County’s case, the commissioners decided to defer to townships even though they didn’t have to.

The choice shows how SB 52 has led to “an inconsistently applied, informal framework that has created confusion about the roles of counties, townships, and the Ohio Power Siting Board,” said Chris Tavenor, general counsel for the Ohio Environmental Council. Under the law, “county commissioners should be carefully considering all the factors at play,” rather than deferring to townships.

Even without a restriction in place, SB 52 lets counties nix new solar or wind farms on a case-by-case basis before they’re considered by the Ohio Power Siting Board. And when projects do go to the state regulator, counties and townships appoint two ad hoc decision-makers who vote on cases with the rest of the board.

As electricity prices continue to rise across Ohio, Tavenor hopes the state’s General Assembly will reconsider SB 52, which he and other advocates say is unfairly restrictive toward solar and wind — two of the cheapest and quickest energy sources to deploy.

“Lawmakers should be looking to repeal it and make a system that actually responds to the problems facing our electric grid right now,” he said.

Commissioner Vero, for his part, said he has mixed feelings about the referendum.

“It’s America, and if there’s enough signatures to get on the ballot, more power to people,” he said. However, he objects to the fact that SB 52 allows voters countywide to sign the petition, even if they don’t live in one of the townships with a ban, and said he hopes the legislature will amend the law to prevent that from happening elsewhere.

Yet referendum supporters say the ban matters for the entire county.

“It affects everybody, whether you live in a city, a township, or a village,” McPeek said. As he sees it, restrictions will deter investment from not only companies that build wind and solar but also those that want to be able to access renewable energy. “To me, it just is bad for the county — the whole county, not just one or two townships.”

Renewable-energy projects also provide substantial amounts of tax revenue or similar PILOT payments for counties, helping fund schools and other local needs. “I think it’s important for my children to have more clean electric [energy] and all the opportunities that go along with having wind and solar,” Carroll said.

Now that the referendum is on the ballot, the Richland County group will work to build more support and get out the vote next spring. “Education and outreach in the community is basically what we’re going to focus on for the campaign coming up in the next few months,” O’Millian said.

“So now it goes to a countywide vote, and the population of the county gets to make that decision, instead of three guys,” McPeek said.

Surging power demand from new data centers is reaching unprecedented — and potentially unrealizable — heights.

Over the next five years, U.S. utilities expect to see new electricity demand equal to 15 times New York City’s peak load, the majority of which will come from data centers.

So finds a report released Tuesday by Grid Strategies tracking the growth in power demand for data centers being built and planned to feed tech giants’ artificial intelligence ambitions. The consultancy’s tally of utility load forecasts indicates that peak grid demand will grow by 166 gigawatts by 2030, a sixfold increase from what was forecast three years ago.

“These are just phenomenal numbers for an industry that was built over the past couple of decades to handle much lower load growth,” said John Wilson, Grid Strategies’ vice president and the report’s lead author.

Data centers make up roughly 90 gigawatts of that forecasted load growth, a reflection of the hundreds of billions of dollars that tech giants are pouring into AI, as well as smaller but still substantial investments in crypto-mining operations, enterprise cloud computing, telecommunications, and other IT services.

Back in late 2023, Grid Strategies offered an early warning about how data centers were causing power demand to spike. It has also cautioned that outsize plans for growth in key U.S. data center hot spots are threatening to exceed the physical capacity of power plants and power grids. These factors could result in higher costs for consumers and more carbon emissions as utilities plan to ramp up fossil-fuel use to serve new demand.

But Grid Strategies and others have also counseled that utilities might be exaggerating things.

For one, there’s lots of double-counting going on; data center developers often pitch the same project in multiple utility jurisdictions while searching for the best deal. For another, the gold-rush quality of the data center boom is leading developers to make speculative proposals for projects that may never materialize.

For its new report, Grid Strategies compared utility forecasts with alternative methods of projecting data center load growth, such as industry analysis of technological bottlenecks, and found that utilities may be overstating data center demand by as much as 40%.

That discrepancy indicates how utility forecasts need to better reflect underlying uncertainties, Wilson said. This is particularly important for the rising number of data centers being planned that will use a gigawatt or more of power — the equivalent of a small city’s total power demand.

“The fact that these facilities are city-sized is a huge deal,” Wilson said. “That has huge implications if these facilities get canceled, or they get built and don’t have long service lives.”
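To see what a 40% overstatement could mean for the headline numbers, the Python sketch below discounts the data center portion of the forecast and leaves the rest untouched. It reads "overstating by as much as 40%" as meaning that up to 40% of the utilities' data center forecast may not materialize. That reading is an assumption, as are the function names, and the Grid Strategies report may define the figure differently.

```python
# Figures quoted in the article (gigawatts of forecast load growth by 2030).
TOTAL_GROWTH_GW = 166.0
DATA_CENTER_GROWTH_GW = 90.0
MAX_OVERSTATEMENT = 0.40  # "as much as 40%"


def discounted_forecast(forecast_gw: float, overstatement: float) -> float:
    """Scale a forecast down, assuming `overstatement` is the share of it that never appears."""
    if not 0.0 <= overstatement < 1.0:
        raise ValueError("overstatement must be in [0, 1)")
    return forecast_gw * (1.0 - overstatement)


def growth_range(total_gw: float, data_center_gw: float, max_overstatement: float):
    """Return (low, high) total load growth when only the data center share is discounted."""
    other_gw = total_gw - data_center_gw
    low = other_gw + discounted_forecast(data_center_gw, max_overstatement)
    return low, total_gw


low_gw, high_gw = growth_range(TOTAL_GROWTH_GW, DATA_CENTER_GROWTH_GW, MAX_OVERSTATEMENT)
print(f"Data center growth: {discounted_forecast(DATA_CENTER_GROWTH_GW, MAX_OVERSTATEMENT):.0f} "
      f"to {DATA_CENTER_GROWTH_GW:.0f} GW")
print(f"Total growth: {low_gw:.0f} to {high_gw:.0f} GW")
```

Even at the low end of this range, the growth is still many times what utilities were forecasting three years ago. That is why the report treats uncertainty as a planning input and not as a reason to dismiss the forecasts.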

Utilities are using their sky-high forecasts to justify massive investments in power plants and grid infrastructure around the United States.

Those forecasts, in turn, have already driven up utility bills in some regions, including those for many of the more than 67 million people served by PJM Interconnection, the country’s biggest energy market. For PJM, future data center forecasts have driven capacity costs — the prices paid to power plants and other grid resources to meet peak grid demand — from $2.2 billion in 2023 to $14.7 billion in 2024 and to $16.1 billion in this summer’s capacity auction.

PJM customers in heavily impacted states like New Jersey are taking notice. Popular anger at rising bills helped propel Democratic gubernatorial candidates who pledged to combat increasing power costs to outsize wins in New Jersey and Virginia elections earlier this month.

Many utilities aim to meet this surging demand by building new fossil-gas-fired power plants, which could not only increase costs for customers but also slow down the transition to clean energy. Across much of the Southeast and the Midwest, in particular, utilities aim to build gigawatts’ worth of these power plants, which emit carbon dioxide as well as toxic air pollution.

In Virginia, home of the world’s largest data center hub, Dominion Energy is proposing gigawatts of new gas-fired power that, critics warn, could make it impossible for the utility to meet a state-mandated phaseout of fossil fuel use by 2045. The utility argues that the plants are needed to maintain reliability in the face of data center growth.

Meanwhile, in Georgia, major utility Georgia Power is seeking regulator permission to build gigawatts of gas-fired power capacity to meet load forecasts swollen by proposed data centers. Opponents fear that the plants will balloon already fast-rising utility bills, and this month voters overwhelmingly elected to the state’s Public Service Commission two Democratic challengers who ran on a platform of constraining unchecked utility spending.

Elsewhere, state lawmakers, regulators, and data center developers are seeking ways to accommodate growth without overwhelming the grid and utility customers.

In Texas, the country’s second-hottest data center market after Virginia, “large load” forecasts within the territory served by the Electric Reliability Council of Texas (ERCOT) have nearly quadrupled over the past year, representing a potential doubling of its peak demand. The state legislature passed a law this spring that requires new data centers to disconnect at moments of peak grid stress, although the rules for how that will happen are still being worked out by ERCOT and state regulators.

Other states are also passing laws and instituting regulations aimed at forcing data center developers to bear the cost of new power plants and grid infrastructure. And some data center companies are promising to shift when they use power in order to relieve peak grid strains that drive much of the costs that utilities face. PJM, for its part, is considering new rules aimed at requiring new data centers to reduce their impact on the region’s capacity costs, although consumer and environmental advocates say the grid operator’s proposed plans don’t go far enough.

The Grid Strategies report also highlights that U.S. utilities and grid operators haven’t yet committed to expanding the transmission grid at the scale needed to support the growing electrification of vehicles, buildings, and industries — however the data center demand plays out. “Even conservative growth trajectories outpace recent years and would require substantial grid expansion to accommodate,” it notes.

Ultimately, Wilson suggested that utilities, lawmakers, and regulators will need to make sure the cost of meeting whatever demand does materialize is not shifted to everyday customers.

“We’ve got a gigantic amount of additional load over the next five years to manage from a supply-chain, planning, and construction standpoint,” he said. “These are questions that regulators and intervenors should be asking, and not just trusting the utilities, who say, ‘This is the way we’ve always done it.’”

The data-center boom is pushing electricity costs to the breaking point for PJM Interconnection — the country’s biggest grid operator, serving more than 65 million people from the mid-Atlantic coast to Illinois — and that’s fueling a popular backlash.

Democratic gubernatorial candidates pledging to combat rising utility bills just won landslide victories in New Jersey and Virginia, two states bearing much of the brunt of data-center-driven cost increases. Congress members along with state governors and lawmakers are demanding that PJM take action.

PJM is poised to make a key decision this week on a fast-track process to get data centers online quickly while mitigating the impact of the facilities, which can use as much power as small cities. But a conflict has emerged over how far the grid operator can go. It boils down to this: Can PJM force data centers to stop using electricity at moments when demand for power peaks?

Data-center trade groups say no. But a growing number of politicians and environmental and consumer advocates say that requiring data centers to be the first to get disconnected from power during grid emergencies is the only surefire way to protect customers.

Last week, a bipartisan coalition of state legislators representing many of the 13 states served by PJM submitted its Protecting Ratepayers Proposal, which argues for data centers to be allowed to connect to PJM’s grid with the stipulation that they will be “‘interrupted’ during grid emergencies until they bring their own new supply.”

“We have a responsibility to ensure that technological growth doesn’t push vulnerable residents into financial hardship or enable a massive transfer of wealth from ratepayers to data centers,” said Maryland state Sen. Katie Fry Hester, a Democrat and organizer of the coalition, in a press release introducing the proposal.

“This proposal is about fairness and responsibility,” added Illinois state Sen. Rachel Ventura, also a Democrat. “We’re making sure data centers carry the cost of their own energy demands instead of passing it on to the public.”

That’s a salient concern, because the peak power needs of data centers are what’s driving electricity costs through the roof in PJM territory.

The grid operator must secure enough capacity from power plants and other resources to serve its peak loads. The prices of securing that capacity have skyrocketed in the past two years, from $2.2 billion in 2023 to $14.7 billion in 2024 and to $16.1 billion in PJM’s latest capacity auction this summer.

Growing demand forecasts of yet-to-be-built data centers are the primary culprit for these price spikes, and constitute the “core reliability issue facing PJM markets at present,” according to an August report from Monitoring Analytics, the company tasked with tracking PJM’s markets. “There is still time to address the issue but failure to do so will result in very high costs for other PJM customers,” the report warns.

Utility bills are rising across much of the U.S. due to a combination of factors, including volatile fossil-gas prices and the expense of repairing and expanding power grids. Data-center growth is not directly increasing costs in most regions yet, but in PJM, utility customers’ bills already reflect the capacity cost increases tied to serving future data centers.

Groups including consumer advocates in Maryland and the Natural Resources Defense Council agree that requiring new data centers to get cut off first during grid emergencies is a vital backstop to the suite of interventions PJM is considering for its fast-track process.

“We’re proposing to allow data centers to join PJM’s grid as fast as they want, but not guarantee them firm service, so they’ll be given interruptible service until they bring their own capacity,” Claire Lang-Ree, clean-energy advocate at the Natural Resources Defense Council and coauthor of the environmental group’s proposal, explained during an Oct. 22 webinar. “We think that’ll solve both the cost and reliability problem, because by removing all these large loads out of the capacity market until they bring their own supply, … capacity prices might go back down to historic levels.”

PJM’s Members Committee is expected to vote Wednesday on a final advisory recommendation to send to the grid operator’s board of managers. PJM has said it intends to file a proposal in December with the Federal Energy Regulatory Commission, in hopes of gaining approval to institute changes in 2026.

Data-center companies and utilities are not happy about mandatory power cutoffs for new computing facilities, however — and their arguments have so far carried the day at PJM.

In August, PJM issued a “conceptual proposal” that included a “non-capacity-backed load” (NCBL) structure. The approach would force loads of 50 megawatts or larger to curtail power use to forestall grid emergencies as a precondition to interconnection.

That proposal was lambasted by the Data Center Coalition, a trade group that includes Google, Microsoft, Meta, Amazon, and dozens of other companies that own, operate, or lease data-center capacity. In comments to PJM, the coalition warned that by imposing NCBL status on data centers, the grid operator “risks exceeding its jurisdictional authority” over customer interconnection and interruptibility status, which are generally managed by utilities regulated at the state level.

“PJM has not provided a defensible rationale for creating this new class of service, and on its face the proposal is unduly discriminatory,” the coalition wrote.

PJM responded by pulling the NCBL concept from its next round of proposals, instead offering new data centers a voluntary method to commit to curtailing their peak power use through tweaks to a structure called “price-responsive demand,” or PRD.

As PJM explained in an October update, “With these changes, PRD becomes similar to voluntary NCBL,” since data centers that opt in would be exempt from paying for capacity but be obligated to “reduce demand during stressed system conditions.”

The big question is whether data-center developers will choose to act at the scale required to “move the needle,” as analytics firm ClearView Energy Partners put it in a November research note. The authors wrote that, according to their observations in recent PJM meetings, “it’s far from clear whether new large load[s] would take service via this voluntary program.”

Consumer advocates aren’t happy with the data-center industry’s resistance to mandatory controls. Clara Summers, campaign manager for the Citizens Utility Board, an Illinois-based consumer-advocacy group, told Canary Media that the Data Center Coalition’s positions “are generally disappointing, given how some individual members of the DCC have shown a willingness to hammer out decent solutions that actually take responsibility for their own costs.”

Summers is referring to a handful of efforts by tech giants and data-center developers to use their own capacity resources to reduce their grid impacts. One such rare example is an agreement Google reached in August with PJM utility Indiana Michigan Power that commits the tech giant to bringing additional new capacity online and lowering power use during times of peak demand to alleviate the impacts of expanding a massive data center in Fort Wayne, Indiana.

Most of the groups submitting proposals to PJM agree that its new rules should enable data centers to fast-track development by paying for generation and other capacity resources to serve their own needs. Stakeholders also agree that data centers that can use less power during times of peak demand should be rewarded for the relief that would provide to PJM’s system.

The Data Center Coalition has also won backing from the governors of Maryland, New Jersey, Pennsylvania, and Virginia, four states in PJM territory courting data centers for economic development. Those governors joined the coalition in submitting a proposal for the fast-track process that would task state regulators with expediting interconnection for data centers that can add enough new generation capacity to the grid to cover their energy demand at the time they are connected.

But groups arguing for mandatory restrictions say these alternatives may not take effect quickly enough to prevent data-center growth from outpacing the capacity of PJM’s grid.

PJM’s notoriously backlogged interconnection queue is impeding the addition of new power plants to the system. The grid operator’s efforts to fast-track new generation resources have yielded only a handful of projects expected to come online before 2030.

PJM is still in the early stages of developing options to add capacity to existing generators, such as pairing batteries with solar and wind farms. And proposals that let data-center developers tap into the flexibility of virtual power plants remain a work in progress.

Meanwhile, PJM’s grid is only just beginning to feel the pressures of data-center expansion. The latest forecasts of large-load growth across PJM territory show 32 gigawatts of additional demand by 2028 and about 60 gigawatts by 2030, or a 37% increase from PJM’s peak load today, according to the Maryland Office of People’s Counsel, the state’s consumer advocate.

The sheer scale of proposed data-center construction beggars belief. To meet that projected demand, “by 2028, [developers] would have to be investing about $1 trillion within PJM in the next three to four years,” David Lapp, who leads the Maryland office, said during a press conference last month. “That’s an insane amount of money.”

Many groups are arguing to keep price caps on PJM’s capacity auction in place to mitigate the pass-through costs of rising data-center demand. They’re also pushing for PJM to order utilities to more stringently clear their load forecasts of speculative or redundant data-center applications, which experts agree are inflating expectations of how much load utilities and grid operators will have to serve.

But utilities, power-plant owners, data-center developers, and the tech giants spurring the AI boom have little reason to constrain these outsized growth plans, or to concede to restrictions on their peak power use, Lapp said. These are “some of the most powerful corporations in the world, all increasing their bottom line on the backs of existing customers,” he said.

As the 20th century ended, the National Academy of Engineering chose the top 20 engineering achievements of the past 100 years. At the top of the list was electrification, which beat out space travel, automobiles, computers, and the internet.

The 21st century may also be defined by electricity. The future unfolding before our eyes — from advances in artificial intelligence (AI) and automation to the electrification of transportation — depends on vast and growing quantities of electricity. The International Energy Agency (IEA) expects global electricity consumption to grow by nearly 4% annually through 2027 and declared the world is entering a “new Age of Electricity.”

With the world increasingly dependent on electricity, grid resilience is essential. Unfortunately, threats to grid resilience are quickly growing in both volume and seriousness. Extreme weather events, for instance, are now more frequent and powerful. The U.S. experienced an average of 23 natural disasters causing at least $1 billion in damages each year between 2020 and 2024, compared with just nine per year over the prior three decades.

Other challenges to a resilient grid include the influx of distributed energy resources (DERs), such as rooftop solar, energy storage, and electric vehicle (EV) charging, which can create two-way power flows, overload local feeders, and cause voltage fluctuations that strain grids. As the volume of DERs has spiked, so too has the threat from cybercriminals, who take advantage of the increased attack surface that so many grid-connected assets provide. The number of cyberattacks on U.S. utilities increased by 70% from 2023 to 2024.

The avalanche of threats to the grid was enough for North American Electric Reliability Corporation President Jim Robb to warn of a “five-alarm-fire” for grid reliability. And when the grid is not resilient to growing threats, there are real-world consequences.

Between 2000 and 2023, for example, 80% of all major power outages were due to weather — primarily extreme weather including severe winds and thunderstorms, winter storms, and hurricanes. A recent study published in the journal Nature Communications found that one-, three-, and 14-day power interruptions reduce GDP in the area impacted by $1.8 billion, $3.7 billion, and $15.2 billion, respectively.

Utilities understand the importance of a resilient grid and have long been focused on improving their System Average Interruption Duration Index (SAIDI) and System Average Interruption Frequency Index (SAIFI) scores. However, the existing tools and approaches to resilience planning and operations are inadequate to today’s challenges. Siloed outage management systems (OMS), supervisory control and data acquisition (SCADA) systems, and geographic information systems (GIS) combined with advanced metering infrastructure (AMI) data, static studies, and limited DER visibility result in fragmented, slow, and ultimately inadequate approaches to resilience.
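
For readers unfamiliar with the two indices, here is a minimal sketch of how they are typically computed under the standard IEEE 1366 definitions: SAIFI is total customer interruptions divided by customers served, and SAIDI is total customer-minutes of interruption divided by customers served. The outage records and the customer count below are hypothetical, chosen only to illustrate the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class Outage:
    customers_interrupted: int
    duration_minutes: float


def saifi(outages, customers_served):
    """Average number of interruptions per customer served."""
    return sum(o.customers_interrupted for o in outages) / customers_served


def saidi(outages, customers_served):
    """Average interruption minutes per customer served."""
    customer_minutes = sum(
        o.customers_interrupted * o.duration_minutes for o in outages
    )
    return customer_minutes / customers_served


# Hypothetical year of sustained outages for a utility with 50,000 customers.
outages = [Outage(1200, 90.0), Outage(300, 45.0), Outage(5000, 240.0)]
customers_served = 50_000

saifi_value = saifi(outages, customers_served)
saidi_value = saidi(outages, customers_served)
print(f"SAIFI: {saifi_value:.2f} interruptions per customer")
print(f"SAIDI: {saidi_value:.2f} minutes per customer")
```

Both scores are system-wide averages, so a single long outage affecting many customers (the third record here) dominates SAIDI while barely changing how the score reflects conditions on any one feeder.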

A modern approach to grid resilience

The key paradigm shift that utilities need to make is to go from imprecise and reactive resilience strategies to proactive planning driven by full grid visibility and sophisticated data analysis. Software platforms with access to OMS, SCADA, GIS, and other utility data sources make that shift possible by providing a foundation for a comprehensive analysis, which is impossible to do when information is siloed.

With comprehensive data, software can perform three types of analysis that are essential to grid resilience in today’s complex environment:

The best software platforms don’t just identify risks and vulnerabilities. They also translate results from analysis into recommendations for improved resilience through infrastructure investments, operational changes, demand response, strategic DER deployment, and other measures.

Transmission and distribution system operators worldwide face an increasingly common resilience challenge. “We are seeing massive increases in load from the electrification of vehicles and heating and cooling systems, as well as from data centers, coupled with increased variability from renewable energy. This is creating a greater need for analytical and optimization software solutions,” said John Dirkman, vice president of product management at Resource Innovations, whose Grid360 software platform provides contingency, sensitivity, forecasting, and critical-load analysis to help utilities understand and address resilience challenges.

Recently, a large utility in Europe worked with Resource Innovations to model various loading scenarios, including what would happen if 10% or more EVs began charging in specific neighborhoods. The analysis identified where the added EVs would strain transformers and feeders and then produced a system heat map showing where the grid would be most vulnerable.
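
A loading scenario of this kind can be sketched in a few lines. The example below is an illustrative simplification, not the method used by Grid360 or the utility: the transformer data, the 7.2 kW charger size, the 50% coincidence factor, and the unity power factor (treating kW as kVA) are all assumptions made for the sake of the example.

```python
from dataclasses import dataclass


@dataclass
class Transformer:
    name: str
    rating_kva: float      # nameplate rating
    base_peak_kva: float   # existing peak load without new EVs
    homes_served: int


def loading_with_evs(t, ev_share, charger_kw=7.2, coincidence=0.5):
    """Per-unit loading if a share of homes adds an EV charger.

    Assumes unity power factor, so charger kW is treated as kVA.
    """
    ev_kva = t.homes_served * ev_share * charger_kw * coincidence
    return (t.base_peak_kva + ev_kva) / t.rating_kva


def flag_overloads(transformers, ev_share, limit=1.0):
    """Return (name, loading) pairs above the limit, worst first."""
    results = [(t.name, loading_with_evs(t, ev_share)) for t in transformers]
    overloaded = [r for r in results if r[1] > limit]
    return sorted(overloaded, key=lambda r: r[1], reverse=True)


# Hypothetical neighborhood transformers.
fleet = [
    Transformer("T1", rating_kva=100, base_peak_kva=80, homes_served=40),
    Transformer("T2", rating_kva=50, base_peak_kva=45, homes_served=20),
]

flagged = flag_overloads(fleet, ev_share=0.10)
for name, loading in flagged:
    print(f"{name}: {loading:.1%} of rating")
```

Ranking every transformer by projected loading is the tabular equivalent of the heat map described above; a production study would use measured load profiles and a full power-flow model rather than a single coincidence factor.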

The software also provided recommendations to address potential problems. It analyzed the utility’s urban distribution system, where much of the infrastructure is underground and grid upgrades would be expensive and disruptive. The analysis suggested alternative actions to provide increased flexibility: demand response to reduce peak load, battery storage or vehicle-to-grid capabilities to provide localized system backup, and strategic placement of switches and power electronic devices to shift load between feeders.

While utilities around the world face different specific threats to grid resilience, the value that software can deliver in analyzing the grid for risks and suggesting tangible action is similar. For instance, in wildfire-prone regions, software allows utilities to systematically assess vulnerability by overlaying grid networks onto topographical and fire-potential maps.

This highlights the transmission and distribution lines that face the highest wildfire risk — in places like California, that is often in difficult-to-access canyon areas. Contingency analysis provides valuable intelligence, such as alternative routes if a line goes down during a fire and how much load those backup options can serve. Analysis can also suggest where backup generation or storage is most needed.
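
The core question in that kind of contingency analysis can be illustrated with a toy network: remove one line, then find the best remaining route to the affected load and the most power that route can carry. The sketch below is a deliberately simplified single-path search, not a power-flow study, and the bus names and line capacities are hypothetical.

```python
def best_backup_route(lines, source, load_bus, failed_line):
    """Find the highest-capacity single path that avoids the failed line.

    lines maps (bus_a, bus_b) to a capacity in MW. Returns a tuple of
    (bottleneck_mw, path), or (0.0, None) if the load is islanded.
    """
    adjacency = {}
    for (a, b), capacity in lines.items():
        if failed_line in ((a, b), (b, a)):
            continue  # take the failed line out of service
        adjacency.setdefault(a, []).append((b, capacity))
        adjacency.setdefault(b, []).append((a, capacity))

    best = (0.0, None)
    stack = [(source, [source], float("inf"))]
    while stack:
        bus, path, bottleneck = stack.pop()
        if bus == load_bus:
            if bottleneck > best[0]:
                best = (bottleneck, path)
            continue
        for neighbor, capacity in adjacency.get(bus, []):
            if neighbor not in path:  # simple paths only
                stack.append(
                    (neighbor, path + [neighbor], min(bottleneck, capacity))
                )
    return best


# Hypothetical network: two routes from a substation to a canyon community.
lines = {
    ("Sub", "A"): 50,
    ("A", "Canyon"): 40,
    ("Sub", "B"): 30,
    ("B", "Canyon"): 25,
}
canyon_load_mw = 35

capacity_mw, route = best_backup_route(
    lines, "Sub", "Canyon", failed_line=("A", "Canyon")
)
served_mw = min(capacity_mw, canyon_load_mw)
print(f"Backup route: {route}, serves {served_mw} of {canyon_load_mw} MW")
```

In this example the backup route covers only part of the load, and the shortfall is the kind of gap that points to where backup generation or storage would be most useful.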

One example of software that does scenario planning by integrating data from multiple utility systems to model the grid under stressed conditions is Resource Innovations’ Grid360 Grid Impact Assessment System (GIAS). The web-based platform allows utilities to simulate everything from wildfire impacts to DER integration challenges and cyberattacks. This provides system planners and operators with real-time visualization and forecasting tools to prevent predicted problems with voltage, loading, and power quality before they occur. Grid360 GIAS also integrates with Resource Innovations’ iEnergy platform for interconnection, demand-side management, and demand response, allowing utilities to coordinate both infrastructure investments and customer-side resources to strengthen resilience.

“Our software allows the utility to model the grid and find ways to provide power under any scenario,” Dirkman said. “That kind of planning can be done either on a very specific location basis or network-wide.”

There is little chance that threats to grid resilience will diminish in the future, and the consequences of inadequate resilience can be measured in billions of dollars and growing risks to public safety. It is not a time to rely on reactive approaches. Software platforms that analyze unique vulnerabilities and recommend solutions give utilities the tools they need to act proactively and ensure the grid remains reliable as the world enters its new age of electricity.